The Laws of Startup Physics Have Changed: Ben Horowitz on Investing and Entrepreneurship in the AI Era

Guest: Ben Horowitz, co-founder of a16z (Andreessen Horowitz), author of The Hard Thing About Hard Things

Source: Why The Laws of Startup Physics Have Changed | Duration: 62 minutes

Full transcript: Complete transcript with speaker identification

Introduction

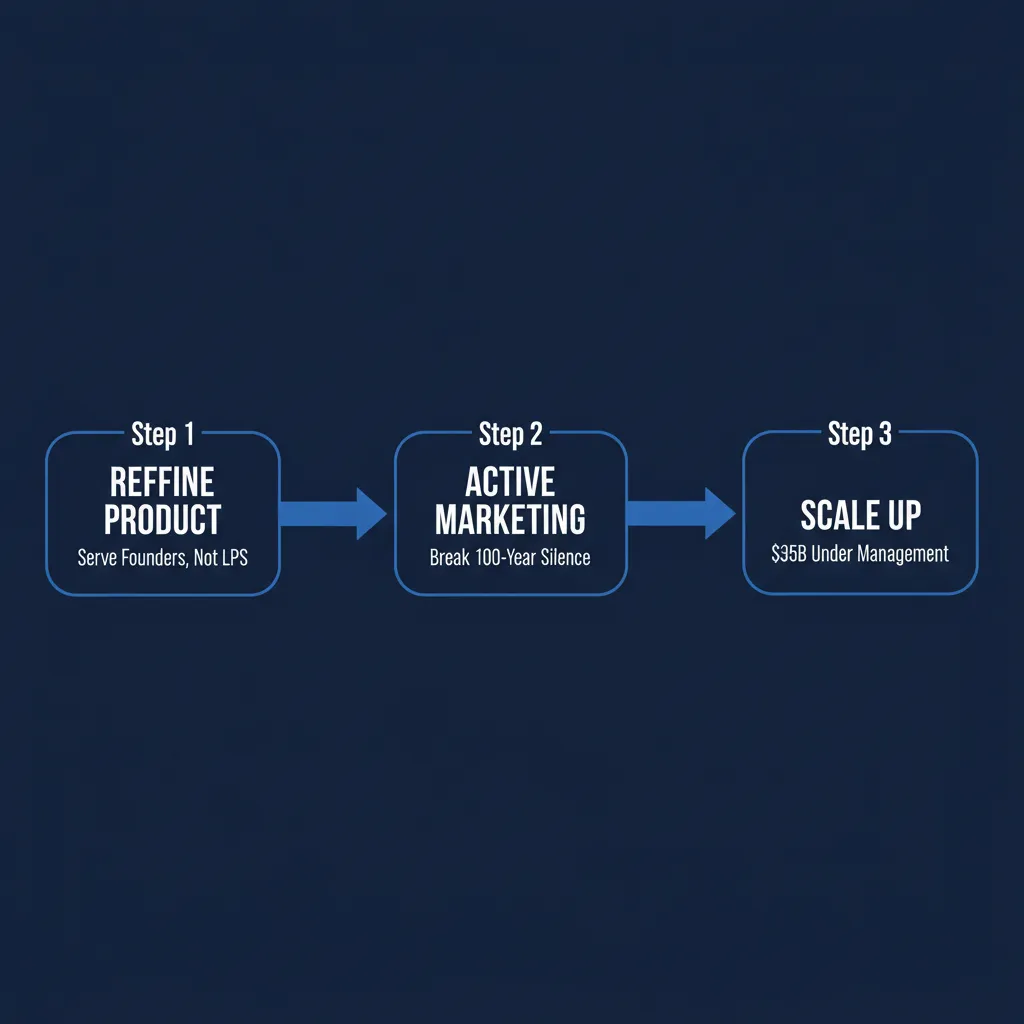

When Ben Horowitz and Marc Andreessen founded a16z in 2009, no new venture capital firm had broken into the top tier since Benchmark in 1995. Seventeen years later, a16z manages over $35 billion in assets, covering nearly every technology sector from crypto to biotech, and has become one of Silicon Valley’s most influential investment institutions.

In this Invest Like The Best interview, Horowitz goes beyond investment advice. He puts forward a bigger thesis: AI is changing the fundamental physics of the startup game. For the past fifteen years, “a small team builds a great product and a big company can’t catch them no matter how much money they throw at it” has been an iron law of the startup world. Now that iron law is being broken.

Three core takeaways from this episode:

- The changing physics of startup building — Why having GPUs and data lets you catch anyone, and what this means for investing and entrepreneurship

- Inequality is a feature, not a bug — Horowitz’s provocative take on AI’s social impact

- From Bushido to corporate culture — The management philosophy that “culture is not a set of ideas, it’s a set of actions”

“With Data and GPUs, You Can Solve Damn Near Anything”

If you’ve ever built a software company, you know one unshakable rule: you cannot throw money at a technology problem. A great product built by a three-person team over three years cannot be caught by Google hiring two thousand engineers. This was the entrepreneur’s ultimate moat, and the fundamental reason VCs were willing to bet early.

Horowitz believes this iron law has broken down.

“Now if you have the data and you have enough GPUs, you can solve damn near anything.”

He cites Elon Musk’s entry into the large model competition: with massive capital, excellent data center design, and a group of smart engineers, Musk entered the first tier of the AI race in remarkably short time. “That would have never happened in the past.”

This shift cuts both ways for investors. On one hand, AI companies are growing revenue at unprecedented speed. Cursor, ostensibly an IDE tool, reached revenue levels that previous products in the same category might have needed over a decade to achieve. On the other hand, moats have grown shallower — if someone can catch you quickly with capital, how much is a first-mover advantage really worth?

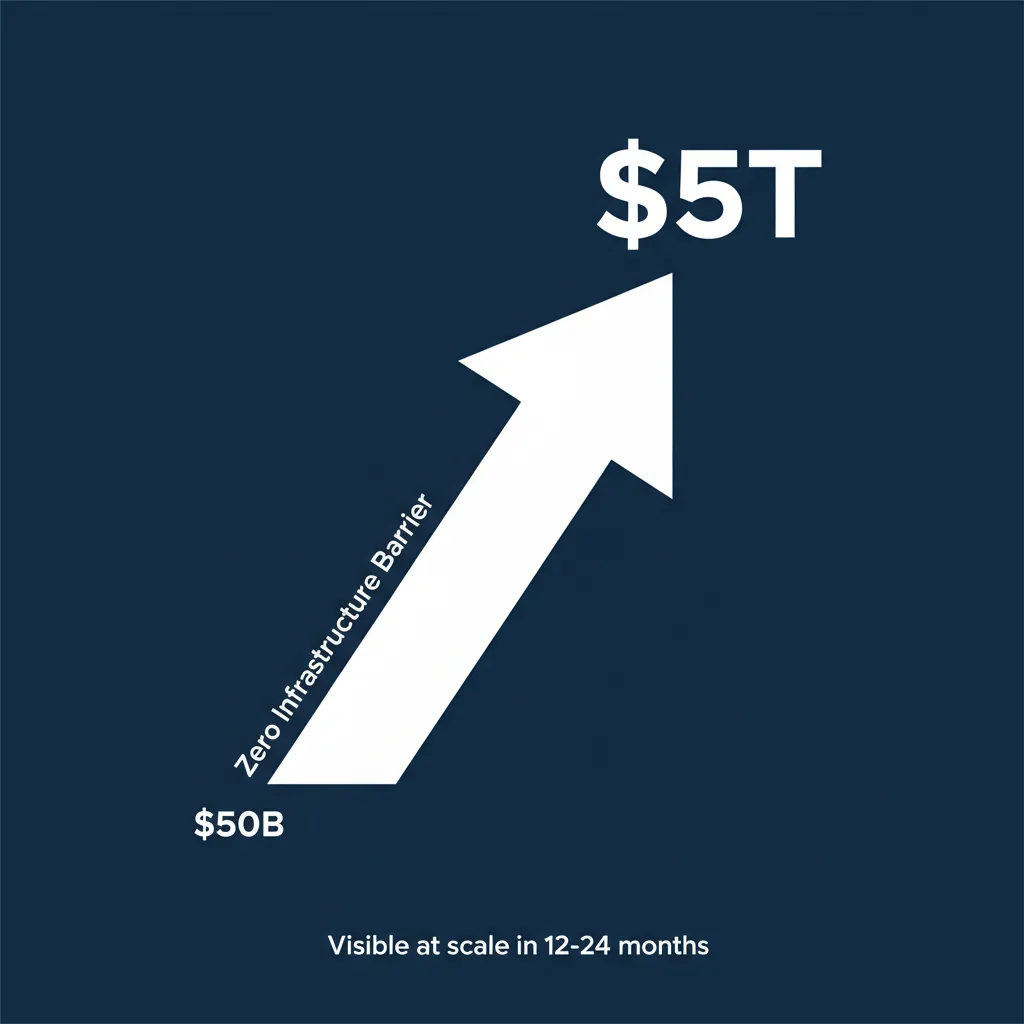

“These are concepts we’ve not dealt with,” Horowitz says. “On the one hand, what if this market wasn’t $50 billion — what if it was $5 trillion? And on the other hand, what if somebody could catch you?”

For entrepreneurs, this means shorter windows but larger addressable markets. For investors, past valuation models may need fundamental rethinking.
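The trade-off Horowitz describes (a far larger market against a higher chance of being caught) can be made concrete with a rough expected-value sketch. Only the $50 billion and $5 trillion market sizes come from the interview. The market share and the probabilities of keeping the lead are hypothetical placeholders chosen for illustration, and the function name is likewise an assumption.

```python
def expected_outcome_usd(market_size_usd: float,
                         share_if_leading: float,
                         p_keep_lead: float) -> float:
    """Expected revenue pool captured: market size x share x chance of staying ahead."""
    return market_size_usd * share_if_leading * p_keep_lead


# Market sizes are the figures quoted in the interview.
# The 20% share and both probabilities are hypothetical, not from the source.
old_world = expected_outcome_usd(50e9, share_if_leading=0.20, p_keep_lead=0.90)
new_world = expected_outcome_usd(5e12, share_if_leading=0.20, p_keep_lead=0.30)
ratio = new_world / old_world

print(f"Old regime: ${old_world / 1e9:.0f}B expected")
print(f"New regime: ${new_world / 1e9:.0f}B expected")
print(f"Ratio: {ratio:.1f}x")
```

Under these made-up inputs, the larger market outweighs the weaker moat by a wide margin. The conclusion depends heavily on the assumed probabilities, which is the uncertainty Horowitz is pointing to.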

Market Size: From $50 Billion to $5 Trillion

Horowitz repeatedly emphasizes an underappreciated fact: deploying AI requires no new infrastructure.

Cars needed roads and traffic lights. The internet needed fiber in the ground and smartphone adoption. These infrastructure build-out cycles meant decades between invention and mass adoption. AI is different — the internet is already here. If you want to apply AI to your business, “you just do it.”

This zero-infrastructure barrier has two consequences. First, technology penetration is far faster than any previous revolution — Horowitz expects the impact to become broadly visible within 12 to 24 months. Second, the potential market size dwarfs historical software markets.

“There’s almost no problem you can’t address with AI,” he says. “Education? We’ve got an AI solution. Cancer? We’ve got an AI solution. That’s a real new phenomenon.”

On the investment side, this has created a new challenge: the extreme scarcity of AI researchers. Horowitz describes the field as almost “alchemistic” — if you haven’t led large model training at Google, Facebook, OpenAI, or Anthropic, you probably don’t know how to do it, because schools can’t teach this. There may be only 40 people in the world who truly have this capability.

“It’s the first time we’ve had a need for a technologist that academia couldn’t produce,” Horowitz says. When your company has a $4 trillion market cap and only 40 people in the world can do the job, spending $100 million to recruit one researcher starts to make mathematical sense.
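As a back-of-the-envelope check on that “mathematical sense,” the sketch below expresses the recruiting cost as a share of market cap. The three figures are the ones quoted above. Treating them as exact, and comparing a one-off cost against market cap rather than against earnings, are simplifying assumptions.

```python
# Figures quoted in the article; treating them as exact is an assumption.
MARKET_CAP_USD = 4_000_000_000_000   # "$4 trillion market cap"
RECRUITING_COST_USD = 100_000_000    # "$100 million to recruit one researcher"
QUALIFIED_RESEARCHERS = 40           # "only 40 people in the world"


def cost_as_share_of_market_cap(cost_usd: float, market_cap_usd: float) -> float:
    """Return a cost as a fraction of market capitalization."""
    return cost_usd / market_cap_usd


share = cost_as_share_of_market_cap(RECRUITING_COST_USD, MARKET_CAP_USD)
hire_all_share = share * QUALIFIED_RESEARCHERS

print(f"One researcher: {share:.4%} of market cap")
print(f"All {QUALIFIED_RESEARCHERS} researchers: {hire_all_share:.2%} of market cap")
```

On these numbers, a single $100 million hire is a few thousandths of a percent of market cap, and hiring every qualified researcher at that price would still be about a tenth of a percent.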

Inequality Is a Feature, Not a Bug

The most controversial take in the interview comes during the discussion of AI’s impact on social inequality.

Horowitz uses what he calls the “Kobe Bryant effect” to explain how technology amplifies inequality. When basketball was invented, a player’s income was limited to however many spectators could show up. Television and global broadcasting made it possible for LeBron James to become a billionaire. The internet gave entrepreneurs global distribution, and AI layers on top of that — “whatever the Internet company was, plus plus plus.”

But his core argument is that this inequality isn’t a bad thing, because AI is simultaneously the greatest equalizer of opportunity in history.

“Every child can have a super advanced amazing tutor teacher. Great education is accessible to all now.”

Horowitz explicitly rejects the “permanent underclass” narrative popular on Twitter. He draws an analogy to crypto — many who made fortunes in the space started with virtually no capital; they simply entered a high-growth field early. “If you bought Bitcoin for a nickel, you did really well, and all you needed was a nickel.”

His rebuttal to predictions of AI-driven mass unemployment is equally direct:

“ImageNet was 2012, ChatGPT was 2022. Where’s all the job destruction? Why hasn’t it happened yet? And why are you so fucking sure it’s going to happen next? And why are you so sure no jobs are going to be created?”

This rebuttal is forceful but has a blind spot. Agricultural automation took over a century to complete the employment transition, while AI’s replacement of cognitive work could happen within a single generation — the timescales are fundamentally different.

“A Bad Government Can Ruin the Whole Thing”

Horowitz’s political-economic views have a clear intellectual origin: his father.

His grandparents were Communist Party members, fired during the McCarthy era for their affiliation. His father, David Horowitz, was editor of the famous new-left magazine Ramparts, involved with the Black Panthers, and later made a complete turn to the right. This family history shaped Horowitz’s deep skepticism of government intervention.

He recounts his father’s words:

“Go to the library, pick any book on socialism. There’s hundreds of books. I guarantee you, you will find page upon page, chapter upon chapter, of how to divide the wealth. You will not find a single sentence on how to create it.”

This framework runs through all his policy judgments. He argues that technology solutions nearly always outperform policy solutions — COVID lockdowns had limited effectiveness and massive side effects, but vaccines worked; climate policies reduced emissions in Europe but were offset by China’s increases, while nuclear fusion could actually solve the problem; “defund the police” made nobody safer, but technology did.

On specifics, he criticized the Biden administration’s AI executive order, claiming it included provisions requiring federal government approval to sell GPUs. “We were that close to being basically out of the global chip game.”

This perspective has internal logical consistency, but also notable selectivity: he doesn’t discuss the massive fraud that occurred in the largely unregulated crypto market, nor does he mention the view held by many AI safety researchers that proactive regulation is essential.

Building a Top-Tier VC from Scratch

The middle portion of the interview offers a remarkably candid founding story.

In 2009, Horowitz and Andreessen faced a seemingly impossible challenge: since Benchmark in 1995, no new venture firm had reached top-tier status. The reason was a self-reinforcing cycle — top-tier VCs derived their reputation from having invested in Apple, Cisco, and Google; new firms had no such track record, so the best entrepreneurs wouldn’t take their money, which meant they could never build a track record.

Their approach was to redefine the product: traditional VCs were a great product for LPs (limited partners) but not for entrepreneurs. If they could build a better product for founders — providing confidence, network, advice, and helping them become capable CEOs — they could break the cycle.

Another innovation was proactive marketing. Traditional VCs never spoke publicly, because reputation was built on investment returns and mystique was preferable. When Marc Andreessen asked “Why don’t VCs market?” they discovered this tradition traced back to JP Morgan and Rothschild — Industrial Revolution financiers who funded both sides of World War I and naturally wanted no publicity. This inertia had persisted for a century.

Horowitz also candidly acknowledged early mistakes. They over-indexed on requiring GPs to have founding experience, only to discover that “most CEOs aren’t as interested in investing as they think they are.” Fund two underperformed fund one, and fund three was alarming for a while — though it ultimately performed well thanks to Coinbase, Databricks, GitHub, and other investments.

He explicitly rejected the temptation of PE-style AI roll-ups. He acknowledged that buying traditional companies and optimizing them with AI is a good business idea, but called it “the cultural opposite of who we are.” He cited a dinner with Apollo’s Marc Rowan: “He was like, entry price, entry price, entry price. We never even think about that.”

Culture Is Not a Set of Ideas, It’s a Set of Actions

Horowitz’s discussion of management and culture is the most information-dense segment of the interview.

He calls Andy Grove (former Intel CEO) his most important mentor and tells a story: on Grove’s office wall hung an award — Manager of the Year for Intel’s Santa Clara facility. This plant had been Intel’s lowest-scoring facility. Grove walked in, listened to management’s excuses, then pulled out a roll of toilet paper from under his chair and said: “Clean up your bullshit and tell me when the fuck you’re going to be up to code.” Two months later, they were up to code — and remained the highest-rated facility thereafter.

This story leads to Horowitz’s core thesis on “confrontational management”: the concepts of management are simple enough that an eighth-grade education suffices, but the psychological dimension is extraordinarily difficult. The most common failure mode for young founders is losing confidence after making mistakes, beginning to hesitate, and then over-relying on input from their team. But the team lacks the full context. Even if individual team members are smarter, the leader is still likely to have better judgment because the leader has all the information.

The hardest scenario is organizational restructuring. “Somebody really good, who you’ve had for a long time, is going to lose power, and they’re going to be pissed. If you compromise the organization so they can maintain their power, you’ve redistributed power from the people doing all the work to the executives — and that’s a catastrophe.”

On defining culture, Horowitz draws from Japanese Bushido:

“A culture is not a set of ideas, it’s a set of actions.”

He makes this concrete through a16z’s behavioral standards: people who were late to meetings with entrepreneurs were once fined $10 per minute; when declining to invest, you must explain your reasons clearly, and the declined entrepreneur is surveyed afterward; if you try to make yourself look good by making an entrepreneur look bad on social media, you’re fired. “We’re dream builders. We’re not dream killers.”

A Policing Experiment That Cut Crime by 50%

Near the end of the interview, Horowitz shares an unexpected project: his personally funded Las Vegas policing technology initiative.

The Las Vegas Police Department has two unique characteristics. First, it’s led by an elected sheriff who doesn’t report to the mayor, so it was never caught up in the “defund the police” movement, and Las Vegas was one of the few cities that didn’t cut its police budget. Second, it never militarized, maintaining a community policing model. The result: a murder clearance rate of 94%, far exceeding San Francisco’s 75%, Chicago’s rate in the 30s, and the national average of below 60%. Sheriff Kevin McMahill explained: “When somebody is murdered, there’s always somebody who knows who did it. They just don’t talk to the police. But they talk to us because we’re part of the community.”

Building on this foundation, Horowitz introduced technology: drones deployed within 90 seconds of a 911 call or gunshot detection, with video feeds pushed in real time to every officer’s phone in the vicinity; AI cameras that precisely identify vehicles involved in incidents, replacing reliance on witness descriptions.

He highlighted a particularly overlooked detail: roughly half of police use-of-force incidents stem from inaccurate descriptions. Someone steals a car with a baby in the backseat. A witness says “blue 2004 Hyundai” — it’s actually a “green 2008 Hyundai.” Officers pull over someone in an actual blue 2004 Hyundai; the driver has a gun and prior bad experiences with police; a violent confrontation erupts. AI cameras eliminate this source of error.

Horowitz reports that since the program launched, crime has dropped over 50% and police shootings of suspects have fallen nearly 75%. If accurate, these numbers make a powerful case for the “technology over policy” thesis — though independent verification of the causal relationship is warranted.

Editorial Analysis

Guest’s Position

Ben Horowitz’s position shapes the direction of his views. As co-founder of a venture firm managing over $35 billion, he naturally favors more open entrepreneurial and investment environments with less regulation. In 2024, he publicly supported Trump’s technology policies, which provides important context for his criticism of government regulation. a16z holds major investment positions in both crypto and AI, meaning his optimism about these sectors is closely aligned with institutional interests.

This doesn’t invalidate his views, but readers should be aware of these alignments.

Selectivity in Argumentation

Survivorship bias in the crypto analogy: Horowitz uses “all you needed was a nickel to buy Bitcoin” to argue for equality of opportunity in the AI era. But in the crypto market, the windfall gains of a few early participants coexist with losses sustained by many latecomers. Using winners’ stories to argue for systemic equality of opportunity is logically insufficient.

The time-lag problem in the unemployment rebuttal: “Why hasn’t job destruction happened from 2012 to now?” is a powerful rhetorical question, but it overlooks two facts — first, mass technological displacement has historically involved lag periods; second, AI’s replacement of cognitive work differs fundamentally from agricultural and manufacturing automation. Agricultural automation took over a century; AI’s displacement of white-collar work could manifest within a decade.

Selective case studies for technology over policy: He contrasts defund the police (policy failure) with Las Vegas tech policing (technology success), but doesn’t discuss cases of technology failure (such as Palantir controversies or surveillance technology abuse risks), nor does he mention effective policy interventions.

Counterpoints

- AI safety researchers (such as Yoshua Bengio and Stuart Russell) argue that precisely because AI capabilities are advancing so rapidly, proactive regulation is not only necessary but urgent. “Develop first, regulate later” was not a good strategy for nuclear weapons.

- Labor economists’ research shows that while technology creates more jobs in the long run, transition-period pain concentrates on specific groups and regions, and market self-adjustment often isn’t fast enough.

- The shift Horowitz describes as “the laws of startup physics have changed” is itself new, so whether his conclusions from it will stand the test of time remains to be seen. Whether Elon Musk’s xAI has truly “caught up” still requires market validation.

Claims to Verify

| Claim | Status |
|---|---|
| Cursor revenue exceeded $1 billion | Needs verification: early 2025 public data showed ~$300M ARR |
| Biden executive order required federal approval for GPU sales | Likely simplified: the 2023 executive order involved compute reporting requirements, but “approval required for sales” is debatable |
| Las Vegas murder clearance rate of 94% | Needs independent source verification |
| Crime down over 50%, police shootings of suspects down nearly 75% | Needs verification of baseline data and causal relationship |
| Venezuela was the fourth richest country in the world | Partially correct: ranked high in per capita GDP in the 1950s, but specific ranking varies by metric |
| AI researcher compensation reaching $100 million or even $1 billion | Exaggerated: top compensation packages can reach tens of millions, but “$1 billion” lacks reliable sourcing |

Key Takeaways

- For entrepreneurs: In the AI era, moats are no longer “small team execution” — they’re a combination of data, distribution, and speed. Windows are shorter, but addressable markets are larger.

- For investors: Past valuation frameworks may need rewriting. When market size jumps from $50 billion to $5 trillion, even with intensified competition, the scale of winners is entirely different.

- For managers: Culture must be defined through specific behaviors, not value statements. The measure isn’t “what we believe” but “what we do.”

- For everyone: AI is indeed an equalizer of opportunity, but only if you actively use it. Democratization of technology doesn’t automatically translate to democratization of outcomes.

This article is based on the Invest Like The Best interview transcript. The show is hosted by Patrick O’Shaughnessy.
