Anthropic CEO Dario Amodei: AI Is 'Magic,' But What's the Cost?

Guest: Dario Amodei, CEO of Anthropic, former VP of Research at OpenAI | Source: Big Technology Podcast | Duration: 01:08:50

From His Father’s Hospital Bed to the AI Lab

Dario Amodei grew up in San Francisco but had nothing to do with Silicon Valley’s startup culture. Recalling his teenage years, he said the rise of tech companies happened all around him, but he was completely uninterested — “Writing a website held no interest for me. I wanted to be a scientist. I was fascinated by physics and math.”

He studied physics first, then shifted to biology. The catalyst for this transition was deeply personal: his father suffered from a long-term illness and passed away in 2006.

“About three or four years after he died, the cure rate for that disease went from about 50% to roughly 95%.”

A few years’ difference — the distance between life and death. Dario said this fact gave him a visceral understanding of what “acceleration” means. After years of work in biology, he realized that the complexity of human disease exceeded what individual researchers could handle — “You need hundreds or thousands of researchers, and they often struggle to collaborate effectively.” AI was the only technology he could think of that might bridge this gap.

This personal history erupted at the very opening of the interview. When host Alex Kantrowitz brought up NVIDIA CEO Jensen Huang’s criticism — “Dario thinks he’s the only one who can build AI safely and therefore wants to control the entire industry” — Dario’s reaction was immediate: “That’s the most outrageous lie I’ve ever heard.”

He followed up with the line that set the tone for the entire conversation: “My father died because of cures that could have happened a few years later. Don’t call me a doomer.”

Dario’s Shortest Timeline

Among the major leaders in the AI industry, Dario may be the most aggressive on timelines. He acknowledges this but adds an important qualification: “I am indeed one of the most bullish about AI capabilities improving very fast, but I don’t use terms like AGI or ASI — those words are just designed to activate people’s dopamine.”

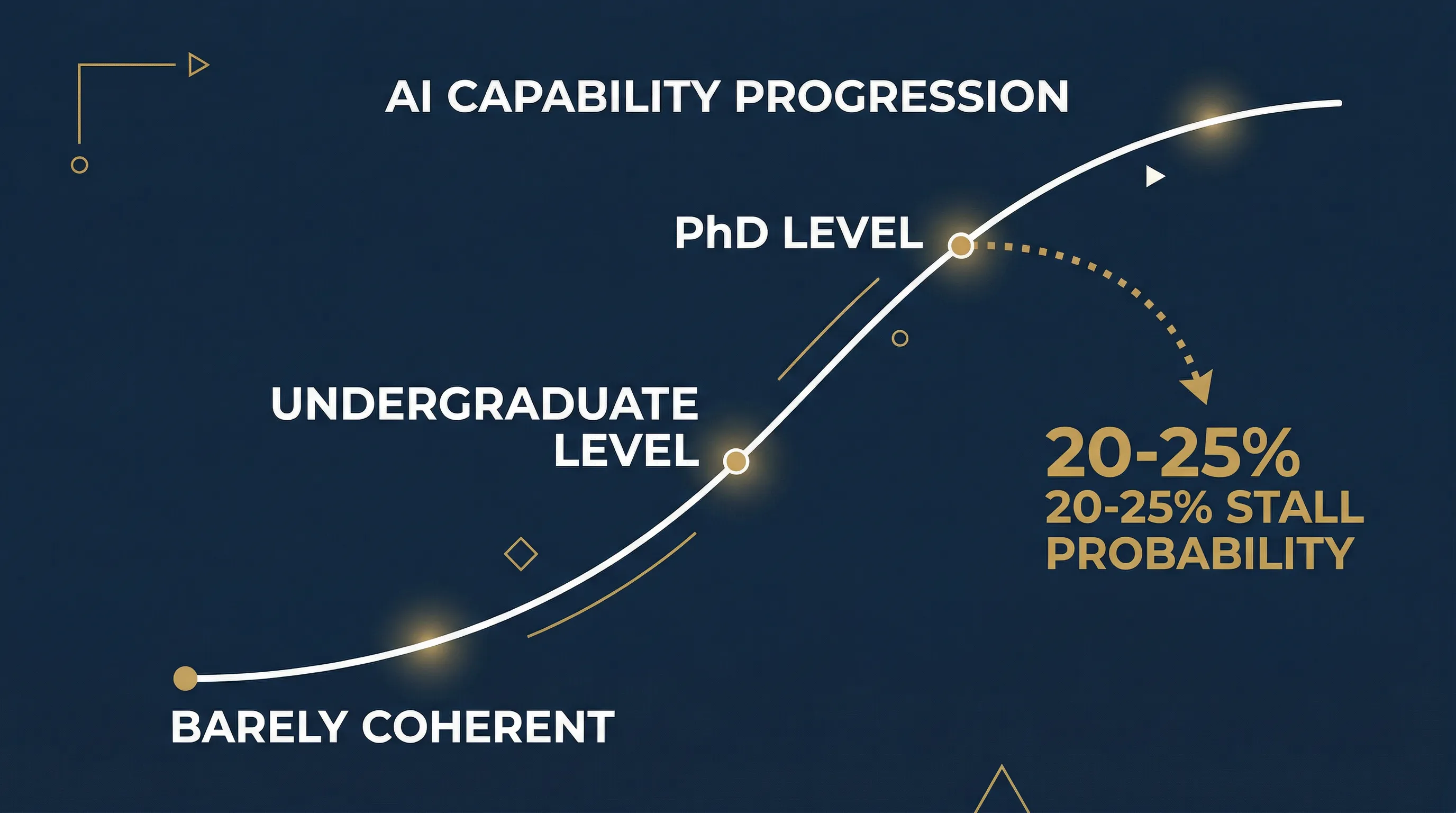

This restraint in terminology, combined with radicalism in substance, creates an interesting tension. He describes the trajectory this way: a few years ago, AI models could “barely produce coherent sentences”; now they’ve reached “smart college student or even PhD level.”

But he also preserves uncertainty. When Alex pressed for specific timelines, Dario said: “The underlying technology is more predictable than societal adoption, but uncertainty remains. I think there’s maybe a 20 to 25% chance that sometime in the next two years the models just stop getting better for reasons we don’t understand, or maybe reasons we do.”

“The exponential that we’re on could totally peter out.”

This statement is worth noting: a CEO who has raised nearly $20 billion, betting everything on continued AI progress, publicly acknowledges a chance of roughly one in four or five that it could all stall. This is either rare honesty or carefully calculated risk hedging. More likely it is both, since acknowledging risk is itself a form of credibility building.

Are Scaling Laws Still Working?

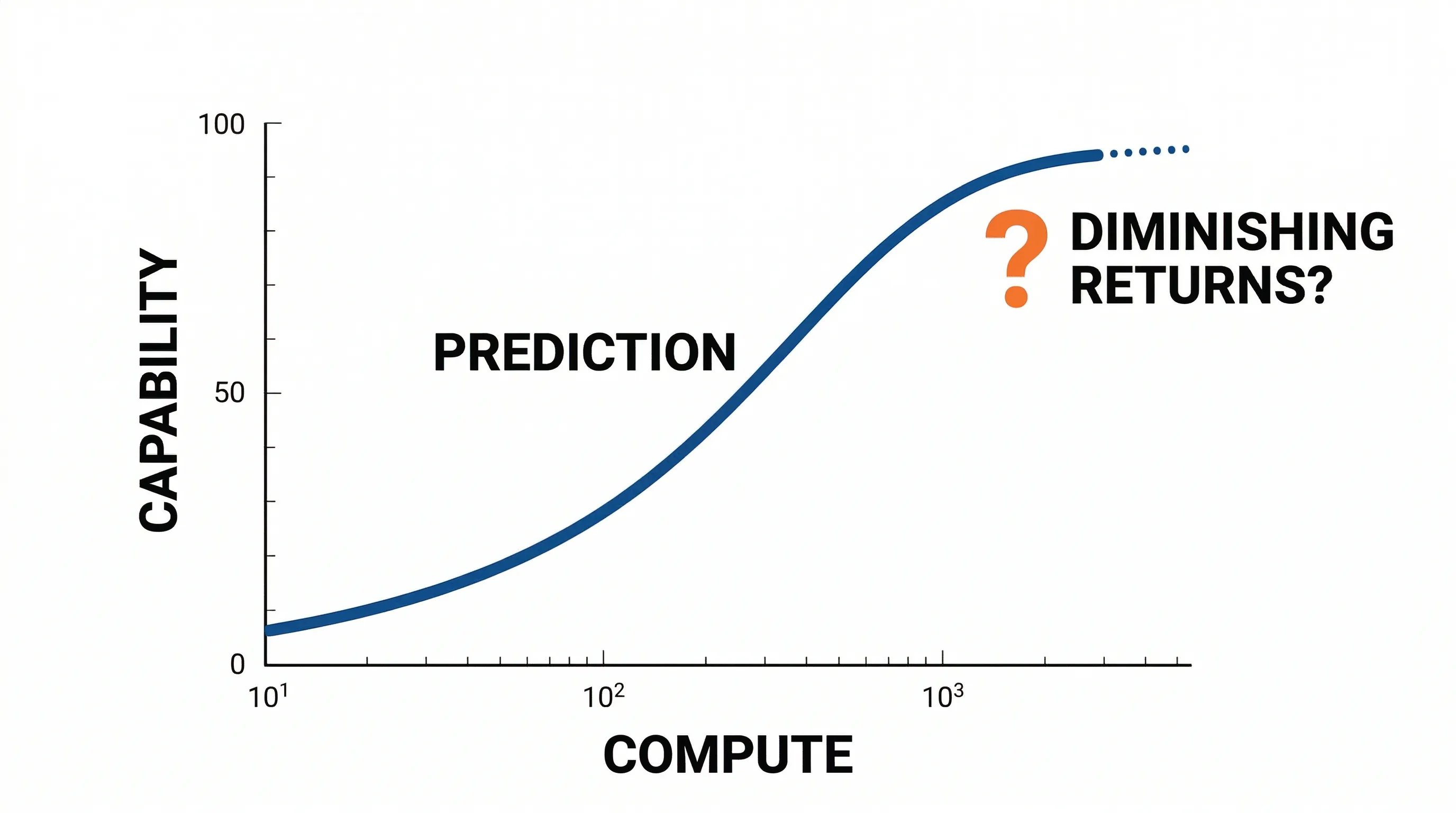

The industry-wide debate over whether scaling laws are hitting diminishing returns was the most technically substantive part of the interview.

Scaling laws refer to a set of empirical regularities: as you increase training data, model parameter count, and compute, AI model performance improves in predictable ways. Around 2020, OpenAI (where Dario served as VP of Research at the time) published a series of papers quantifying these laws, finding power-law relationships between model performance and compute. In practice, each multiplicative increase in compute buys a predictable improvement, so as long as you keep investing more, models keep getting better, although each further gain costs far more than the last. This is the theoretical foundation for the massive fundraising by Anthropic, OpenAI, and others: more money = more compute = better models.
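As a rough illustration of what a power-law relationship implies, the sketch below models loss as `a * compute ** (-alpha)`. The function names and the constants `a = 10.0` and `alpha = 0.05` are hypothetical, chosen only for readability; they are not taken from the OpenAI papers or from the interview.

```python
# Illustrative power law: loss = a * compute ** (-alpha).
# The constants a and alpha are hypothetical, not fitted to any real model.

def power_law_loss(compute: float, a: float = 10.0, alpha: float = 0.05) -> float:
    """Return the modeled loss for a given compute budget (arbitrary units)."""
    return a * compute ** (-alpha)


def loss_ratio_per_10x(alpha: float = 0.05) -> float:
    """Factor by which the loss is multiplied each time compute grows 10x."""
    return 10.0 ** (-alpha)


if __name__ == "__main__":
    # Print the modeled loss at compute budgets of 1, 10, 100, 1000, 10000.
    for exponent in range(5):
        compute = 10.0 ** exponent
        print(f"compute=1e{exponent}: loss={power_law_loss(compute):.3f}")
```

Under these assumed constants, every 10x increase in compute multiplies the loss by the same factor (about 0.89), so the trend stays predictable while the absolute improvement shrinks at each step.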

But by late 2024, dissenting voices had begun emerging within the industry. Researchers at multiple institutions noticed that on certain benchmarks, simply scaling up model size was yielding diminishing improvements. This was the context behind Alex’s questioning.

Alex pressed directly: “So many folks in the AI industry are talking about diminishing returns from scaling. That doesn’t fit with the vision you just laid out. Are they wrong?”

Dario’s response focused on a single domain: “I can only speak based on Anthropic’s models. If you look at coding, coding has kept getting better.” The answer was carefully scoped: he limited the claim to Anthropic’s own models, then used his strongest evidence to respond. But he didn’t address whether other domains, such as reasoning, math, or commonsense understanding, showed the same trend.

When Alex cited DeepMind CEO Demis Hassabis’s view — “achieving human-level intelligence may require several new techniques” — Dario’s response was brief: “We’re developing new techniques every day.” But he didn’t specify what those techniques were, nor did he address Hassabis’s implied point — that scaling alone might not be enough.

He showed candor in another direction. Alex brought up a known limitation of large language models — the continual learning problem, where models can’t learn and update from ongoing experience the way humans do. Dario didn’t deny the problem existed; instead, he reframed its importance: “Even if we never solved continual learning, the potential for LLMs to affect things at the scale of the economy would still be very high.”

He drew on his academic background to support this point. Having worked for years in biology, he argued that even if AI couldn’t learn continuously like humans, its scale advantage in processing complex problems — simultaneously “digesting” massive volumes of papers and data — already far exceeds the collaborative capacity of human teams.

The logic of this argument: even with limitations, the current technical trajectory is already valuable enough. This is more persuasive than claiming “everything is getting better,” but it also neatly sidesteps the core question: are scaling laws accelerating, plateauing, or turning downward? For a company that has raised nearly $20 billion betting on “keep scaling,” the answer to this question is worth a fortune.

Is $20 Billion Enough?

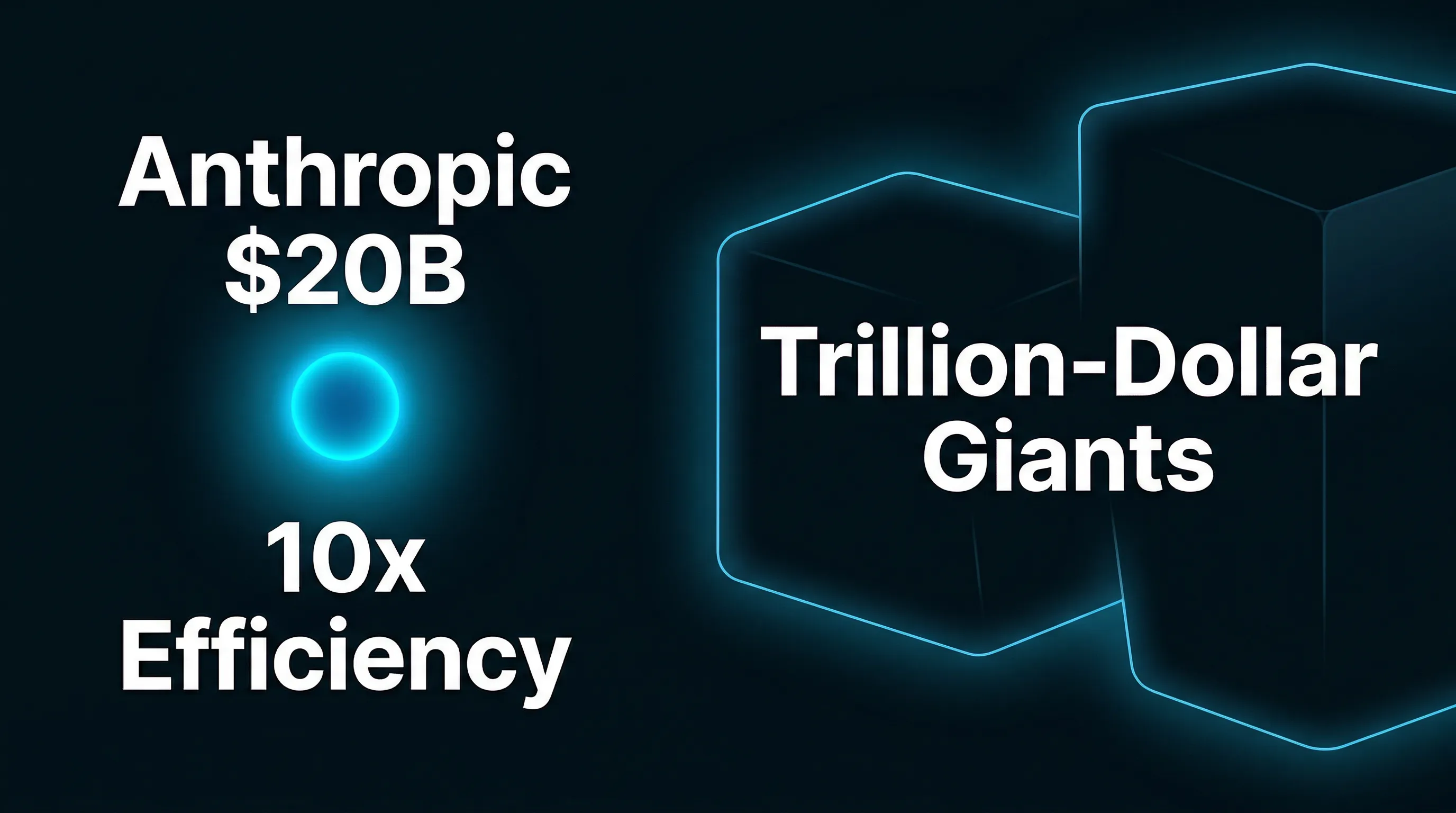

Anthropic faces an obvious structural problem: it’s not Google, not Microsoft, not Meta. These trillion-dollar companies can invest $100 billion in data centers without breaking a sweat. Even with nearly $20 billion raised, Anthropic is at a disadvantage in absolute capital.

Alex put the issue on the table: “Maybe Anthropic is a company with the right idea but the wrong resources.”

Dario’s response was his most polished business argument of the entire conversation:

“If we can do for $100 million what others can do for a billion, and for $10 billion what they can do for $100 billion, then it’s ten times more capital-efficient to invest in us. Would you rather have 10x efficiency, or a large initial capital pool?”

He elaborated that three years ago, Anthropic had only raised “a few hundred million,” while OpenAI had already secured $13 billion from Microsoft. But Anthropic’s growth validated the thesis — “We’re the fastest-growing software company in history at our scale.”

On Meta CEO Mark Zuckerberg’s aggressive talent poaching through premium compensation, Dario’s assessment was pointed: “Zuckerberg is trying to buy something that cannot be bought — alignment with the mission.” He added that very few Anthropic employees had been poached — “not because no one tried; I’ve spoken with many colleagues who received offers.”

He concluded: “I am pretty bearish on what they’re trying to do.” This may be the most direct attack on a competitor in the entire interview.

Why Anthropic Bets on Enterprise Over Consumers

CNBC, citing internal documents, reported that 60% to 75% of Anthropic’s revenue comes from its API. Dario didn’t confirm the exact figures but acknowledged “the majority does come through the API,” while emphasizing that the company’s apps business, Max subscriptions, and Claude Code are also growing rapidly.

More interesting was his explanation for this strategic choice. OpenAI bets on consumers (ChatGPT), Google bets on integrating into existing products (Gmail, Calendar), while Anthropic bets on enterprise applications.

Dario used a thought experiment to illustrate why:

“Suppose I have a model whose biochemistry knowledge improved from undergraduate to PhD level. If I tell a consumer — ‘good news, the model went from undergrad to PhD level in biochemistry’ — maybe 1% of consumers care. But if I sell it to a pharmaceutical company, their entire business model could change.”

This explains why Anthropic places such importance on coding as a core use case. Dario said coding was “the most obviously valuable” of all use cases, with a self-reinforcing loop — “Getting better at coding with the models actually helps you develop the next model.”

Why Developers Are Complaining

This was one of the most contentious business topics in the interview. Alex pointed to a phenomenon: developers using Anthropic’s newer models in tools like Cursor are seeing noticeably higher costs than before.

Dario acknowledged that Anthropic’s pricing has a puzzling misalignment: a consumer paying $200/month in subscription fees can access the equivalent of $6,000/month in API calls. This means the consumer side is heavily subsidized, while API developers bear higher marginal costs.

His explanation was that inference optimization is still in its early stages: “We make improvements all the time that make models 50% more efficient. We’re just at the beginning of optimizing inference.”

When Alex pressed on the path to profitability — “I think the loss is going to be like $3 billion this year” — Dario didn’t give a direct answer. Instead, he constructed an interesting thought framework to explain the persistent losses:

“Imagine in 2023, you train a model that costs $100 million. In 2024, you deploy it and make $200 million, but spend $1 billion training the next model. In 2025, the billion-dollar model makes $2 billion, but you spend $10 billion on the next. Every year the company looks unprofitable, but each generation of model is actually profitable.”
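The arithmetic in this quote can be laid out as a small sketch that contrasts the company-level view (each year's revenue minus that year's training spend) with the model-level view (each model's later revenue minus its own training cost). The names `YearFigures`, `annual_profit`, and `per_model_profit` are hypothetical. The 2023 revenue of zero is an assumption, since the quote does not mention an earlier model, and serving costs are ignored for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class YearFigures:
    year: int
    revenue: int         # $ millions earned by the model deployed that year
    training_spend: int  # $ millions spent training the next model


# Figures from the quote, in $ millions. The 2023 revenue of 0 is an
# assumption: the quote does not say what an earlier model earned.
YEARS = [
    YearFigures(2023, 0, 100),
    YearFigures(2024, 200, 1_000),
    YearFigures(2025, 2_000, 10_000),
]


def annual_profit(y: YearFigures) -> int:
    """Company-level view: this year's revenue minus this year's training spend."""
    return y.revenue - y.training_spend


def per_model_profit(years: list[YearFigures]) -> dict[int, int]:
    """Model-level view: revenue earned the following year minus the training cost."""
    return {
        trained.year: deployed.revenue - trained.training_spend
        for trained, deployed in zip(years, years[1:])
    }


if __name__ == "__main__":
    for y in YEARS:
        print(f"{y.year}: annual profit = {annual_profit(y):+,} ($M)")
    for year, profit in per_model_profit(YEARS).items():
        print(f"model trained in {year}: profit = {profit:+,} ($M)")
```

On these figures every calendar year shows a loss, while the models trained in 2023 and 2024 each return more than they cost once their deployment year is counted.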

This framework essentially argues that AI companies’ losses aren’t the traditional “spending more than you earn” — they’re forward-looking investments. Each generation of model earns more than its training cost; it’s just that the next generation costs even more. In Dario’s narrative, this is closer to Amazon’s early logic of “no dividends, reinvest everything into growth.”

But this argument has a critical dependency: it presumes scaling laws continue to work, so that each more expensive model generation actually generates more revenue. And Dario himself puts the chance that models stop improving within the next two years at 20 to 25%. If that happens, the “lose now, recoup later” cycle breaks: you spend $10 billion training a model that’s barely better than the $1 billion one, but the market has already priced in the $1 billion model’s capabilities.
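To make that dependency concrete, here is a minimal sketch using the two figures from the quoted framework: a previous model earning $2 billion and a next model costing $10 billion. The `revenue_multiple` parameter and both function names are hypothetical; the interview gives no revenue figure for the $10 billion model, so each multiple below is an assumption.

```python
# Figures from the quoted framework, in $ millions.
PREVIOUS_MODEL_REVENUE = 2_000  # the $1B model "makes $2 billion"
NEXT_MODEL_COST = 10_000        # "$10 billion on the next"


def next_model_profit(revenue_multiple: float) -> float:
    """Profit on the next model if it earns `revenue_multiple` times the
    previous model's revenue (a hypothetical parameter)."""
    return PREVIOUS_MODEL_REVENUE * revenue_multiple - NEXT_MODEL_COST


def break_even_multiple() -> float:
    """Revenue multiple at which the next model just covers its training cost."""
    return NEXT_MODEL_COST / PREVIOUS_MODEL_REVENUE


if __name__ == "__main__":
    # 10.0 continues the quote's pattern ($200M -> $2B); 1.0 is a full stall.
    for multiple in (10.0, 5.0, 1.0):
        profit = next_model_profit(multiple)
        print(f"revenue x{multiple:g}: profit = {profit:+,.0f} ($M)")
```

With these numbers, the next model has to earn at least five times what its predecessor earned just to cover its own training cost. A model that earns only as much as the previous one would lose $8 billion.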

He also mentioned that inference costs are continuously declining — “Inference has improved a huge amount from where it was a couple years ago.” This is a more solid argument. Even if model capabilities plateau, inference efficiency improvements can continue to boost margins. But he didn’t separate these two arguments (model improvement vs. inference optimization); he blended them together.

“Open Source Is a Red Herring”

Among all his arguments, Dario’s stance on open-source AI may be the most vulnerable to challenge.

He said: “I think open source is actually a red herring.” His argument: open-source models aren’t free — you have to run inference on the cloud, and someone has to make inference fast enough. “When I think about competition, I think about which models perform better on the tasks we do, not open vs. closed.”

He also mentioned that Anthropic is offering model fine-tuning, interpretability interfaces, and activation steering, suggesting that closed-source models can deliver added value that open source cannot.

But this argument has obvious gaps. Meta’s Llama series has demonstrated that open-source models can approach frontier performance on many tasks, while the broader open-source ecosystem — including inference optimization tools like vLLM and llama.cpp — is rapidly lowering deployment costs. Reducing open source to “someone needs to run inference” ignores the systemic advantages of the open-source model in flexibility, auditability, and cost control.

Dario’s dismissal of open source is directly connected to Anthropic’s business model: if open source is good enough, API companies lose their reason to exist. It’s worth noting that Anthropic hasn’t completely rejected the concept of “openness” — they’ve published extensive safety research papers and made their interpretability research methods public. But on model weights, the most core commercial asset, they hold firmly to the closed-source line.

This position and the safety narrative reinforce each other: closed source can be framed as “responsibly controlling model access” rather than mere commercial protection. This tight alignment between safety and business interests is both the source of Anthropic’s narrative power and its greatest credibility challenge.

“Race to the Top” or Self-Promotion?

The final 20 minutes of the interview carried the highest tension. Dario laid out his frequently referenced “Race to the Top” concept:

“With a Race to the Bottom, everyone competes to get things out as fast as possible. It doesn’t matter who wins — everyone loses. Because you make unsafe systems. The Race to the Top is the opposite — it doesn’t matter who wins, everyone wins. Because you set a standard for how the field should work.”

He cited Anthropic’s pioneering release of “Responsible Scaling Policies” (RSP) as a concrete example of “setting standards.” He believes such voluntary commitments encourage other companies to follow, creating a positive cycle.

Alex then raised the topic of SBF (Sam Bankman-Fried), the FTX founder who invested in Anthropic. Dario revealed he met SBF about four or five times, with the impression of “a move fast and break things kind of person” who was interested in AI and also in AI safety. “But in retrospect, the ‘move fast and break things’ was way more extreme and way worse than I would have imagined.” He mentioned that SBF was ultimately given only non-voting shares, implying that even when SBF was at the height of his influence, Dario maintained caution and distance. This detail is noteworthy because it suggests Dario’s distrust of Silicon Valley’s “move fast” culture, a distrust that forms the emotional foundation of Anthropic’s safety narrative.

Alex pressed on a detail from before the interview: he had mentioned Jensen Huang’s criticism beforehand, and Dario had become “very animated.” On camera, Dario reiterated that it was “an outrageous lie” and used “Race to the Top” to explain his true intention — not to monopolize the industry, but to raise safety standards for all participants.

But the real climax of the interview came with the final question. Alex said: “You say AI is a dangerous technology, and you also say you’re obsessed with having impact. I’m curious — is your desire for impact driving you to accelerate a technology you yourself call dangerous?”

This question strikes at the deepest contradiction in Dario’s public image: a man who claims to worry about AI risk more than anyone, while simultaneously pouring tens of billions of dollars into building more powerful AI systems.

Dario’s answer was lengthy. He listed his contributions to the safety field — interpretability research, responsible scaling policies, publishing safety research. He said: “I’ve warned more about AI’s dangers than anyone in the industry, and as models get more powerful, I’m getting louder about it.” He expressed that he is “worried that AI risks are getting closer.”

But he didn’t directly answer Alex’s core question — if you truly believe risk is approaching, why are you accelerating? His subtext seemed to be: precisely because risk is approaching, a responsible player (i.e., Anthropic) needs to be at the frontier, rather than ceding that position to competitors who care less about safety.

Whether this logic holds depends on whether you believe a single company can simultaneously maximize competitiveness and maximize safety — and historically, very few companies have managed to do both.

Editor’s Analysis

Guest’s Position

Dario Amodei is the CEO and co-founder of Anthropic. Each of his arguments corresponds to an Anthropic business interest:

- Scaling laws still work → Anthropic needs continued massive fundraising to train larger models

- Talent density > capital scale → Anthropic’s core narrative against trillion-dollar giants

- Open source is a red herring → Anthropic’s API business model requires closed-source model superiority

- Safety as differentiation → “Race to the Top” distinguishes Anthropic from OpenAI’s and Meta’s “move fast” approach

This doesn’t mean his arguments are necessarily wrong, but readers should be aware that the correctness of these arguments directly determines whether the tens of billions of dollars in funding he’s secured is justified.

Selectivity in Argumentation

Using only coding as evidence for scaling laws: When pressed on diminishing returns, Dario cited only coding improvements. He didn’t address benchmark trends in reasoning, math, commonsense understanding, or other domains, nor did he respond to reports from multiple research institutions of cross-domain slowdowns.

Vague profitability timeline: Using the “each model generation is more profitable than the last” framework to explain persistent losses, without providing a specific break-even timeline. This framework’s premise is that scaling laws continue to hold — yet he himself admits a 20-25% probability they won’t.

Oversimplification of open source: Reducing the competitive threat of open-source AI to “inference costs money,” ignoring the fact that Llama 3, Mistral, and other open-source models are rapidly closing the gap with closed-source frontier models, and that enterprise customers may prefer auditable, customizable open-source solutions.

Counterpoints

Economists’ skepticism: Multiple economists have pointed out that predictions of “AI will bring massive economic transformation” have a poor track record historically. Daron Acemoglu (MIT) and others argue that AI’s actual GDP impact may be far below industry expectations.

Safety researchers’ concerns: Some AI safety researchers argue that Anthropic’s “Race to the Top” narrative is essentially still participating in an arms race, just with safety rhetoric added. In practice, releasing more powerful models — even with safety measures — still drives overall risk upward.

Open-source advocates’ rebuttal: Meta AI chief Yann LeCun has repeatedly argued publicly against closed-source AI, contending that only open source can truly achieve AI safety and democratization, and that closed development actually increases the risk of monopoly by a few companies.

Fact Check

| Claim | Verification |
|---|---|
| Anthropic has raised nearly $20 billion | Largely accurate: public reporting confirms multi-round fundraising totals in this range |
| OpenAI received $13 billion from Microsoft | Accurate: consistent with public data at the time of the interview |
| 60-75% of revenue from API (CNBC report) | Not denied: Dario acknowledged “the majority comes through the API” but didn’t confirm specific percentages |
| “Fastest-growing software company in history at this scale” | Unverified: self-reported claim, no specific revenue figures provided |
| Father’s disease cure rate rose from 50% to 95% within 3-4 years of his death | Plausible but not independently verified: consistent with medical progress timelines for certain blood cancers |

Key Takeaways

- Dario Amodei is one of the most optimistic AI industry leaders on capability improvement, yet he publicly acknowledges a 20-25% probability that models could stop progressing within two years

- Anthropic’s core competitive narrative is “10x capital efficiency” — building better models with less money, which depends on sustained talent density advantage

- He considers open-source AI a “red herring,” arguing that enterprise-grade APIs are the primary form of AI commercialization — a view perfectly aligned with Anthropic’s business model

- The experience of his father dying from a disease that became curable just years later is used by Dario to establish moral legitimacy for accelerating AI research, and remains his most powerful emotional argument

- The “Race to the Top” framework attempts to position safety as a competitive advantage rather than a hindrance, but he failed to directly address the tension between his desire for impact and the acceleration of risk

Compiled from Anthropic CEO Dario Amodei: AI’s Potential, OpenAI Rivalry, GenAI Business, Doomerism, 2026-03-02
