[Interview] Dario Amodei: We Are Near the End of the Exponential

Guest: Dario Amodei, Co-founder & CEO of Anthropic; former VP of Research at OpenAI, where he led the training of GPT-2 and GPT-3 | Host: Dwarkesh Patel | Duration: 2 hours 22 minutes | Source: YouTube, Dwarkesh Podcast

Key highlights:

- Scaling laws still hold — pre-training and reinforcement learning follow the same pattern

- 50% probability of achieving “country of geniuses” within 1-3 years, 90% within 10 years

- The AI industry will consolidate into an oligopoly of 3-4 companies; misjudging demand by even one year can mean the difference between bankruptcy and profitability

- The federal government should set regulatory baselines; authoritarianism may be rendered obsolete by technology, just as feudalism was

Table of Contents

- The End of the Exponential Is in Sight

- A Country of Geniuses in the Data Center, Within 1-3 Years

- AI Diffusion: Extremely Fast but Not Instantaneous

- The Trillion-Dollar Gamble: Compute Investment and Bankruptcy Risk

- When AI Can Do Everything, Will the Economy Be Commoditized?

- The Regulatory Dilemma: State Patchwork or Federal Standards

- Reshaping Global Order in the AI Era

- 40% of My Time Goes to Culture

The End of the Exponential Is in Sight

Dario Amodei opens the interview by acknowledging that the exponential growth of the past three years has largely matched his expectations. Models have progressed from the level of smart high school students to college students, then to PhDs and professionals; in programming, they have gone even further. What surprises him most is how unaware the public is that we are “approaching the end of the exponential.”

His core hypothesis dates back to a document he wrote in 2017, the Big Blob of Compute hypothesis. It wasn’t specifically about language models; it was a more general framework. At the time, GPT-1 had just been released, and multiple research directions coexisted: AlphaGo, OpenAI’s Dota work, DeepMind’s AlphaStar for StarCraft, and more.

“All the cleverness, all the techniques, all the ‘we need new methods’ stuff — it doesn’t really matter that much. What really matters are just a few things.”

Dario identified seven key elements: raw compute, data quantity, data quality, data distribution (how broad it is), training duration, objective functions that can scale almost indefinitely (for both pre-training and RL), and normalization/conditioning for numerical stability. He says the hypothesis still holds today: pre-training scaling laws continue to work, and reinforcement learning now exhibits the same pattern.
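A “scaling law” here means that loss falls predictably, as a power law, as compute grows. The sketch below illustrates the idea only; it assumes the commonly used form L(C) = a · C^(-b), omits the irreducible-loss term, and uses synthetic points generated from made-up constants (`A_TRUE`, `B_TRUE`), not data from any real model. Fitting a straight line in log-log space recovers the exponent, which is what lets labs extrapolate to larger budgets.

```python
import math

# Hypothetical constants for illustration only; not fitted to any real model.
A_TRUE, B_TRUE = 10.0, 0.05


def loss(compute: float) -> float:
    """Assumed power-law loss curve L(C) = a * C**(-b)."""
    return A_TRUE * compute ** (-B_TRUE)


def fit_power_law(points: list[tuple[float, float]]) -> tuple[float, float]:
    """Least-squares fit of log L = log a - b * log C; returns (a, b)."""
    xs = [math.log(c) for c, _ in points]
    ys = [math.log(l) for _, l in points]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return math.exp(mean_y - slope * mean_x), -slope


# Synthetic compute budgets (FLOPs) from 1e18 to 1e24.
budgets = [10.0 ** k for k in range(18, 25)]
a, b = fit_power_law([(c, loss(c)) for c in budgets])
print(f"fitted a = {a:.3f}, b = {b:.4f}")

# Extrapolating one decade past the largest budget: each 10x of compute
# multiplies loss by 10**(-b), i.e. roughly an 11% reduction with b = 0.05.
print(f"loss ratio for 10x more compute: {loss(1e25) / loss(1e24):.4f}")
```

The fit is exact here because the data are noiseless; real scaling-law fits work on noisy training runs and usually include an irreducible-loss floor.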

On the puzzle of sample efficiency, Dario offers an analogy: pre-training sits somewhere between human evolution and instant learning. The human brain isn’t a blank slate; from the start it has regions wired to inputs and outputs, with priors shaped by evolution. Language models start from random weights, much closer to a blank slate. So pre-training and RL occupy a middle space between evolution and instant learning, while in-context learning sits between long-term and short-term learning.

This forms a hierarchy: evolution, long-term learning, short-term learning, instant reaction. The various phases of LLMs fall along this spectrum, but not at exactly the same points. Generalization is the key — just as pre-training only began to generalize when it moved from narrow fan fiction corpora to general web crawls, RL follows the same path. From simple math competitions to broader coding tasks to more diverse domains, generalization is progressively emerging.

A Country of Geniuses in the Data Center, Within 1-3 Years

When asked “why one year from now and not ten years,” Dario was explicit about his confidence levels. For achieving a country of geniuses in a data center within ten years, he puts his confidence at about 90%. It’s hard to go higher than 90% because of irreducible uncertainty — company turmoil, a missile hitting Taiwan’s fabs.

“For programming, other than that irreducible uncertainty, in ten years we will absolutely be able to do end-to-end programming.”

But he added a crucial distinction: verifiable vs. non-verifiable tasks. For verifiable tasks like programming, he thinks we’ll get there in a year or two. The remaining uncertainty lies in tasks whose results are hard to verify: planning Mars missions, fundamental scientific discoveries like CRISPR, writing novels. For these, a small amount of fundamental uncertainty remains even on long timescales.

For the more aggressive timeline, Dario says it’s more like a 50-50 thing — possibly achievable in one to three years. He predicts that by late 2026 or early 2027, there will be AI systems that can navigate human interfaces for digital work, match or exceed Nobel laureate intellectual capability, and interact with the physical world.

But there’s a time gap between technological progress and economic diffusion. Even if models reach genius-level within a year or two, when trillions in revenue will actually materialize remains uncertain — one year, two years, possibly stretching to five. Curing all diseases requires going through biological discovery, manufacturing new drugs, and regulatory processes. Even if AI invents cures for everything, lab-to-global-deployment could take years.

This uncertainty shapes Anthropic’s compute procurement strategy. Suppose revenue keeps growing 10x per year, reaching $100 billion by late 2026 and $1 trillion by late 2027. If the company buys compute sized for $1 trillion in revenue and that growth arrives even one year late, or runs at 5x instead of 10x, the company goes bankrupt. So the actual decision is to buy compute supporting hundreds of billions of dollars in revenue rather than trillions, balancing upside capture against financial risk.
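The asymmetry can be made concrete with a little arithmetic. This is a minimal sketch under stated assumptions, not Anthropic’s actual planning model: the $10 billion starting revenue is simply what 10x growth to $100 billion by late 2026 implies, the compute-to-revenue ratio (100/150) is borrowed from the simplified $100B-compute / $150B-revenue model Dario describes later, and the rule that revenue cannot exceed what purchased compute can serve is an assumption added for illustration.

```python
# All figures in billions of dollars; assumptions are labeled.
BASE_REVENUE_B = 10.0  # late-2025 revenue implied by 10x/yr reaching $100B in late 2026
COMPUTE_COST_PER_REVENUE = 100 / 150  # ratio from the simplified $100B compute / $150B revenue model


def demand(years_ahead: int, growth: float, delay_years: int = 0) -> float:
    """Revenue demand after `years_ahead` years of growth, optionally delayed."""
    return BASE_REVENUE_B * growth ** max(years_ahead - delay_years, 0)


def profit(capacity_revenue_b: float, demand_b: float) -> float:
    """Profit when compute is sized to serve `capacity_revenue_b` of revenue.

    Assumption: revenue is capped by capacity, while the compute bill is owed
    regardless of how much demand actually shows up.
    """
    compute_bill = capacity_revenue_b * COMPUTE_COST_PER_REVENUE
    served = min(capacity_revenue_b, demand_b)
    return served - compute_bill


scenarios = {
    "10x on time": demand(2, 10),
    "10x, one year late": demand(2, 10, delay_years=1),
    "5x per year": demand(2, 5),
}
for capacity in (1000.0, 300.0):  # sized for $1T vs. "hundreds of billions"
    for name, d in scenarios.items():
        print(f"capacity ${capacity:.0f}B, {name}: profit {profit(capacity, d):+.1f}B")
```

Under these assumptions, compute sized for $1 trillion earns about +$333B only if 10x growth arrives on schedule, and loses roughly $567B (one year late) or $417B (5x growth) otherwise. Compute sized for $300 billion earns less in the best case (+$100B) but stays within about $100B of break-even in every scenario. That is the trade-off behind “hundreds of billions rather than trillions.”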

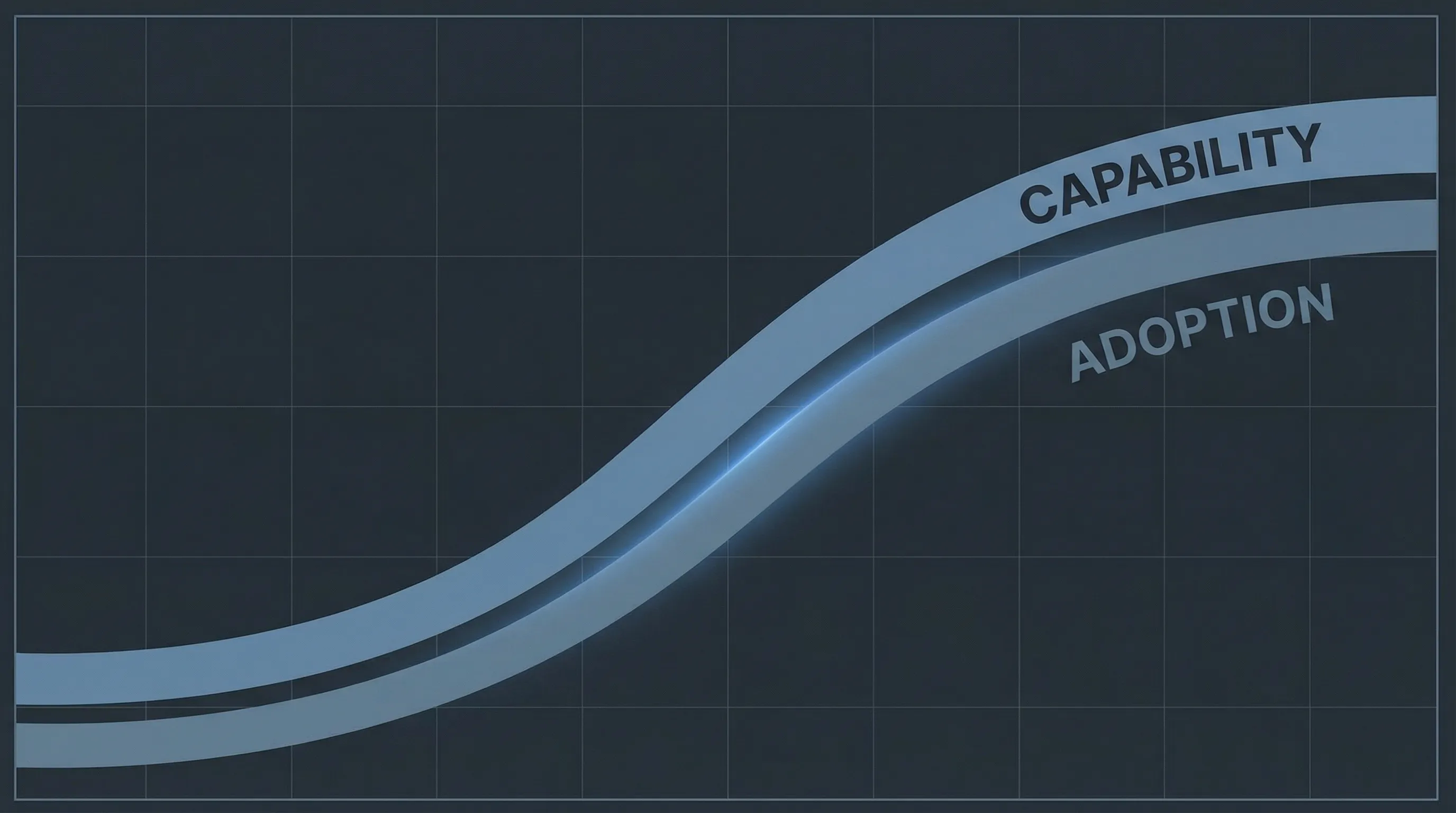

AI Diffusion: Extremely Fast but Not Instantaneous

Anthropic’s revenue grew roughly 10x year over year as of this January, and the company added billions of dollars in that month alone. Dario acknowledges this curve can’t continue forever, but the current pace forces a rethinking of how long technology diffusion takes.

“We should think about this in-between world where things are really, really fast but they’re not instantaneous. Diffusion is very real, and it’s not entirely about AI model limitations.”

Fast but not instant — this is the key to understanding current AI deployment. Enterprise adoption requires change management, security reconfiguration, legacy system overhauls. Dario observes two simultaneous exponentials: the growth of model capabilities, and the growth of economic diffusion.

The critical bottleneck for white-collar automation is computer use. Take video editing: an AI needs to control the screen, understand editing software, and judge shot selection. On the OSWorld benchmark, model performance has improved from 5% to 70%. Once computer use becomes reliable enough, automating most white-collar work becomes feasible.

Programming already shows signs of a productivity leap: some engineers at Anthropic no longer write code by hand at all. But Dwarkesh pushes back: why haven’t we seen a software renaissance at the macro level? And why do some independent assessments find developers roughly 20% less productive with AI tools?

On continual learning, Dario argues that long context windows may already be a sufficient substitute. Claude’s 1-million-token window can hold the core of an entire codebase, which may be enough to achieve the “country of geniuses in a data center.”

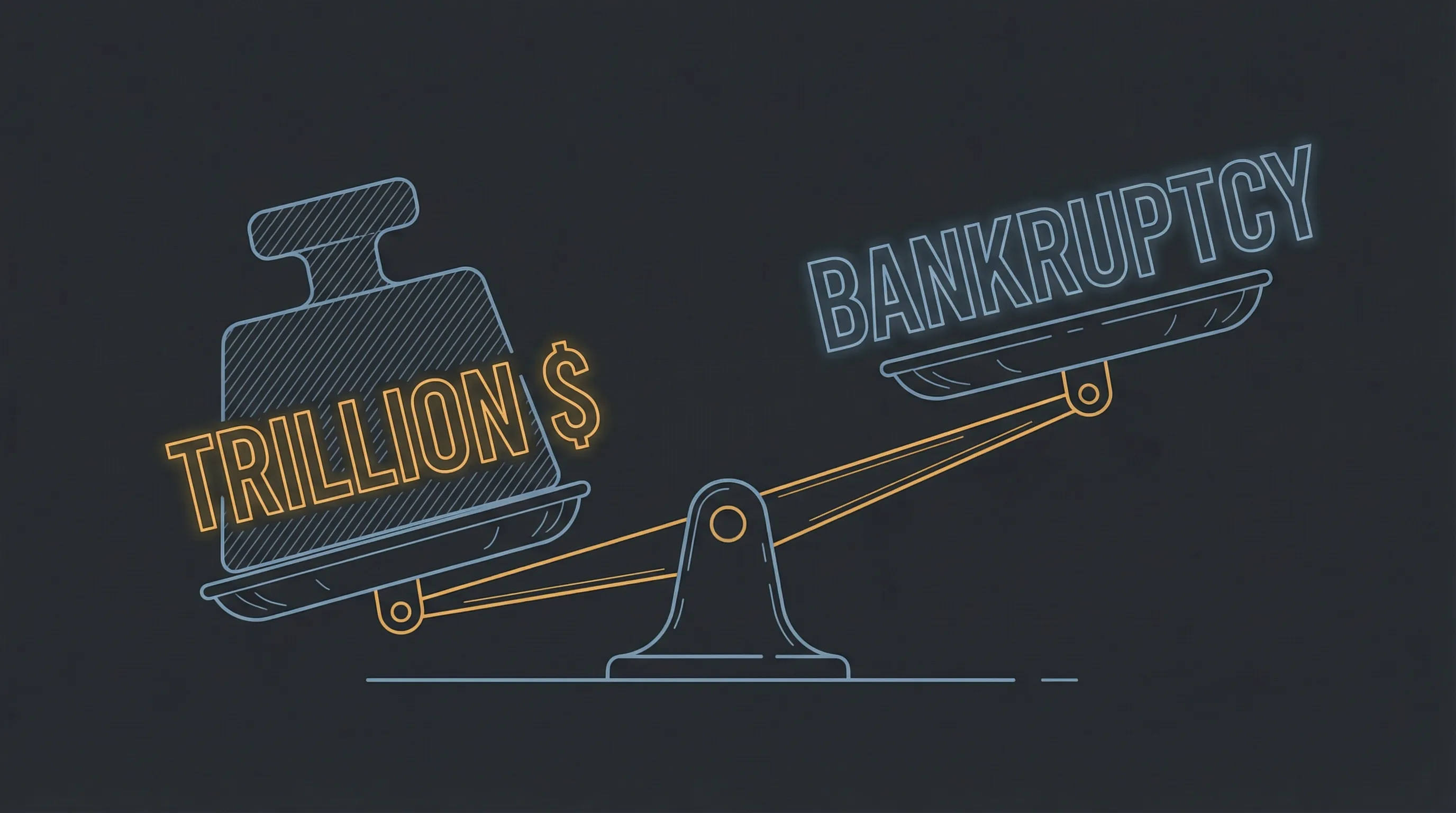

The Trillion-Dollar Gamble: Compute Investment and Bankruptcy Risk

Dwarkesh poses a sharp challenge: if you really have a “country of geniuses in a data center,” why not buy $5 trillion worth of compute to run it?

Dario’s answer reveals the industry’s biggest risk: “Buy $1 trillion in annual compute, and if the timeline prediction is off by even one year, it leads to bankruptcy.” Anthropic’s profitability plan is anchored to 2028 — going all-in too early means sunk costs, too late means missing the window.

The industry’s compute trajectory shows the scale of this bet. Global AI compute in 2026 is approximately 10-15 GW and is projected to reach 300 GW by 2029, backed by trillions of dollars in investment.
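Those two endpoints imply a specific growth rate. The short calculation below assumes 2026 to 2029 counts as three years of compounding; the function name is illustrative.

```python
def implied_annual_growth(start_gw: float, end_gw: float, years: float) -> float:
    """Constant yearly multiplier that takes start_gw to end_gw in `years` years."""
    return (end_gw / start_gw) ** (1 / years)


# Assumption: 2026 -> 2029 is treated as three years of growth.
for start in (10.0, 15.0):
    g = implied_annual_growth(start, 300.0, 3)
    print(f"{start:.0f} GW -> 300 GW: about {g:.2f}x per year")
```

Reaching 300 GW from 10-15 GW requires installed compute to grow roughly 2.7x to 3.1x every year, which is why a one-year forecasting error is so costly.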

AI profitability logic differs fundamentally from that of traditional businesses. Ideally, half of compute goes to training and half to inference, with gross margins above 50%. Dario offers a simplified model: of $100 billion in annual compute costs, $50 billion of inference compute supports $150 billion in revenue and $50 billion trains new models, leaving $50 billion in profit.
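The simplified model can be written out as a few lines of accounting. This sketch uses only the figures above and assumes inference compute is the sole cost of serving customers when computing gross margin (salaries and other operating costs are ignored); the class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class YearPlan:
    compute_b: float        # total annual compute spend ($B)
    inference_share: float  # fraction of compute used to serve customers
    revenue_b: float        # revenue supported by the inference compute ($B)

    @property
    def inference_cost_b(self) -> float:
        return self.compute_b * self.inference_share

    @property
    def training_cost_b(self) -> float:
        return self.compute_b - self.inference_cost_b

    @property
    def gross_margin(self) -> float:
        # Assumption: only inference compute counts as cost of revenue.
        return (self.revenue_b - self.inference_cost_b) / self.revenue_b

    @property
    def profit_b(self) -> float:
        # Training spend is paid out of gross profit; other costs are ignored.
        return self.revenue_b - self.compute_b


plan = YearPlan(compute_b=100.0, inference_share=0.5, revenue_b=150.0)
print(f"training ${plan.training_cost_b:.0f}B, "
      f"gross margin {plan.gross_margin:.0%}, profit ${plan.profit_b:.0f}B")
```

With these inputs the gross margin on inference is about 67%, comfortably above the 50% threshold, and the $50 billion of profit is what remains after funding the next model.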

“Profit happens when you underestimate the amount of demand. Losses happen when you overestimate it, because you’re buying data centers in advance.”

This reveals the core paradox: the real determinant of profit and loss isn’t technological progress but accuracy of demand forecasting. The top three companies currently have positive gross margins but lose money overall, because they’re still in the compute expansion phase — each model is individually profitable, but training the next generation causes overall losses.
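The gap between “each model is profitable” and “the company loses money” comes down to timing: the next model’s training bill lands in the same year as the current model’s earnings. The sketch below uses purely hypothetical numbers (each model returns 2x its training cost in gross profit the following year; training cost grows by a chosen factor per generation); none of these figures come from the interview.

```python
def model_lifetime_profit(train_cost: float, return_multiple: float) -> float:
    """Gross profit a single model earns over its life, minus its training cost."""
    return train_cost * return_multiple - train_cost


def company_annual_profit(prev_train_cost: float, return_multiple: float,
                          cost_growth: float) -> float:
    """One year's result: the previous model's gross profit minus the training
    bill for the next model, which costs `cost_growth` times as much."""
    return prev_train_cost * return_multiple - prev_train_cost * cost_growth


# Hypothetical: a $1B model that returns 2x its training cost.
print(model_lifetime_profit(1.0, 2.0))        # each model is profitable
print(company_annual_profit(1.0, 2.0, 10.0))  # next model costs 10x: overall loss
print(company_annual_profit(1.0, 2.0, 1.0))   # spending plateaus: overall profit
```

As long as training costs grow faster than each model’s return multiple, the company shows a loss despite every model paying for itself; once spending growth slows, the same economics turn profitable.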

Once “genius data center” scale is reached, the industry will form an oligopoly of 3-4 companies. Extremely high entry costs create natural barriers, and the models themselves are differentiated. Dario predicts industry revenue will reach the trillion-dollar range before 2030. Misjudging that timing by even one year could mean the difference between bankruptcy and profitability.

When AI Can Do Everything, Will the Economy Be Commoditized?

Cloud computing is a highly homogeneous market, but AI models are more differentiated than cloud. Claude, GPT, and Gemini have subtle differences in coding style and reasoning capability — it’s not simply that Claude is better at coding while GPT is better at math. Dario says models excel at different types of coding, have different styles, and are actually quite distinct from each other.

How long will this differentiation last? There’s a counterargument: if AI models can complete the entire model production process themselves, this capability could diffuse throughout the economy. But Dario argues that wouldn’t be AI model commoditization — it would be the entire economy commoditizing all at once. In a world where anyone can do anything and nothing has a moat, he doesn’t know what would happen.

“Maybe when AI models can do everything, if we solve all the safety and security issues, that’s one of the mechanisms for the economy to flatten out again.”

Dario’s concern isn’t wholesale commoditization but geographic inequality. He worries that annual economic growth could reach 50% in Silicon Valley and the parts of the world socially connected to it, while barely rising above current rates elsewhere.

On robotics, Dario believes that once models have human-like learning capabilities, both robot design and control will be revolutionized. But there will be a 1-2 year diffusion delay.

On pricing models, Dario thinks the API model will persist longer than people expect. Technology growing exponentially means there are always newly developed use cases. But not every token has the same value — when a model says “restart your Mac” it might be worth a few cents, but if the model tells a pharmaceutical company “you should move that aromatic ring from that end to this end,” those tokens could be worth tens of millions of dollars.

Claude Code became the coding agent category leader for a simple reason: Anthropic has hundreds of programmers using it internally every day, creating a rapid product feedback loop.

The Regulatory Dilemma: State Patchwork or Federal Standards

On December 26, 2025, a bill was introduced in the Tennessee state legislature that would make it a criminal offense for a person to intentionally train an artificial intelligence to provide emotional support, including through open-ended dialogue with a user.

Dario thinks that particular law is silly. What he considers more dangerous is the proposal that was actually being voted on: a ten-year ban on all state AI regulation, with no clear plan for federal AI regulation.

“Ban the states from doing anything for ten years, people say they have plans from the federal government, but there’s no proposal on the table. Given the timelines, ten years is an eternity.”

Dario’s preferred approach: the federal government should step in and set standards that states can follow but not deviate from.

On specifics, Dario advocates starting with transparency standards that monitor autonomy risks and bioterrorism risks. As risks become more serious, legislation can become more aggressive, for example by requiring AI developers to deploy bio-classifiers that screen for bioweapons-related misuse.

His fundamental view: loosen the regulations that slow the delivery of AI’s health benefits, while significantly strengthening safety and security legislation. Start with transparency, then move fast if risks materialize. The only way to do this is to be agile. That’s why he wrote “The Adolescence of Technology”: he hopes decision-makers will act faster than they otherwise would.

Reshaping Global Order in the AI Era

Dario Amodei isn’t too worried about how AI’s benefits will be distributed in developed countries. He believes market mechanisms will ensure profitable applications land. What truly concerns him is the developing world (sub-Saharan Africa, India, Latin America), regions where markets are imperfect and technology struggles to deploy even when it exists.

“I worry that even if a treatment is developed, some person in rural Mississippi won’t get it. That’s a smaller version of what we’re concerned about in the developing world.”

On chip export controls to China, Dario’s core concern is the Chinese government using AI to oppress its people. His worry scenario: if both the US and China build “countries of geniuses in data centers,” the resulting equilibrium could be more dangerous than nuclear deterrence. When both sides believe they have a 90% chance of winning, the probability of war rises dramatically.

But Dario also holds a radical hope: authoritarianism may be rendered obsolete by technology, just as feudalism was. Industrialization stripped feudalism of its economic foundation — could powerful AI make authoritarian regimes morally obsolete?

On Claude’s constitutional design, Dario clarifies two common misconceptions. First, the constitution isn’t a rule list but a principled framework. Second, Claude isn’t an autonomous agent but “mostly corrigible with principled hard limits” — it defaults to executing user tasks but refuses requests to create bioweapons or harm others.

He hopes democratic alliances will have stronger bargaining power in AI world order negotiations. Initial conditions matter — there are critical nodes on the technological exponential curve that could give certain nations significant national security advantages.

40% of My Time Goes to Culture

Dario Amodei is the kind of tech CEO who writes 50-page memos. Every two weeks he stands in front of the entire company and speaks for an hour, covering model progress, product strategy, industry dynamics, and geopolitics. The session is called DVQ (Dario Vision Quest) — not his name for it; he tried to oppose it “because it makes me sound like I’m going to go smoke peyote,” but the name stuck.

He spends 40% of his time ensuring Anthropic’s culture stays healthy. With the company at 2,500 people, direct involvement in every detail becomes impractical. But ensuring “everyone sees themselves as part of the team, working together rather than against each other” has enormous leverage.

“People aren’t trying to succeed at each other’s expense or stab each other in the back. I think that happens a lot at some other places.”

His approach is candid communication. He responds directly to internal surveys and employee concerns on Slack channels, avoiding corporate-speak and defensive communication.

When asked “what’s hardest to capture in the historical record,” Dario raises three points. First, the degree to which the outside world doesn’t understand exponential growth at each moment — “if we’re only a year or two from AGI, the average person on the street has absolutely no idea.” Second, decision speed — a critical decision might take just two minutes. Third, isolation — the people who are really betting on this happening bear a strangeness that the outside world doesn’t understand.

Editorial Analysis

Positional Bias

Dario Amodei’s background inevitably colors his judgment on AI development timelines. As Anthropic’s CEO, he leads a company competing with OpenAI and Google DeepMind for next-generation foundation models. His optimistic prediction of a “country of geniuses in a data center within 1-3 years” aligns with his commercial interests: the sooner AGI arrives, the more pronounced Anthropic’s first-mover advantage as a leading lab; and the more certain the exponential growth narrative, the easier it is to attract investors and top talent.

Notably, Dario repeatedly mentions “some of us, deep down, think the probability of this happening is quite high” — a phrasing that itself reveals that AI labs are not monolithic in their views.

Selective Argumentation

When discussing scaling laws, Dario primarily cites success stories — Claude 3.5 Sonnet’s breakthroughs on programming tasks, potential breakthroughs in continual learning — but devotes less attention to failures or plateaus. He mentions “some things haven’t happened as I expected,” but doesn’t specify which predictions failed. This selectivity may lead listeners to overestimate the reliability of scaling laws.

On regulation, Dario displays a nuanced balance: opposing excessive regulation while supporting strict scrutiny on biosafety; advocating for chip export controls while acknowledging “there’s too much money in the market to stop it.” This stance projects a responsible image to regulators without undermining industry development speed.

Counter-Perspectives

There is widespread academic skepticism about the persistence of scaling laws. Researchers like NYU’s Gary Marcus argue that current scaling laws primarily exploit low-hanging fruit — internet text, code repositories, image data — and these data sources are being exhausted. Once high-quality data runs out, the marginal returns of simply adding compute will decline sharply.

Economist Tyler Cowen’s “AI bubble thesis” suggests that current AI investment may suffer from a severe supply-demand mismatch: hundreds of billions of dollars are going into data center construction, while applications that actually generate revenue remain limited.

On AGI timelines, MIT physicist Max Tegmark and others offer conservative estimates of 10-20 years, rather than Dario’s implied 1-3 years. They argue that between “smart postdoc” and truly general intelligence lie multiple hard-to-cross chasms — embodied intelligence in the physical world, common-sense reasoning, and causal understanding.

Facts to Verify

- “70% time savings” data source — Dario mentions Labelbox achieving 70% time savings with Claude, but provides no specific experimental design or sample size

- Accuracy of chip export control claims — Dario says “it hasn’t happened,” but the US actually implemented export controls on high-end AI chips in October 2022

- Anthropic’s actual headcount — Dario mentions the company has 2,500 people; does this figure include contractors?

Dario’s Core Judgments

- Scaling laws still hold — pre-training and reinforcement learning follow the same pattern

- 50% probability of achieving “country of geniuses” within 1-3 years, 90% within 10 years

- Technological progress is extremely fast but economic diffusion has a 1-5 year delay

- The AI industry will consolidate into an oligopoly of 3-4 companies; Anthropic’s profitability plan is anchored to 2028

- The federal government should set regulatory baselines, starting with transparency and adding targeted legislation as needed

- Democratic alliances need to secure advantages in the global AI competition

Source: Dwarkesh Podcast — Dario Amodei | Transcription date: 2026-02-14
