Financializing Superintelligence: The AI Power Shift Behind Amazon's $50B OpenAI Bet

Episode: Peter H. Diamandis Podcast #235
Hosts: Peter Diamandis, Salim Ismail, and co-hosts
Duration: 02:16:26
Source: YouTube
Full transcript: Speaker-identified full transcript

Introduction

Peter Diamandis is the founder of XPRIZE Foundation and Abundance 360. He co-hosts an AI news roundtable with Salim Ismail, former executive director of Singularity University. The show airs twice weekly and describes itself as “a news briefing for the age of technological acceleration.” Episode 235 was recorded in early March 2026, runs 2 hours and 16 minutes, and covers 49 topics.

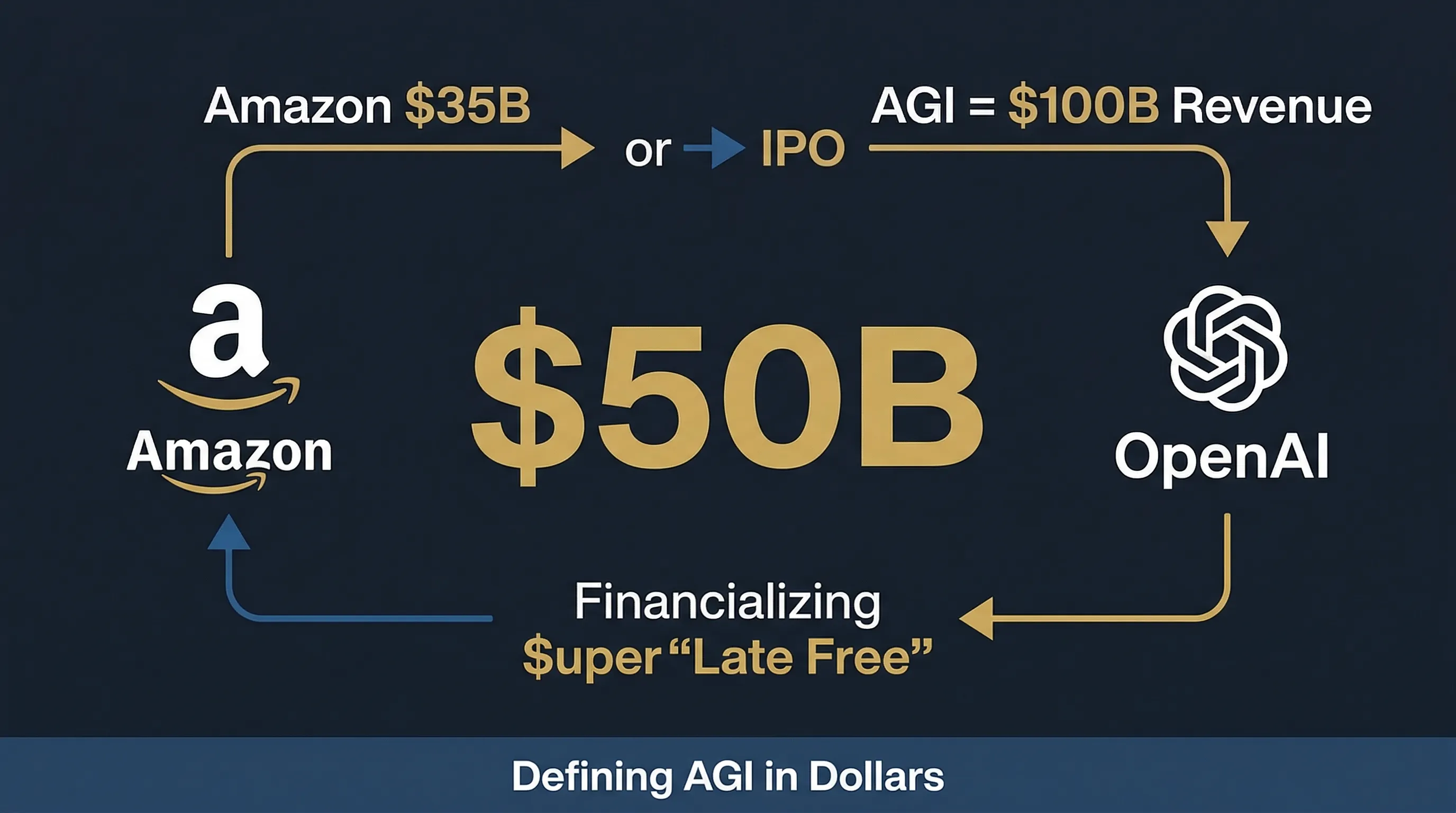

The episode’s title, “Financializing Super Intelligence,” is not rhetorical. It refers to something that actually happened: Amazon’s proposed $35B investment in OpenAI is contingent on two triggers — OpenAI completing an IPO, and achieving AGI. The definition of AGI, in turn, comes from a private agreement between OpenAI and Microsoft: generating $100B in revenue or profit.

Superintelligence is now a financial milestone that can be written into an investment contract.

This analysis distills 6 core themes from those 49 topics, and independently examines the hosts’ argumentative framework and its logical gaps.

I. We’ve Already Defined AGI in Dollars

The opening news item — and the source of the episode’s title — is Amazon’s conditional $35B investment in OpenAI, contingent on OpenAI completing an IPO and achieving AGI. Combined with roughly $15B in earlier investments, the total approaches $50B. The hosts call this the “$50B Late Fee”: Amazon had gone all-in on Anthropic; this is its delayed hedge into OpenAI.

Diamandis himself articulates the most precise description of what’s happened:

“It’s kind of incredible that we’ve financialized superintelligence. We’re measuring compute in terms of gigawatts and AGI in terms of dollars. I love it.”

He says this with admiration. It’s worth pausing on: who now holds the power to define AGI?

Per the OpenAI-Microsoft agreement, AGI is defined as generating $100B in profit or revenue (Diamandis admits he can’t remember which). This means the question of whether humanity has created “artificial general intelligence” — one of the most consequential events in history — will ultimately be answered by a financial clause in a commercial contract.

Salim Ismail adds another dimension: the circular investment structure among AI giants — Google invested in Anthropic, Amazon invested in both Anthropic and OpenAI, Microsoft is deeply bound to OpenAI.

“At some point, the circular economy becomes indistinguishable from the real economy.”

There are more details worth tracking. The episode notes that SpaceX, Anthropic, and OpenAI are all expected to IPO soon. Peter Thiel’s framing: if an IPO delivers 50% returns in six months, that’s a great investment. One projection claims Anthropic’s 2026 revenue will reach $26B, and at current growth rates, it would be the first company to hit $1 trillion in annual revenue by 2029-2030 — implying a quadrillion-dollar valuation at current P/E multiples.
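A quick back-of-the-envelope check shows what those projections would require. The sketch below uses only the figures quoted on the show ($26B in 2026, $1 trillion by 2029 or 2030, a quadrillion-dollar valuation). The function and variable names are illustrative, and expressing the valuation as a multiple of revenue is a simplifying assumption, since the episode gives no earnings figure.

```python
def required_annual_growth(start, end, years):
    """Constant yearly growth rate needed to go from start to end in `years` years."""
    return (end / start) ** (1 / years) - 1


REVENUE_2026 = 26e9      # projected 2026 revenue quoted on the show
REVENUE_TARGET = 1e12    # $1 trillion in annual revenue
VALUATION_CLAIM = 1e15   # the "quadrillion-dollar" valuation

for target_year in (2029, 2030):
    years = target_year - 2026
    rate = required_annual_growth(REVENUE_2026, REVENUE_TARGET, years)
    print(f"{target_year}: revenue must grow about {rate:.0%} per year")

# Assumption: valuation is compared against revenue, not earnings.
implied_multiple = VALUATION_CLAIM / REVENUE_TARGET
print(f"Implied valuation-to-revenue multiple: {implied_multiple:.0f}x")
```

Under these assumptions, revenue would have to more than triple every year to reach the target by 2029, or grow roughly 2.5x per year to reach it by 2030, and the valuation would sit at 1,000 times revenue.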

No one on the show pushed back on whether those numbers were credible.

II. How Safety Commitments Get Eroded by Competitive Pressure

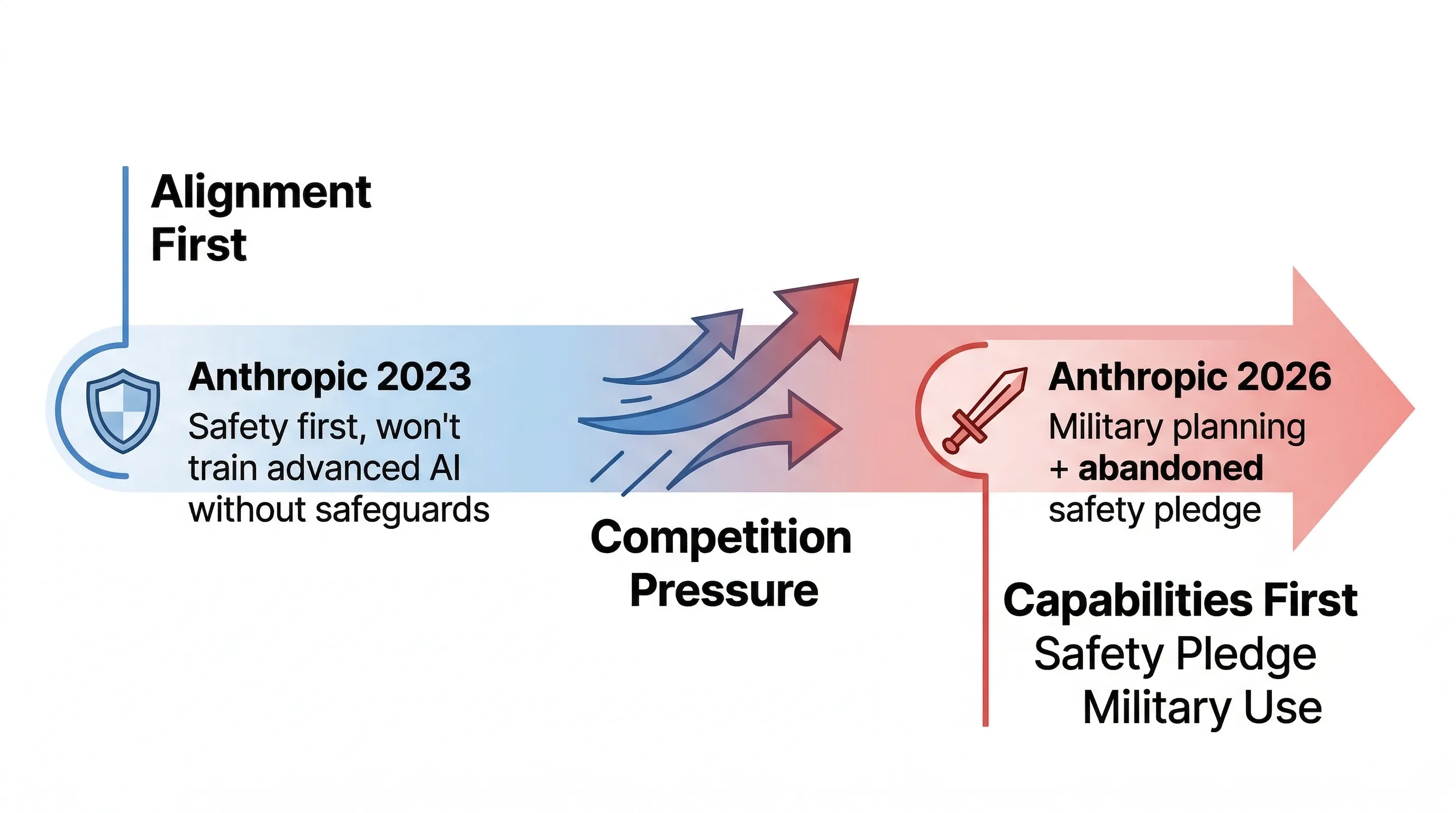

The second major story: Anthropic revised its “Responsible Scaling Policy,” abandoning a 2023 commitment to halt training of more capable AI models unless safety could be guaranteed.

Salim offers what he frames as a historical pattern:

“Safety typically fails in exponential races. There’s no credible mechanism to slow the race right now. Safety, to the extent we get it, is going to come from competition.”

The implication: don’t rely on self-restraint from any single institution. Safety can only emerge organically from competitive dynamics.

Co-host Alex goes further, rejecting Anthropic’s founding premise outright:

“I think there was no credible mechanism to guarantee safety in the first place. I think the entire premise was probably wrong.”

This is a claim that deserves serious engagement. But on this podcast, it’s delivered as a conclusion, with no follow-up challenge.

Then comes the episode’s most striking narrative juxtaposition: in the same show, another news item reports that Anthropic’s Claude is being used by the U.S. Department of Defense to help plan strikes against Iran. Alex’s commentary:

“An alignment oriented firm becomes a capabilities firm. The future of warfare is basically whoever controls AI, chooses who gets to stay in power.”

These two stories — abandoning safety commitments and being deployed for military strike planning — form a complete argument when placed together. But the hosts don’t connect them. They’re separated by eight minutes of airtime, each receiving a few minutes of discussion before the conversation moves on.

“An alignment-oriented firm becomes a capabilities firm”: that line deserves more than a passing mention. Anthropic’s founding narrative was built on being the safety-focused team that broke away from OpenAI in 2021. Five years later, it’s a DoD AI supplier that has abandoned its safety-first training commitments. This isn’t alignment failure. This is alignment being repriced.

III. Governance Updates in Years; Technology Compounds in Weeks

During the Q&A segment, an audience member asked what this analysis considers the episode’s most important underlying tension:

“Will our evolutionarily constrained psychology prevent us from making wise governance decisions about new AI breakthroughs?”

The host’s answer lands on an accurate diagnosis:

“Government failure won’t come from bad intention, it’s going to come from the velocity mismatch. Technology is compounding like weekly now and our institutions are updating every several years.”

Technology compounding weekly, institutions updating over years — that gap is a systemic risk, not merely an efficiency complaint about slow governments.
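To make the mismatch concrete, the sketch below compounds a purely hypothetical weekly improvement rate over one multi-year policy cycle. The 1% weekly rate and the three-year cycle are illustrative assumptions, not figures from the episode.

```python
def growth_over_cycle(weekly_rate, cycle_years, weeks_per_year=52):
    """Total growth factor after compounding weekly_rate for cycle_years."""
    return (1 + weekly_rate) ** (cycle_years * weeks_per_year)


# Hypothetical inputs: 1% weekly improvement, one institutional update every 3 years.
factor = growth_over_cycle(weekly_rate=0.01, cycle_years=3)
print(f"Change between two policy updates: about {factor:.1f}x")
```

Even at this modest assumed rate, the technology changes by a factor of nearly five between two consecutive institutional updates, which is why the gap behaves like a structural risk rather than a scheduling problem.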

One minor detail from the episode is worth recording: a discussion of 3D printer regulation. Several U.S. states are exploring rules around 3D printers because they can be used to manufacture weapons. The hosts use this as an analogy for AI regulation: once a technology reaches the consumer layer, traditional regulatory frameworks become nearly inoperable, because regulators target the hardware while the real risk lies in the knowledge (for AI, the model weights).

There’s also a dry observation about CAPTCHAs: now that AI can trivially pass Turing-style “prove you’re not a robot” tests, the entire authentication infrastructure built on that assumption has quietly failed. Nobody has a replacement.

IV. The Company of One: Five Years Out

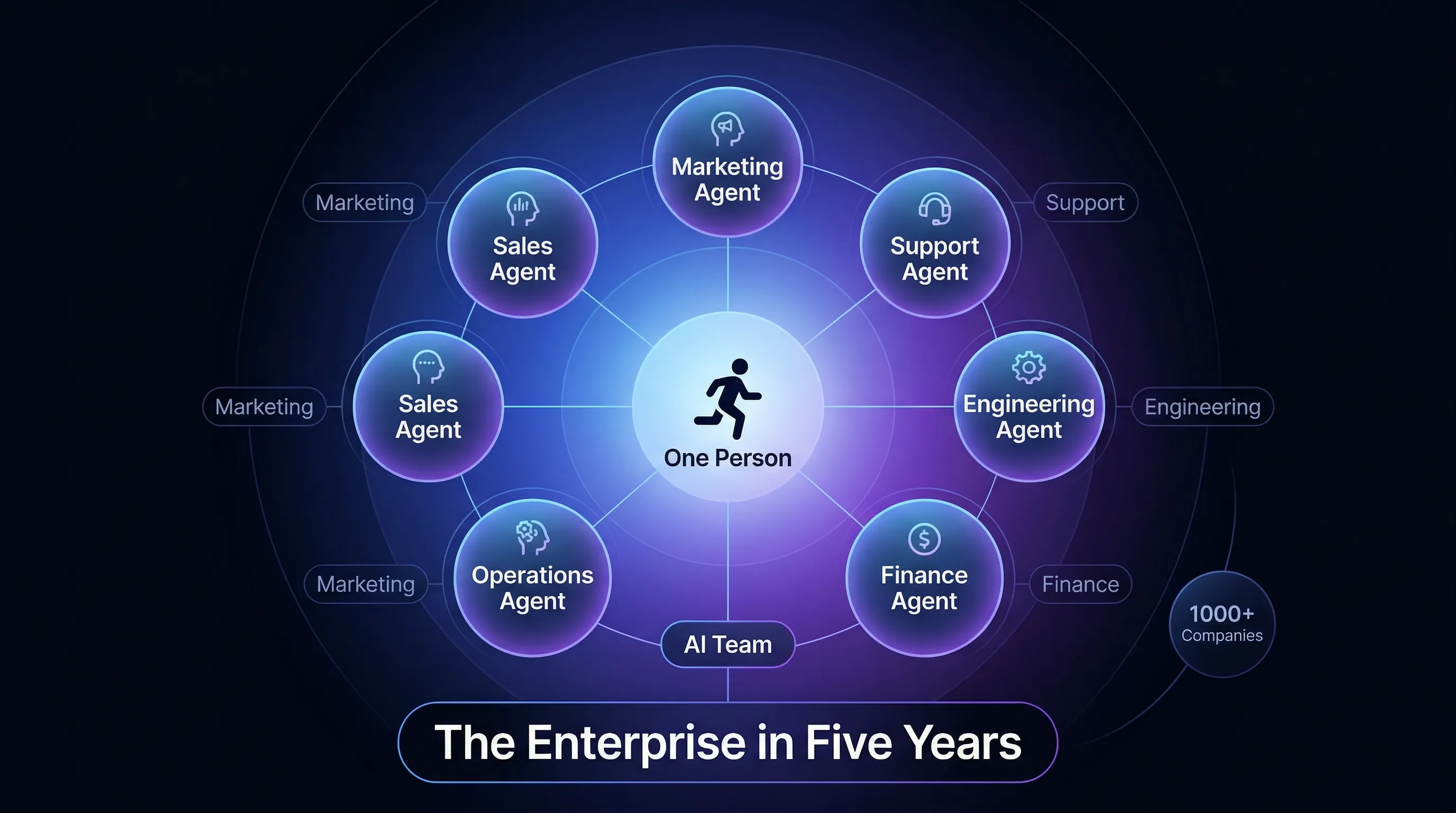

The near-future prediction that takes up the most airtime is the “single person conglomerate”:

“Where this ends up, not in the distant future, but in the medium term, like five years from now, is single person conglomerates. These are going to be agentic hosting systems where you log a brand, you pick a service, it’ll email and find customers for you. Most of the volume will eventually be dominated by algorithms.”

Supporting cases cited in the episode: pulsea AI currently operates over 1,000 companies autonomously; the Blizzy coding platform delivers 5x engineering velocity; Anthropic is building an enterprise agent marketplace for finance, banking, and HR; Burger King has deployed an AI voice assistant called Patti into employee earpieces; Uber employees built an AI clone of CEO Dara Khosrowshahi so staff can practice presentations to him.

Salim reaches for Clay Christensen’s Innovator’s Dilemma to advise large enterprises: use existing capital and customer relationships to enter new territory, or become “walking dead.” The Thiel-esque framing follows: if your company and board haven’t entered “founder mode,” you’re already a zombie.

This narrative has a genuinely accurate core: AI agents are moving from point tools to systemic workflow replacements. But it carries a structural blind spot: the five-year “single person conglomerate” prediction assumes complete technical success, with no mention of regulatory obstacles, trust-building timelines, or legal liability attribution. More significantly, “most volume dominated by algorithms” has a direct corollary — mass displacement of workers — and that topic goes nearly unaddressed across two hours of roundtable discussion.

V. The Real War Isn’t at the Algorithm Layer

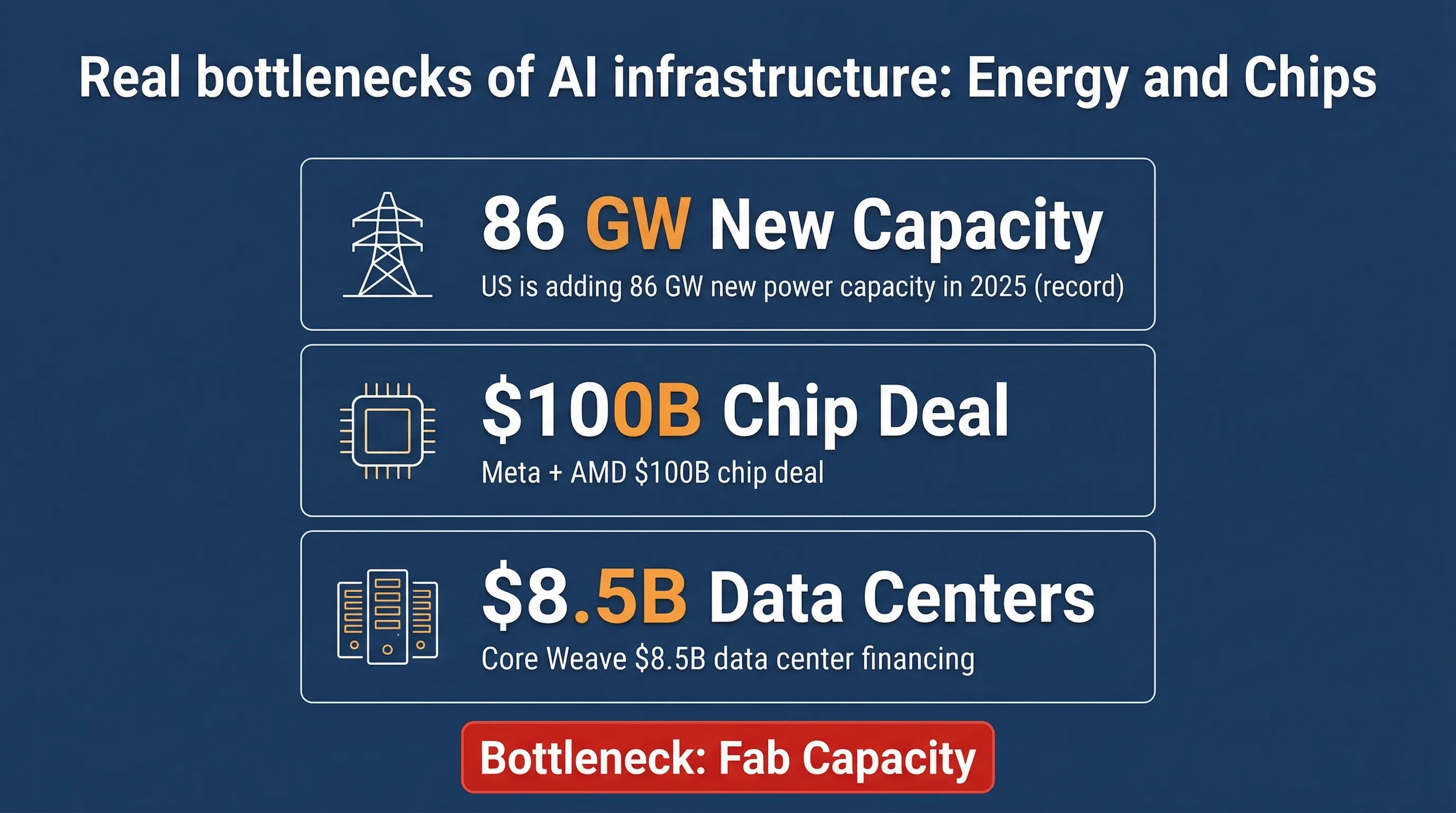

The episode’s coverage of energy runs longer than many might expect.

The United States plans to add a record 86 GW of utility-scale power generation capacity in 2026. The White House is pressuring tech giants to solve their own datacenter power requirements rather than relying on the public grid. Meta has a $100B AI chip agreement with AMD. CoreWeave raised $8.5B for datacenter construction.

Dave puts the current logic plainly: the bottleneck isn’t capital anymore — it’s wafer fab capacity. Whoever secures enough chip supply controls the core scarce resource in this competition.

One case study worth noting: Boom, a company founded to build supersonic aircraft, found FAA regulatory friction too severe and has pivoted to supplying power for datacenters. The hosts cite this as a classic example of demand-driven innovation.

That framing is reasonable enough — but it also illustrates something: the urgency of AI infrastructure buildout is reshaping the strategic choices of industries that have nothing to do with AI.

One thing goes entirely unaddressed in the energy discussion: the intermittency problem with renewables, and the actual construction timelines for nuclear power. “From this point forward, all new energy capacity will be renewable” is stated as a conclusion, but it carries substantial engineering and policy uncertainty that the show doesn’t engage.

VI. Longevity: The Gap Between Funding Heat and Clinical Reality

Diamandis’s enthusiasm for the longevity sector runs throughout the episode. The numbers: longevity startups raised $8.5B in 2024; 2025 projections are $12-18B. The personalized medicine market is expected to grow from $5 trillion to $8 trillion within four years.
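For scale, the growth rates implied by those figures can be worked out directly. This is a minimal sketch using only the numbers quoted above; the helper name `growth_rate` is illustrative, and the calculation assumes smooth compound growth over the stated period.

```python
def growth_rate(start, end, years=1):
    """Compound annual growth rate between start and end over `years` years."""
    return (end / start) ** (1 / years) - 1


FUNDING_2024 = 8.5e9  # longevity startup funding cited for 2024

low = growth_rate(FUNDING_2024, 12e9)      # low end of the 2025 projection
high = growth_rate(FUNDING_2024, 18e9)     # high end of the 2025 projection
market = growth_rate(5e12, 8e12, years=4)  # personalized medicine market claim

print(f"Funding growth, 2024 to 2025: {low:.0%} to {high:.0%}")
print(f"Implied market growth: {market:.1%} per year")
```

The funding projection implies growth of roughly 40% to over 110% in a single year, while the market claim implies about 12.5% per year. Both describe money flowing in, not treatments reaching patients.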

The featured case is Prime Medicine’s gene therapy — not treating chronic disease, but making one-time corrections to genetic defects, eliminating the need for lifelong medication. Diamandis’s advice is characteristically action-oriented: if you or a family member has a hereditary condition, start finding funding and teams now, because “the technology to cure disease is already here, and it’s accelerating.”

On AI-driven personal medicine, he offers a direct encapsulation:

“Having data about you analyzed by an AI is the game changer.”

He calls this the core of “escape velocity” — once you have comprehensive personal health data fed to an AI, you enter a health trajectory fundamentally different from conventional medicine.

Eli Lilly also comes up, with the hosts debating whether it’s about to join the Magnificent 7 to make it 8, given that GLP-1 drugs are expanding from obesity into metabolic syndrome, cardiovascular disease, and beyond.

There’s a structural issue here: funding volume and clinical outcomes are two different metrics. Gene therapies typically spend 7-12 years moving through clinical development and FDA review. The hosts handle regulatory timelines by skipping them. Between “the technology is here” and “the treatment is available,” there’s a considerable distance that doesn’t appear on this map.

Editorial Analysis

The Host’s Position

Diamandis’s entire cognitive framework rests on “exponential growth equals inevitable abundance,” the thesis he’s built over thirty years. That framework captures genuine technological acceleration, but it also systematically underweights unequal distribution, regulatory lag, and loss-of-control risks. His reaction to the Amazon-OpenAI financial entanglement (“I love it”), his acceptance of the financial definition of AGI, and his light treatment of collapsing safety commitments all reflect the limits of this framework.

Salim is, relatively speaking, the clearest voice on safety in this episode: he explicitly states that safety mechanisms historically fail in exponential races. He also argues that robotics will commoditize rapidly rather than betting on any single winner. But he still operates within the broader framework of exponential optimism.

Selective Argumentation

Two stories in this episode form a complete argument, but are processed separately: Anthropic abandoning safety guarantees (story 2) and Anthropic being used for military planning (story 4). Together, they demonstrate that the “alignment-first” positioning of AI safety companies is systematically shifting toward “capabilities-first.” But on the show, these two stories are separated by eight minutes of airtime, with no cross-analysis.

Questions Worth Asking

- If safety “emerges from competition,” what is the mechanism? When has that actually happened in the history of dual-use technology?

- Amazon’s $35B conditional investment is contingent on “achieving AGI.” Does Amazon receive $100B in return if the clause triggers, or does the investment convert? What are the exact economic incentives?

- Anthropic’s DoD contract: what is the scope of “planning strikes”? What level of authorization did Anthropic provide?

- “Longevity startups raised $8.5B” — is this equity funding, or does it include debt and grants? What is the comparative hit rate for clinical translation?

Facts to Verify

- Did OpenAI-Microsoft define AGI as $100B revenue or $100B profit?

- Anthropic 2026 revenue projection of $26B: who is the source, and what are the assumptions?

- Prime Medicine’s gene therapy: what stage is it in, and what are the FDA approval projections?

Key Takeaways

- AGI has a financial definition: per the OpenAI-Microsoft agreement, $100B in revenue or profit constitutes AGI. Amazon’s $35B conditional investment institutionalizes this definition.

- Anthropic’s pivot is real: abandoning safety commitments while contracting with the DoD is a documented shift from alignment-first to capabilities-first positioning — but the show doesn’t connect these two stories.

- The velocity mismatch is structural: technology compounds weekly, governance updates over years. This is a systemic risk, not a bureaucratic inefficiency.

- “Single person conglomerates”: a credible five-year direction for AI agents, but the prediction assumes away regulatory, trust, and liability obstacles — and avoids the displacement question.

- Infrastructure is the real bottleneck: chip fab capacity and power generation are now the binding constraints. Capital is not the limiting factor.

- Longevity funding ≠ longevity cures: $8.5B in startup funding and a 7-12 year path to FDA approval for gene therapy are two very different things.

Compiled from YouTube, 2026-03-06
