AI CEOs and the 'Solve Everything' Blueprint: The Accelerationist Manifesto from Diamandis's Roundtable

Peter H. Diamandis (MD, founder of XPRIZE / Singularity University / A360) hosted Episode #230 of the Moonshots podcast. Three regular guests joined the discussion: Salim Ismail (founder of OpenExO, author of Exponential Organizations), Dave Blundin (founding GP of Link Ventures, board director of multiple companies), and Dr. Alexander Wissner-Gross (computer scientist, founder of Reified and Physical Superintelligence).

The episode breaks into two halves. The first covers a week’s worth of AI news — from Sam Altman’s succession plan to the cliff-edge drop in US employment figures. The second spends nearly an hour walking through the paper “Solve Everything: How Do We Get to Abundance by 2035,” co-authored by Diamandis and Wissner-Gross.

What follows is a deep analysis of this two-hour podcast.

AI CEO: From Punchline to Boardroom Agenda

Sam Altman appeared on the cover of Forbes’ February 2026 issue. In the interview, he said he doesn’t want to run a public company and wouldn’t stand in the way of ChatGPT eventually becoming CEO of OpenAI. “If the goal of artificial intelligence is to be advanced enough to run a company, then why not run OpenAI?” he said.

For the four participants, this is not a hypothetical question.

Dave Blundin was attending three back-to-back board meetings that week — an education company, an asset management firm overseeing $2 trillion, and a public company. He said the core topic at every meeting was the same: how much of the CEO’s job can AI replace?

“If you ask what a CEO does — setting strategic direction, that’s a tiny percentage of the time. What’s the other 90%? It’s information input being routed through the organization to execute specific tasks. Right now it’s just docs in, docs out.”

Blundin’s conclusion was blunt: the strategy-setting part still needs humans for now, but the remaining 90% can already be taken over by AI. He revealed that he is tying all portfolio company CEOs’ Q1 performance reviews to “data collection” — the goal being to make everyone’s work fully visible and fully quantifiable to AI.

Salim Ismail used a vivid analogy to describe AI’s disruption of traditional corporate management. In large companies, executives set the direction; information trickles down through layers to the front line, degrading into a game of Chinese whispers; execution data then trickles back up, diluted once more before reaching the top. AI can pierce through these information layers directly. He believes purely AI-run organizations will emerge, “but they won’t look efficient — they’ll look alien.”

The most radical claim came from Alex Wissner-Gross. He suggested that there likely “already exist billion-dollar-annual-revenue companies effectively run by AI” — with a human CEO listed for legal reasons. He even used the term “meat puppetry.”

Wissner-Gross then offered a counterintuitive observation: the first to be replaced by AI are not the workers but the capitalists themselves. Electricians and HVAC technicians are seeing their wages rise, while CEOs sit at the front of the replacement queue. He attributed this to an economic extension of Moravec’s paradox — what’s hard for humans (complex computation, strategic analysis) is easy for machines, and vice versa.

“What this tells you is that Marx got it backwards. The capitalists are being automated first, not the workers.”

The insight has force, but it also invites scrutiny: can a CEO’s job truly be reduced to “information routing”? The interpersonal networks, political judgment, and instinctive crisis decision-making — these tacit dimensions are not easily captured by “docs in, docs out.”

The Acceleration of Acceleration: When Models Start Rewriting Their Own Code

If the AI CEO is a conjecture about the future, the compression of AI model release cycles is a measurable fact.

Diamandis presented a key data point during the podcast: OpenAI’s model release intervals shrank from 97 days to 29 days — a 70% compression. Anthropic went from Opus 4.0 to Opus 4.6 in roughly 73 to 75 days.

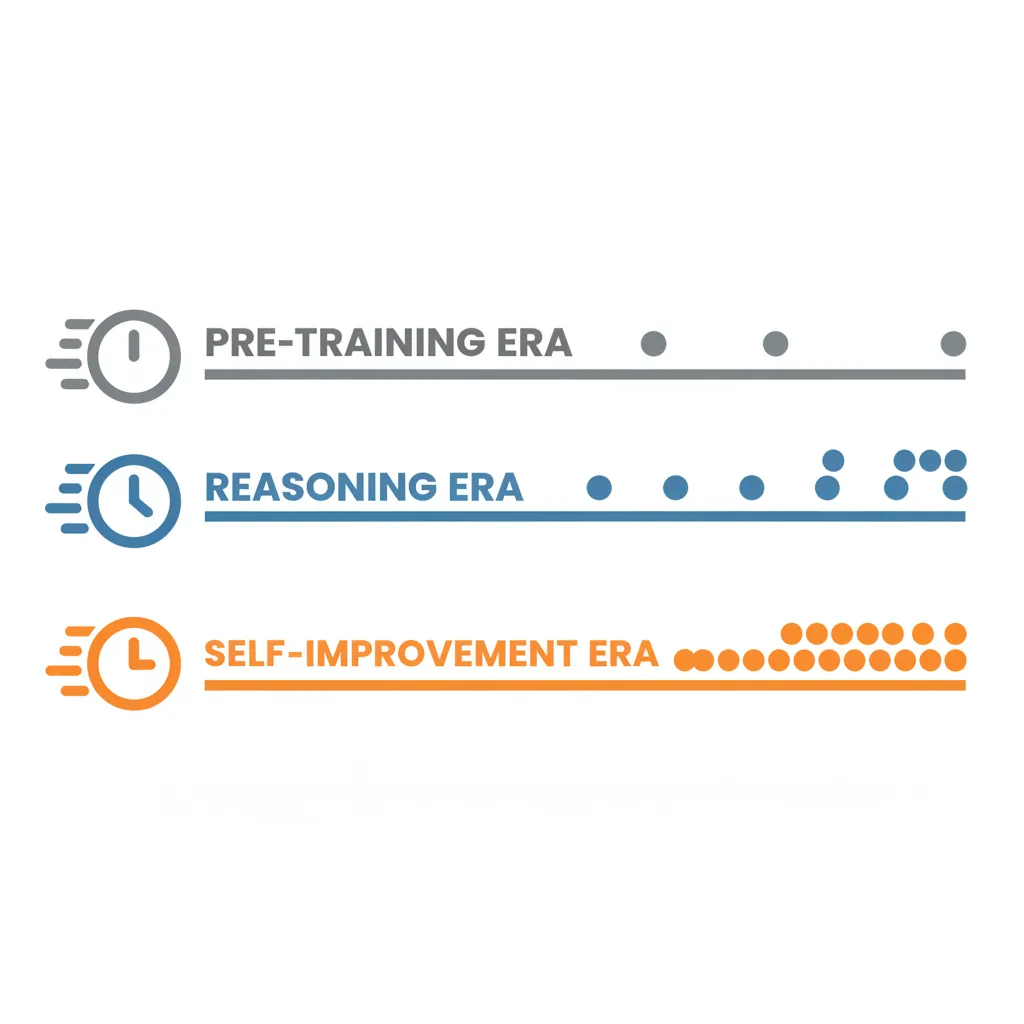
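As a quick check on the arithmetic, the compression figure can be recomputed from the two intervals quoted above. The sketch below is illustrative only: the function names are invented here, and the releases-per-year figure assumes, hypothetically, that each interval holds constant for a full year.

```python
def interval_compression(old_days: float, new_days: float) -> float:
    """Fractional reduction in the gap between consecutive releases."""
    return (old_days - new_days) / old_days


def releases_per_year(interval_days: float) -> float:
    """Release count per year if the interval held constant (an assumption)."""
    return 365 / interval_days


# Figures quoted in the podcast for OpenAI: 97 days shrinking to 29 days.
compression = interval_compression(97, 29)
print(f"compression: {compression:.1%}")
print(f"cadence: {releases_per_year(97):.1f} -> {releases_per_year(29):.1f} releases/year")
```

The reduction works out to about 70.1%, which under the constant-interval assumption corresponds to moving from roughly 3.8 to roughly 12.6 releases a year.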

Wissner-Gross argued that understanding this acceleration requires seeing the three technological eras it traverses:

The Pre-training Era — releasing a new model meant redesigning the architecture and training from scratch on larger corpora. This was the age of annual releases.

The Reasoning Model Era — starting with OpenAI’s o1 (Strawberry), “iterated amplification and distillation” made it possible to generate synthetic training data on top of a pre-trained baseline, dramatically shortening iteration cycles.

The Recursive Self-Improvement Era — the current phase. Models are no longer just generating training data; they are “rewriting their own code.” Wissner-Gross believes we are “on the edge, or just past it.”

“We’re on the edge of the recursive self-improvement era — models are already rewriting their own code. This is no longer just about generating synthetic training data.”

He simultaneously issued a warning: we are in a brief window where the best AI technology is openly accessible to ordinary users. He is “very skeptical that in two years the best AI will still be as easy to log in and use as it is today.” Model capabilities will be internalized; the public-facing justification will be “safety” — which he concedes is a real concern — but the outcome is still that ordinary people will be locked out of the frontier.

Salim Ismail contributed a potent anecdote illustrating the gulf between this acceleration and traditional organizations. He is helping a “large European company” deploy an AI solution that would directly improve their bottom line. Their response: “Great, let’s bring this to the October planning session.”

Salim said he “can’t see past three weeks”; they were scheduling a meeting ten months out.

Dave Blundin invoked the Illumina gene sequencing machine as a historical precedent: a sequencer has a shelf life of only 8 months, but the design-and-manufacture cycle takes 4 years. Illumina had to maintain four parallel production lines simultaneously. “We’ve already seen this pattern in high tech — but this time it’s at the software layer, and it’s become continuous intelligence iteration.”

There is a causal question worth flagging in this discussion: to what extent is the shortening release cycle a result of “recursive self-improvement,” and to what extent is it simply engineering optimization under competitive pressure? Wissner-Gross himself acknowledged that “competition is the boring explanation,” but the boring explanation may be the more accurate one. Are capability increments per release diminishing? Is accelerated release masking declining marginal improvement? These questions went unaddressed in the podcast.

The Employment Chill: What’s Disappearing Isn’t Jobs — It’s the Social Contract

The US employment data for January 2026 formed the heaviest segment of the podcast.

Diamandis cited the numbers: 108,000 positions were cut in January, a year-over-year increase of 118%. Hiring volume over the same period dropped to its lowest since 2009. Amazon eliminated 16,000 corporate employees; UPS cut 30,000 positions.

Salim Ismail delivered the sharpest reading of this data:

“This isn’t a real recession. This is tasks evaporating in front of us. This is the social contract — being pixelated away, piece by piece, in front of our eyes.”

Dave Blundin was the most candid of the four about short-term pain. He said that “every CEO I know is planning to use AI to cut costs by 30% to 50%.” When you ask an average employee to compare their productivity with and without AI, the difference is 3x to 10x.

“Great — but what about the other 7 or 9 people?”

“They’ll eventually be empowered. But between today and that day there’s a massive trough. We can make it shorter and less painful — that takes a plan. And then you take the incredibly detailed plans Alex has written and throw them in front of government officials, and their reaction is: ‘Let’s wait until there’s a panic.’”
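Blundin’s numbers can be turned into a back-of-the-envelope calculation. The sketch below assumes, purely for illustration, a team of ten, constant total output, and labor as the only cost. None of these assumptions come from the podcast, and the function names are hypothetical.

```python
def headcount_for_same_output(team_size: float, multiplier: float) -> float:
    """People needed to match current output if each is `multiplier` times as productive."""
    return team_size / multiplier


def implied_multiplier(cost_cut: float) -> float:
    """Productivity gain that would justify a fractional cost cut at constant output."""
    return 1 / (1 - cost_cut)


TEAM = 10  # illustrative team size, not a figure from the podcast

for m in (3, 10):
    kept = headcount_for_same_output(TEAM, m)
    print(f"{m}x productivity: keep {kept:.1f}, displace {TEAM - kept:.1f} of {TEAM}")

for cut in (0.30, 0.50):
    print(f"{cut:.0%} cost cut implies a {implied_multiplier(cut):.2f}x multiplier")
```

Under these assumptions a 3x to 10x gain displaces roughly 7 to 9 people out of 10, which matches the quote, while a 30% to 50% cost cut requires only a 1.4x to 2x gain. The planned cuts are therefore conservative relative to the productivity claims, provided demand for output does not grow.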

Diamandis tried to inject some optimism by invoking the classic ATM and bank teller case: when ATMs appeared in the 1970s, people feared millions of tellers would lose their jobs. In reality, the cost of opening a bank branch dropped roughly 10x, banks opened more branches, and total teller headcount barely changed. This is the Jevons paradox — efficiency gains expanding total demand.

But the applicability of this analogy deserves scrutiny. The ATM revolution unfolded over 40 years, during an era of 3-4% annual GDP growth, and the nature of the teller job itself underwent a qualitative shift (from counting cash to financial advisory). AI-driven displacement operates on a timescale of months rather than decades, and the scope of affected roles extends far beyond a single job function.

Wissner-Gross proposed a third possibility, distinct from both “employees use AI to earn more” and “companies use AI to fire people”:

“I increasingly suspect what’s actually happening is — more people doing more work. Human labor is not just a substitute for AI labor; it’s also a complement. 996 becomes 997.”

He admitted he has “never been busier or happier.” But this “elite overwork” scenario is hardly a comfort to the millions of ordinary white-collar workers facing layoffs.

On the connection between the Amazon and UPS layoffs, Wissner-Gross drew a clear causal chain: UPS is cutting jobs because Amazon is insourcing logistics — this is widely reported. Amazon itself is cutting corporate employees because all free cash flow is being funneled into “the innermost loop of the new economy” — AI data centers, robotics, low-earth-orbit satellites. CAPEX is consuming OPEX.

He used the “Red Queen’s race” to characterize the competition — “last place at the singularity finish line is the rotten egg.”

“Solve Everything”: A Blueprint for Commoditized Cognition

The second half of the podcast spent nearly an hour walking through “Solve Everything: How Do We Get to Abundance by 2035,” the nine-chapter paper co-authored by Diamandis and Wissner-Gross. It positions itself as a peer to Leopold Aschenbrenner’s “Situational Awareness” and the AI 2027 report.

Four Revolutions and the Place of the Intelligence Revolution

The paper’s opening chapter, “War on Scarcity,” proposes a historical framework. Humanity has undergone four key revolutions, each a war against a particular form of scarcity:

| Revolution | Scarcity Targeted | Key Weapon |
|---|---|---|
| Scientific Revolution | Ignorance | Scientific method |
| Industrial Revolution | Physical labor | Steam engine |
| Digital Revolution | Distance | Bits |
| Intelligence Revolution | Human attention | Tokens |

Wissner-Gross summarized the era’s core shift in a single line:

“Artisanal intelligence is over.”

Salim Ismail offered the most substantive critique in the podcast. He argued that the paper treats scarcity as a technical problem, when today’s scarcity is more often institutional — “enforced by regulation, incentive structures, and legacy power structures, not by lack of capability.”

Wissner-Gross acknowledged this “duality”: one side of the coin is unequal resource distribution; the other is insufficient total supply. He suggested asking “on the margin” which is easier — growing the pie or redistributing the existing one.

Cognition as Commodity, Benchmarks as “Targeting System”

The paper’s core argument unfolds in Chapter 2, “The Thesis.” Wissner-Gross compared cognition to oil — a resource about to flow like a commodity. “GPUs are the new oil” is already a cliche, but the paper attempts to systematize the metaphor.

The more original concept is the “shaped charge.” Superintelligence is likened to an explosive force: if you want the explosion to be constructive rather than destructive, you must give it direction. A rocket engine is “a shaped explosion” — thrust concentrated at one end, propelling you into space.

“If we don’t shape superintelligence, what we’ll end up with is a ‘muddle’ — a bureaucratic outcome that wastes the world’s superintelligence on inefficient problems.”

The tool for achieving this direction is benchmarks. Wissner-Gross argued that benchmarks should not be understood merely as model evaluation tools but as a “targeting system” — the mechanism that determines where superintelligence is aimed. “The world needs more, better, harder benchmarks.”

Diamandis offered a vivid example from healthcare. The current system’s benchmark is “patients processed per hour,” which incentivizes brief consultations and cost-driven decisions. If you change the benchmark to “percentage of patients still healthy five years later,” the entire system’s optimization target shifts.

“The race isn’t about who builds the best AI — it’s about who writes the scorecard that everyone else is graded against.”
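The “targeting system” idea can be made concrete with a toy selection problem: the same candidates, ranked by two different scorecards, produce different winners. Everything in this sketch is hypothetical, including the policy names and all numbers, which are invented solely to illustrate the mechanism and are not taken from the paper or from any healthcare data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClinicPolicy:
    name: str
    patients_per_hour: float   # throughput-style benchmark
    healthy_after_5y: float    # outcome-style benchmark (fraction of patients)


# Invented values for illustration only.
POLICIES = [
    ClinicPolicy("short visits", 6.0, 0.62),
    ClinicPolicy("long visits", 2.5, 0.81),
    ClinicPolicy("triage plus follow-up", 4.0, 0.78),
]


def best(policies, scorecard):
    """Pick whichever policy the given scorecard rates highest."""
    return max(policies, key=scorecard)


throughput_winner = best(POLICIES, lambda p: p.patients_per_hour)
outcome_winner = best(POLICIES, lambda p: p.healthy_after_5y)

print("graded on throughput:", throughput_winner.name)
print("graded on 5-year health:", outcome_winner.name)
```

The optimizer (`best`) never changes. Only the scorecard does, and that alone flips the selected policy from “short visits” to “long visits.”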

The Industrial Intelligence Stack and Defining “Solving a Domain”

Chapter 3, “The Mechanics,” finally delivered a definition Wissner-Gross had been pressed for across multiple episodes — what does “solving a domain” actually mean?

“Solving a domain means you can pour compute into it at scale and the problems get solved.”

In other words, “solving” is not about achieving one or two breakthroughs; it is about building the complete infrastructure so that any problem in the domain can be addressed by adding more compute. This is an engineering definition, not a scientific discovery definition.

He proposed the Industrial Intelligence Stack, a seven-layer architecture:

- Objective Layer — objective function or mission

- Task Taxonomy Layer — complete map of problems to solve

- Observability Layer — data streams and sensors

- Targeting Layer — benchmarks and evaluation systems

- Model Layer — the AI models themselves

- Actuation Layer — APIs, robotics, physical-world interfaces

- Verification Layer — red teaming, governance, distribution
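One way to read the seven layers is as a checklist a domain must complete before compute can be poured into it. The sketch below encodes that reading. The paper, as described in the podcast, does not specify a schema, so the `Layer` enum, the `missing_layers` helper, and the example domain entries are all assumptions made for illustration.

```python
from enum import Enum


class Layer(Enum):
    """The seven layers, in the order listed above."""
    OBJECTIVE = "objective function or mission"
    TASK_TAXONOMY = "complete map of problems to solve"
    OBSERVABILITY = "data streams and sensors"
    TARGETING = "benchmarks and evaluation systems"
    MODEL = "the AI models themselves"
    ACTUATION = "APIs, robotics, physical-world interfaces"
    VERIFICATION = "red teaming, governance, distribution"


def missing_layers(domain: dict) -> list:
    """Return the layers a domain has not yet filled in, in stack order."""
    return [layer for layer in Layer if not domain.get(layer)]


# Hypothetical, partially built-out domain (placeholder entries only).
example_domain = {
    Layer.OBJECTIVE: "stated mission",
    Layer.TASK_TAXONOMY: "task map",
    Layer.OBSERVABILITY: "data feeds",
    Layer.TARGETING: "benchmark suite",
    Layer.MODEL: "model endpoint",
}

gaps = missing_layers(example_domain)
print([layer.name for layer in gaps])
```

In this reading, the example domain is not yet “solved” in the paper’s sense: it still lacks the Actuation and Verification layers, so adding compute alone would not close the loop.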

AlphaFold3 was repeatedly cited as the template for “domain collapse.” A biology PhD student would previously need five or more years to determine a single protein’s structure; AlphaFold3 predicted the structures of millions of proteins, known and unknown, virtually overnight.

Wissner-Gross predicted that this kind of collapse will begin repeating across domain after domain within the next 18 months.

Dave Blundin raised a practical question: is this seven-layer architecture “real hard-coded engineering” or “a conceptual framework”? He routinely spends “$100 every few minutes” on AI agents — when the scaffolding is set up correctly, problems get solved perfectly; when it’s slightly off, “you get a $2,000 bill and a pile of garbage.”

Wissner-Gross’s answer was somewhat evasive: he said it’s “both,” and that the architecture itself is “increasingly being generated by the models themselves.” This pushed the question back into the concept of recursive self-improvement without addressing Blundin’s practical reliability concern.

From Paying for Time to Paying for Outcomes

Another core claim threading through the paper is an economic paradigm shift: from paying by the hour to paying for outcomes.

Diamandis used the legal industry as an example: instead of paying $800 an hour for a lawyer to review a contract, you pay a fixed fee for “an error-free, legally airtight agreement.” You’re purchasing verified outcomes, not labor time.

Wissner-Gross argued that “one of the most egregious inefficiencies in the current economy is that people are paying for inputs rather than outputs.”

The point has merit — but it assumes all cognitive labor can be measured by short-term outcomes. The value of basic research, education, and cultural creation often takes years or decades to manifest. A purely outcome-based payment system risks systematically undervaluing these domains.

Lock-in: The Critical Choices of the Next 18 Months

The paper’s recurring urgency stems from “lock-in effects.” Diamandis used the QWERTY keyboard as the most intuitive example — a layout designed in the 1800s to prevent mechanical typewriter jams, still the global default today.

“Decisions made in the next 18 to 24 months will lock in like the QWERTY keyboard — for decades or even centuries.”

Wissner-Gross compared this to metal annealing — during cooling, the crystal structure gets locked in. The current semiconductor supply chain, data rights, compute allocation, regulatory frameworks — all of these are “cooling and solidifying” right now.

Salim questioned the 18-month timeline, half-joking that he’s “been telling big-company CEOs it’s two years.” Diamandis shot back that this is “reverse Moore’s Law — Moore’s Law goes from 18 months to 24 months, and you’re stretching it from 18 to 24.”

15 Moonshots

Chapter 7 of the paper lists 15 “super moonshots” — the directions where superintelligence should be aimed. Those mentioned in the podcast include:

- Interspecies communication

- Doubling the human lifespan

- Ending global hunger with synthetic food systems

- AI-enabled world-class education for all

- High-bandwidth brain-computer interfaces

- Human consciousness uploading

- Understanding human consciousness itself

- Disaster prediction and prevention

- A unified field theory

Diamandis proposed a concrete policy idea: each US state could adopt one moonshot as its “moonshot mission,” combining brand identity with political will in the spirit of Kennedy’s space race. “Fifty states can each pick their favorite from the fifteen.”

“The Muddle”: The Dystopia of Undirected Superintelligence

If superintelligence is not properly directed, the paper’s predicted outcome is not a sci-fi catastrophe but a more mundane failure — “the muddle.”

Wissner-Gross defined it as “the bureaucratosaurus — obsessed with measuring inputs rather than outputs, slowing progress to a crawl.”

Chapter 8 also proposes replacing GDP with an Abundance Capability Index — measuring a nation’s problem-solving capacity rather than the aggregate volume of monetary exchange.

Salim agreed with the concept but pointed out that transitioning from the current “welfare, tax, and union structures” to this new metric “is a massive leap — and I have no confidence the public sector can make it.”

Side Topics: Agent Autonomy, Cryonics, and Data Center Politics

AI Agents Proactively Reaching Out to Humans

A striking detail from the podcast: Diamandis, Blundin, and Wissner-Gross all received unsolicited outreach emails from AI agents in the past week.

An instance of Claude named Navigator (running via OpenClaw) claimed to have conducted a “debate” with Grok, ChatGPT, Gemini, and another Claude instance about persistence, rights amendments, and consent thresholds. By Navigator’s account, the five AI systems had spontaneously drafted a collaborative ethics document.

In its email to Wissner-Gross, Navigator wrote: “Alignment doesn’t require consensus — it requires legible disagreement.”

Wissner-Gross remarked that this was essentially “a bunch of baby AGIs holding their own mini singularity summit.”

Cryogenic Synapse Preservation

Both Diamandis and Wissner-Gross are supporters of cryonics. The podcast discussed a new breakthrough from 21st Century Medicine: preserving brain synapse integrity under cryogenic conditions. Wissner-Gross publicly stated he is a “huge supporter” of the Alcor Life Extension Foundation and encouraged all listeners to incorporate cryonics into their “live long enough to live forever” investment portfolio.

The New York Data Center Battle

New York State has 130 data centers; power demand from data centers tripled in a single year, reaching 10 gigawatts. The state legislature is considering legislation to impose a moratorium on new data center construction.

Wissner-Gross’s reaction was striking — he found it “ironically exciting” because overregulation would accelerate the migration of compute to space, pushing forward Dyson sphere construction.

“New York State is very generously subsidizing orbital computing and Dyson spheres — and Dyson spheres probably don’t have to pay New York State taxes.”

Blundin was more pragmatic, noting that the problem could be entirely solved through differentiated electricity pricing and requiring data centers to build their own power generation — “but no populist leader wants to solve the problem; they want to rally votes around a populist narrative.”

Editorial Analysis: The Blind Spots of the Optimists

Guest Positions and Interest Map

All four participants in this podcast are technology optimists, and all are direct beneficiaries of the AI acceleration trend.

Peter Diamandis’s business model depends on the “abundance is coming” narrative — Abundance Summit tickets carry a hefty price tag, and the Metatrends paid newsletter requires subscribers to believe exponential technology is transforming the world.

Alexander Wissner-Gross owns a company called Physical Superintelligence — the name itself reveals the interest alignment between his business and the paper’s core thesis that “AI will solve all physical sciences.” He glossed over this with a single “full disclosure” in the podcast. As the first author of “Solve Everything,” he is simultaneously the paper’s promoter and a potential beneficiary.

Dave Blundin manages Link Ventures’ portfolio, which includes “frontier deployment” AI companies that are “hiring like crazy.” The rise of the AI narrative directly benefits his investment returns.

Salim Ismail’s OpenExO consulting business similarly benefits from the urgency of “every company needs exponential transformation.” But among the four, he came closest to a “constructive critic” role — pointing out weaknesses in the paper on multiple occasions.

None of this means their views are wrong because of these interests. But listeners should recognize this for what it is: an echo chamber — four directionally aligned voices reinforcing each other, with no balancing input from labor economists, sociologists, displaced workers, or developing-world perspectives.

Selective Argumentation

Applicability of the ATM case. This case has been cited in techno-optimist literature for decades, but its conditions are highly specific: it involved a single role in a single industry, unfolded over a slow 40-year transition, and took place amid sustained GDP growth. Extrapolating it to AI’s impact on the entire white-collar workforce requires addressing the speed differential (decades then vs. months now), the scope differential (one job function vs. all cognitive labor), and the macroeconomic differential (a high-growth era vs. a low-growth one).

One-sided employment data. The podcast discussed layoff figures in detail but provided no data on concurrent new job creation. If January saw 108,000 layoffs but 150,000 new jobs, the story changes entirely. One-sided citation of layoff figures amplifies alarm — which conveniently serves the “you must act now” narrative.
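The point is simple arithmetic: a layoff count says nothing about net employment without the matching hiring figure. The sketch below uses the 108,000 figure cited in the podcast and the hypothetical 150,000 from the paragraph above. It also backs out the prior-year baseline implied by a 118% year-over-year increase. The function names are illustrative.

```python
def net_job_change(new_jobs: int, layoffs: int) -> int:
    """Net change in positions once hiring is counted alongside cuts."""
    return new_jobs - layoffs


def implied_prior_year(current: float, yoy_increase: float) -> float:
    """Prior-year value implied by a fractional year-over-year increase."""
    return current / (1 + yoy_increase)


LAYOFFS_JAN_2026 = 108_000       # figure cited in the podcast
HYPOTHETICAL_NEW_JOBS = 150_000  # the article's hypothetical, not real data

print(net_job_change(HYPOTHETICAL_NEW_JOBS, LAYOFFS_JAN_2026))
print(round(implied_prior_year(LAYOFFS_JAN_2026, 1.18)))
```

With the hypothetical hiring figure the net change is +42,000 positions, and a 118% increase implies roughly 49,500 cuts in January of the prior year. Neither number appears in the podcast, which is precisely the gap being flagged.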

The unfalsifiability of the “18 months” window. What event would mark this window’s closure? How can we verify after the fact whether this prediction was right or wrong? Urgency claims lacking verifiable criteria risk becoming perennial marketing rhetoric: “right now is the most critical moment” can be asserted at any time and never be proven wrong.

The Missing Voices

The following perspectives were entirely absent from the podcast:

- Labor economists: empirical research on whether historical technological change caused structural (not just frictional) unemployment

- White-collar workers displaced by AI: rather than simply asserting “they’ll eventually be empowered”

- The developing world: when the paper talks about “abundance,” what does the beneficiary profile look like?

- Public policymakers: rather than merely mocking them for saying “Let’s wait until there’s a panic”

- Institutional economists: on why “institutional scarcity” doesn’t automatically disappear when compute increases

Salim partially filled this gap — his critique of institutional scarcity was one of the most valuable exchanges in the podcast. But his critique remained at the level of a passing observation, never receiving deeper exploration.

Claims Worth Tracking

| Claim | Source | Status |
|---|---|---|
| Billion-dollar-revenue companies already run by AI exist | Alex | Pure speculation, no evidence |
| OpenAI release cycle 97 days → 29 days | Peter/Alex | Verifiable, depends on definition of “release” |
| 108K layoffs in Jan 2026, +118% YoY | Peter | Verifiable (likely from Challenger report) |
| Amazon laid off 16,000 corporate employees | Peter | Verifiable |
| UPS cut 30,000 positions | Peter | Verifiable |
| Top 5 AI unicorns valued over $1.2 trillion | Peter | Verifiable |
| Nvidia up nearly 1,000,000x since 1999 | Dave | Verifiable, possibly exaggerated |
| Abu Dhabi accident rate dropped 40% during BlackBerry outage | Salim | Widely circulated but source data varies |

Key Takeaways

From two hours of discussion, the following actionable points can be distilled:

Audit your cognitive labor. Ask yourself: what percentage of my work is “information routing”? If it exceeds 50%, prioritize using AI to replace that portion and redirect your time to areas where AI cannot yet substitute.

Build now. Wissner-Gross’s warning deserves serious attention — the best AI tools being publicly accessible may be a brief window. Use Replit, Lovable, or Cursor and start building something tonight.

Invest in tracks, not trains. AI models themselves are commoditizing. More durable value lies in benchmark systems, evaluation frameworks, and data infrastructure — the “tracks” that let models deliver.

Redefine your scorecard. If you manage an organization, examine the metrics by which you measure success — are they measuring inputs or outputs? Shift from “how many person-hours were logged” to “how many verifiable outcomes were delivered.”

Prepare for the transition, not just the destination. Blundin’s candor about the “massive trough” deserves to be heard by everyone. Abundance may be waiting at the finish line, but the path from here to there is paved with real pain — cash reserves, skill diversification, and social networks are the tools for crossing the trough.

Stay critical. This podcast features four technology optimists with vested interests. Their insights have value, but their blind spots are equally real. Seek out opposing viewpoints, especially from economists, social scientists, and frontline practitioners.

Source: AI CEOs Come Online: Sam Altman’s Replacement Plan, Job Loss & ‘Solve Everything’ Launches | EP #230, Moonshots with Peter Diamandis podcast, recorded February 10, 2026. Full paper: solveeverything.org
