Gemini Now Uses WeatherNext 2: You Might Have the World's Most Accurate Weather Forecast

Here’s a change that has received very little attention.

Open Gemini right now and ask “What’s the weather like tomorrow?” The forecast you get back isn’t from a traditional weather data API anymore — it’s generated by WeatherNext 2, Google DeepMind’s most advanced AI weather forecasting model.

Google didn’t make a big deal out of it. In November 2025, they quietly announced on their official blog that WeatherNext 2 had been integrated into Google Search, Gemini, Pixel Weather, and Google Maps. Every time you ask Gemini about the weather, you’re getting forecasts from this model.

Three Generations: From Research to Product

WeatherNext 2 didn’t appear out of nowhere. It went through three generations of iteration:

| Model | Year | Breakthrough |
|---|---|---|
| GraphCast | 2023 | Graph Neural Networks for weather forecasting; 10-day forecasts surpassed ECMWF’s HRES for the first time |
| GenCast | 2024 | Diffusion model generating 50+ possible weather trajectories; outperformed ECMWF ENS on 97.2% of targets (Nature paper) |
| WeatherNext 2 | 2025 | New FGN architecture, 8x faster, outperforms predecessors on 99.9% of metrics |

Each generation solved its predecessor’s bottleneck: GraphCast could only give a single deterministic answer; GenCast could give probability distributions but was slow; WeatherNext 2 gives probability distributions and is both fast and accurate.

Why It’s More Accurate Than Traditional Forecasting

Traditional weather forecasting runs on Numerical Weather Prediction (NWP) — simulating atmospheric motion with physics equations. This approach has been used for decades, but has two fundamental problems:

First, discretization errors accumulate. The atmosphere is inherently a nonlinear chaotic system. NWP must discretize continuous physics equations onto a grid, which inevitably introduces systematic errors that grow exponentially over the forecast horizon.

Second, compute is a hard bottleneck. ECMWF’s HRES system needs hundreds of supercomputer nodes running for hours to produce a single 10-day forecast. This limits how many forecasts can run per day and constrains resolution.

WeatherNext 2 takes a different path — instead of simulating physics, it learns weather evolution patterns directly from 40 years of historical weather data.

Its core architecture is FGN (Functional Generative Network), a Graph Transformer-based generative network:

- 180 million parameters, 24 Transformer layers, 768-dimensional latent space

- 0.25° global grid, covering 6 atmospheric variables at 13 pressure levels + 6 surface variables

- 6-hour timesteps, generating complete 15-day global weather trajectories

- Under 1 minute per forecast on a single TPU v5 (physics-based models take hours on a supercomputer)
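To get a feel for the scale these numbers imply, here is a back-of-envelope calculation in Python. It only multiplies out the figures listed above; the one assumption is that the 0.25° latitude axis includes both poles (as in ERA5-style grids), which gives 721 rows rather than 720.

```python
# Back-of-envelope sizes implied by the architecture figures listed above.
GRID_DEG = 0.25
PRESSURE_LEVELS = 13
ATMOS_VARS = 6
SURFACE_VARS = 6
STEP_HOURS = 6
FORECAST_DAYS = 15

# Assumption: the latitude axis includes both poles, hence the +1.
n_lat = int(180 / GRID_DEG) + 1
n_lon = int(360 / GRID_DEG)

# 6 atmospheric variables at each of 13 levels, plus 6 surface variables.
channels = ATMOS_VARS * PRESSURE_LEVELS + SURFACE_VARS

# Number of 6-hour steps in a 15-day trajectory.
steps = FORECAST_DAYS * 24 // STEP_HOURS

values_per_step = n_lat * n_lon * channels
values_per_trajectory = values_per_step * steps

print(f"grid: {n_lat} x {n_lon}, channels: {channels}, steps: {steps}")
print(f"values per step: {values_per_step:,}")
print(f"values per 15-day trajectory: {values_per_trajectory:,}")
```

Under that assumption, one global state is roughly 87 million numbers, and a single 15-day trajectory is about 5.2 billion.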

How It Handles Uncertainty

The core challenge of weather forecasting is uncertainty. Nearly identical initial conditions can lead to completely different weather outcomes, and the true initial state is never known exactly.

WeatherNext 2 separates uncertainty into two types, handling each differently:

Epistemic uncertainty — from limited data and imperfect learning. Solution: train 4 independently initialized models as a Deep Ensemble, each with 180M parameters.

Aleatoric uncertainty — inherent atmospheric randomness that cannot be eliminated. Solution: inject noise through Functional Perturbations. A single 32-dimensional noise vector influences the entire global field, and the model naturally learns to keep perturbations physically consistent — because that’s the most effective way to minimize error.
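The way the two sources of spread combine can be illustrated with a toy sketch. This is not the FGN implementation: `make_toy_model`, `sample_ensemble`, `GRID_POINTS`, and the linear projection are hypothetical stand-ins, and only the counts (4 models, 32-dimensional noise) come from the description above. The point is that one low-dimensional noise vector feeds every grid point, so the perturbation is shared across the field instead of being drawn independently per location.

```python
import random

NOISE_DIM = 32    # from the article
N_MODELS = 4      # from the article
GRID_POINTS = 8   # toy size; the real field is a 0.25-degree global grid


def make_toy_model(seed):
    """Hypothetical stand-in for one trained model.

    Each 'model' is just a fixed random projection from the noise vector
    to every grid point; different seeds mimic independent initialization.
    """
    rng = random.Random(seed)
    weights = [[rng.gauss(0, 0.1) for _ in range(NOISE_DIM)]
               for _ in range(GRID_POINTS)]

    def step(state, noise):
        # The same noise vector is used at every grid point, so the
        # perturbation is correlated across the whole field.
        return [s + sum(w * z for w, z in zip(row, noise))
                for s, row in zip(state, weights)]

    return step


def sample_ensemble(state, members_per_model, seed=0):
    rng = random.Random(seed)
    models = [make_toy_model(m) for m in range(N_MODELS)]
    members = []
    for model in models:                     # epistemic: different models
        for _ in range(members_per_model):   # aleatoric: different noise draws
            noise = [rng.gauss(0, 1) for _ in range(NOISE_DIM)]
            members.append(model(state, noise))
    return members


initial = [15.0] * GRID_POINTS
ensemble = sample_ensemble(initial, members_per_model=14)
print(len(ensemble), "members, each with", len(ensemble[0]), "grid points")
```

With 14 noise draws per model, the four models together yield 56 members, in line with the "50+" figure quoted for each forecast.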

Each forecast generates 50+ ensemble members, each representing a possible weather trajectory. Instead of giving you a single temperature number, it tells you “70% chance of rain, 30% chance of clear skies.”
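A percentage like that is typically just the fraction of ensemble members in which the event happens. A minimal sketch, using made-up rainfall totals and an illustrative 0.2 mm threshold for "rain" (both are assumptions, not values from Google):

```python
def event_probability(members, is_event):
    """Fraction of ensemble members in which the event occurs."""
    hits = sum(1 for m in members if is_event(m))
    return hits / len(members)


# Hypothetical 24-hour rainfall totals (mm) at one location, one per member.
rain_mm = [0.0, 0.0, 0.0, 2.5, 4.1, 0.3, 7.8, 1.2, 0.6, 3.3]

# Assumption: "rain" means at least 0.2 mm; the threshold is illustrative.
p_rain = event_probability(rain_mm, lambda mm: mm >= 0.2)
print(f"chance of rain: {p_rain:.0%}")
```

Seven of the ten hypothetical members exceed the threshold, so this toy ensemble reports a 70% chance of rain.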

An Unexpected Discovery

The research team found a surprising property: the model was trained only on marginal distributions of individual variables, yet it learned to predict joint distributions across multiple variables.

In other words, during training you only teach the model “how temperature typically evolves” and “how wind speed typically evolves,” but it learns on its own that “when temperature changes this way, wind speed is likely to change that way.” These cross-variable physical correlations weren’t engineered — the model discovered them from the data.
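Why this is surprising can be shown with a small sketch. A score computed one variable at a time cannot see how variables are paired across ensemble members. The example below uses CRPS as the per-variable score; that choice is an assumption for illustration, since the text above does not name the training objective. The data are made up.

```python
def crps(samples, observed):
    """Ensemble estimate of CRPS for one scalar variable (lower is better)."""
    n = len(samples)
    accuracy = sum(abs(x - observed) for x in samples) / n
    spread = sum(abs(a - b) for a in samples for b in samples) / (2 * n * n)
    return accuracy - spread


def correlation(xs, ys):
    """Pearson correlation between two equally long sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


# Hypothetical 5-member ensemble: temperature (deg C) and wind speed (m/s).
temperature = [10.0, 12.0, 14.0, 16.0, 18.0]
wind_linked = [2.0, 3.0, 4.0, 5.0, 6.0]    # rises with temperature
wind_shuffled = [5.0, 2.0, 6.0, 3.0, 4.0]  # same values, pairing broken

observed_wind = 4.5
score_linked = crps(wind_linked, observed_wind)
score_shuffled = crps(wind_shuffled, observed_wind)

print("marginal wind score:", score_linked, "vs", score_shuffled)
print("temp/wind correlation:",
      round(correlation(temperature, wind_linked), 2), "vs",
      round(correlation(temperature, wind_shuffled), 2))
```

Both wind ensembles get the same marginal score, yet only one preserves the link between temperature and wind. A per-variable score alone does not reward the correct pairing, which is why a model that learns it anyway is a notable result.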

How to Use It

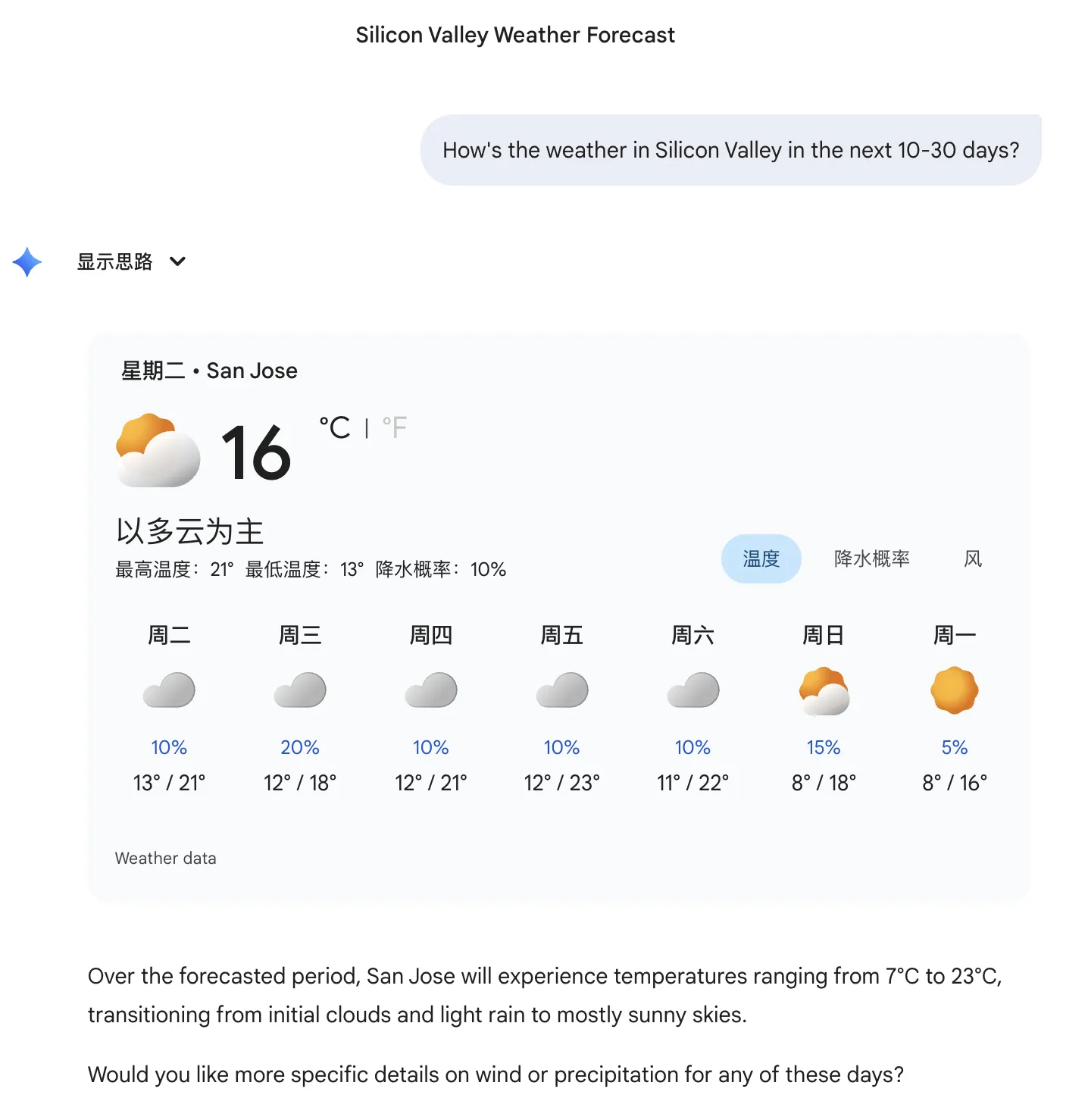

Open Gemini and just ask:

- “What’s the weather in Shanghai this weekend?”

- “Will it rain in Tokyo over the next 5 days?”

- “Is next week good for camping?”

Gemini draws on WeatherNext 2’s forecast data to answer. The same data also powers Google Search’s weather cards and the Pixel Weather app.

If you’re a developer, you can access the forecast data through the Google Maps Platform Weather API, Earth Engine, and BigQuery.

A quick aside: I asked Gemini directly “Are you using WeatherNext 2?” and it said no. This is actually understandable for two reasons:

- Training knowledge has a cutoff date. WeatherNext 2 was released in November 2025, and Gemini’s training data likely doesn’t cover that timeframe yet — so it simply “doesn’t know” it’s already using WeatherNext 2.

- Gemini isn’t great at searching to verify its own capabilities. While Gemini has search tools, when facing self-reflective questions like “what technology do you use,” it tends to rely on training knowledge rather than actively searching for the answer.

But according to Google’s official blog, WeatherNext 2 is indeed the backend engine powering Gemini’s weather features. That beautiful weather card you see when asking about weather? That’s WeatherNext 2 at work.
