How LLMs Got Faster: The Four Layers Behind GPT-5.3-Codex and Claude Fast Mode

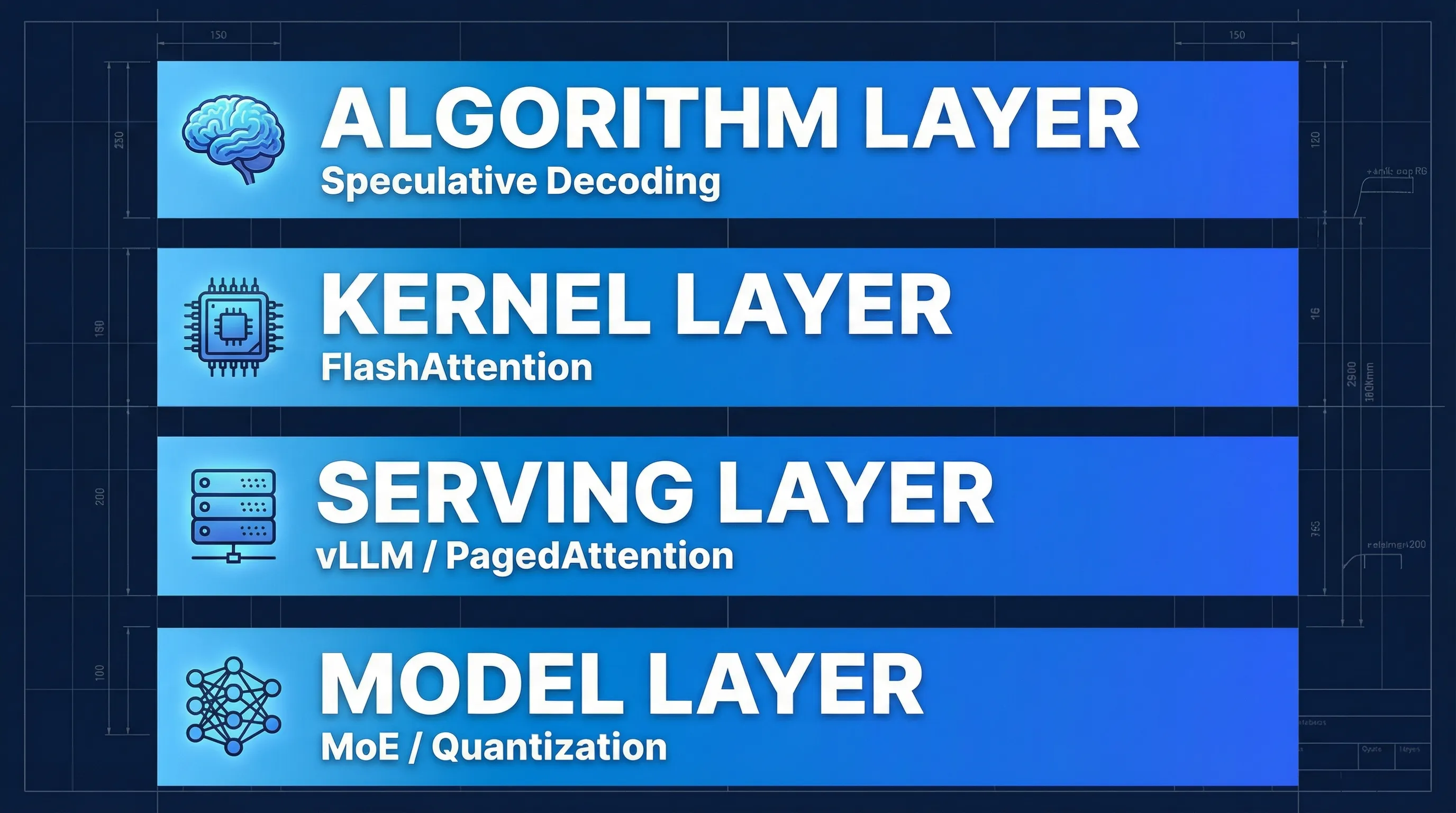

TL;DR: OpenAI and Anthropic both shipped major inference speed improvements in early 2026. GPT-5.3-Codex is ~25% faster; Claude Opus 4.6’s Fast Mode delivers up to 2.5x higher output token throughput. Despite different product packaging, both rely on the same four-layer acceleration stack: algorithms (Speculative Decoding), kernels (FlashAttention), serving systems (vLLM/Continuous Batching), and model architecture (MoE/quantization). This post breaks down each layer with 10 supporting papers.

Why LLMs Suddenly Got Faster

If you’ve been using Claude or GPT regularly, you’ve probably noticed: responses are noticeably faster.

It’s not your imagination. In early 2026, both major model providers shipped speed improvements almost simultaneously:

- OpenAI: GPT-5.3-Codex is ~25% faster than its predecessor

- Anthropic: Claude Opus 4.6 launched Fast Mode, with output tokens per second (OTPS) up to 2.5x higher

Interestingly, they took different product approaches – but the underlying technical principles are remarkably similar.

OpenAI’s Approach: Infrastructure + Inference Stack

OpenAI stated the numbers directly in the GPT-5.3-Codex announcement:

“…which is also 25% faster.” – OpenAI - Introducing GPT-5.3-Codex

The attribution:

“…thanks to improvements in our infrastructure and inference stack…”

That’s deliberately vague. OpenAI didn’t disclose which specific optimization techniques they used – whether it’s a particular Speculative Decoding variant, kernel-level improvements, or serving system upgrades. The System Card provides no additional technical detail on the speed gains.

This looks like a full-stack engineering effort rather than a single algorithmic breakthrough.

Anthropic’s Approach: Same Model, Different Inference Config

Anthropic was more transparent. Fast Mode isn’t a new model – it’s an accelerated inference configuration for Opus 4.6:

“Fast mode runs the same model with a faster inference configuration.” – Anthropic Docs - Fast mode

The key detail:

“There is no change to intelligence or capabilities.”

Same weights, same capabilities, just faster inference. The official claim is up to 2.5x higher output token throughput.

One nuance worth noting: Fast Mode primarily optimizes the output phase (OTPS), not necessarily time to first token (TTFT). For long-output tasks (writing code, generating articles), the speedup is very noticeable. For short replies, less so.

The Four Layers of LLM Speed

At a high level, LLM inference speed is described by three metrics:

| Metric | Meaning | Who cares |
|---|---|---|
| TTFT | Time to first token | Conversational UX, perceived responsiveness |
| OTPS | Output tokens per second | Throughput for long-output tasks |
| E2E | End-to-end latency | Agent workflows, total task time |
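The three metrics are linked: to a first approximation, end-to-end latency is TTFT plus the number of output tokens divided by OTPS. Here is a rough sketch of that relationship, showing why an output-speed boost helps long generations far more than short replies. The baseline numbers (`BASE_TTFT_S`, `BASE_OTPS`) are made up for illustration, and the 2.5x factor is applied to OTPS only, with TTFT assumed unchanged.

```python
def e2e_latency(ttft_s: float, output_tokens: int, otps: float) -> float:
    """Rough end-to-end latency: wait for the first token, then stream the rest."""
    return ttft_s + output_tokens / otps

# Hypothetical baseline numbers, chosen only for illustration.
BASE_TTFT_S = 1.0
BASE_OTPS = 40.0
FAST_OTPS = BASE_OTPS * 2.5   # "up to 2.5x" output speed; TTFT assumed unchanged

def speedup(output_tokens: int) -> float:
    """End-to-end speedup of the faster config for a given output length."""
    base = e2e_latency(BASE_TTFT_S, output_tokens, BASE_OTPS)
    fast = e2e_latency(BASE_TTFT_S, output_tokens, FAST_OTPS)
    return base / fast

for n in (20, 200, 2000):
    print(f"{n:>5} output tokens: {speedup(n):.2f}x faster end-to-end")
```

Under these assumptions a 20-token reply gets only about 1.25x faster, while a 2,000-token generation approaches the full 2.5x.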

Making all three better simultaneously requires coordinated optimization across four layers.

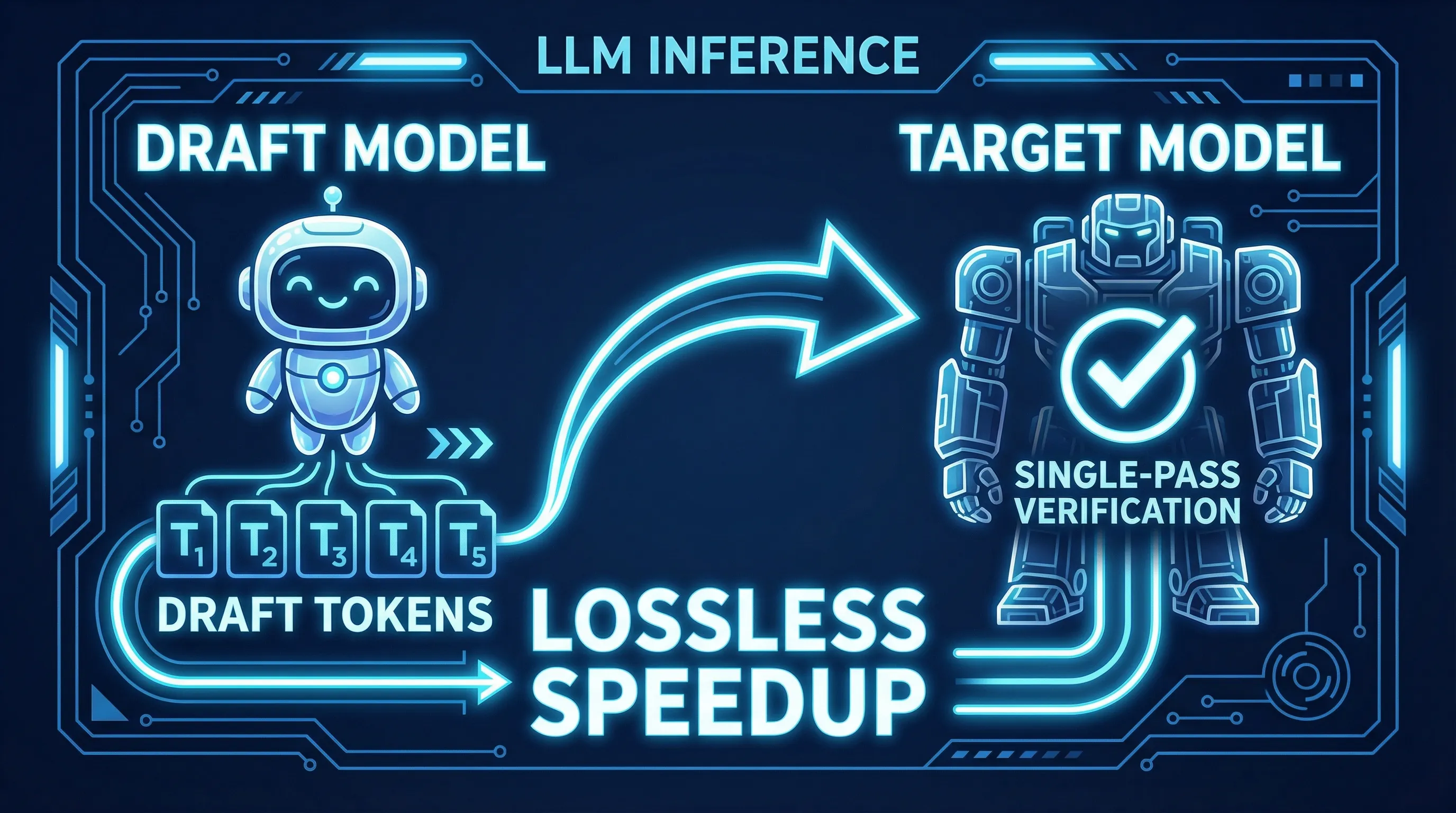

Layer 1: Algorithms – Speculative Decoding

This is the most elegant optimization in the stack.

Traditional LLM generation is strictly sequential: generate one token, run a full forward pass, generate the next. For large models, each forward pass is expensive.

Speculative Decoding flips this: a small “draft” model quickly proposes multiple tokens, then the large model verifies them all in a single forward pass. When the draft model guesses correctly (which happens frequently for predictable sequences), the large model effectively validates multiple tokens at the cost of one forward pass.
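How much this buys depends on how often the draft is right. A rough sketch using the expected-tokens formula from Leviathan et al., under the simplifying assumption that each drafted token is accepted independently with the same probability. The names `alpha` (acceptance rate) and `gamma` (draft length) and the example values are illustrative, and the cost of running the draft model is ignored.

```python
def expected_tokens_per_pass(alpha: float, gamma: int) -> float:
    """Expected tokens produced per large-model forward pass.

    alpha: probability that a drafted token is accepted (assumed i.i.d.)
    gamma: number of tokens the draft model proposes per round
    """
    if alpha >= 1.0:
        return float(gamma + 1)          # every draft accepted, plus one bonus token
    return (1 - alpha ** (gamma + 1)) / (1 - alpha)

for alpha in (0.5, 0.8, 0.9):
    tokens = expected_tokens_per_pass(alpha, gamma=4)
    print(f"acceptance {alpha:.1f}: ~{tokens:.2f} tokens per large-model pass")
```

With an acceptance rate of zero the formula falls back to one token per pass, which is ordinary sequential decoding.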

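The beautiful part: the output distribution is mathematically identical to the large model's. Each drafted token is accepted with a probability that depends on how the two models' probabilities for it compare. The first rejected token and everything drafted after it are discarded, and a replacement is sampled from a corrected distribution. This accept/reject rule is what guarantees the output matches, in distribution, what the large model would have produced on its own.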
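To make the exactness claim concrete, here is a minimal sketch of the accept/reject rule from the speculative sampling papers, applied to a single position with a three-token toy vocabulary. The distributions `p_target` and `q_draft` are invented for illustration; a real system gets them from the two models' logits and applies the rule to a whole run of drafted tokens.

```python
import random

def speculative_step(p_target, q_draft, draft_token, rng):
    """Accept the drafted token, or replace it so the result follows p_target.

    p_target, q_draft: probability lists over the vocabulary at this position.
    Returns (token, accepted).
    """
    p, q = p_target[draft_token], q_draft[draft_token]
    if rng.random() < min(1.0, p / q):
        return draft_token, True
    # Rejected: resample from the positive part of (p_target - q_draft).
    # random.choices normalizes the weights for us.
    residual = [max(pt - qd, 0.0) for pt, qd in zip(p_target, q_draft)]
    token = rng.choices(range(len(residual)), weights=residual)[0]
    return token, False

rng = random.Random(0)
p_target = [0.6, 0.3, 0.1]   # toy "large model" distribution
q_draft = [0.3, 0.5, 0.2]    # toy "draft model" distribution

N = 50_000
counts = [0, 0, 0]
for _ in range(N):
    drafted = rng.choices(range(3), weights=q_draft)[0]
    token, _accepted = speculative_step(p_target, q_draft, drafted, rng)
    counts[token] += 1

empirical = [c / N for c in counts]
print([round(x, 2) for x in empirical])  # close to p_target, up to sampling noise
```

Even though every token starts life as a sample from the draft distribution, the empirical frequencies track the target distribution.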

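Medusa and EAGLE pushed this further. Medusa replaces the separate draft model with extra decoding heads on the main model that predict several future tokens in parallel. EAGLE drafts at the feature level rather than the token level to raise acceptance rates, and EAGLE-2 adds dynamic draft trees that adapt to context. All of them squeeze more speedup from the same draft-then-verify principle.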

Layer 2: Kernels – FlashAttention

Attention computation is the core of Transformers – and one of the biggest performance bottlenecks.

In naive implementations, intermediate attention results (such as the full score matrix) shuttle back and forth between the GPU’s small, fast on-chip memory (SRAM) and its much larger but slower high-bandwidth memory (HBM). HBM bandwidth lags far behind compute throughput, so the kernel spends most of its time waiting on memory IO rather than doing arithmetic.

FlashAttention reorganizes the computation: it processes attention in tiles that fit in SRAM and minimizes HBM round-trips, so the full score matrix never has to be written out to HBM. This is an IO-aware reordering, not an approximation – the results are exact, but throughput improves dramatically.
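The trick that makes block-by-block processing exact is a running ("online") softmax. Below is a plain-Python sketch for a single query vector, showing that processing keys and values in small blocks gives the same answer as the naive version. It illustrates the math only: the real kernels are fused GPU code, and the function names, block size, and toy data here are assumptions for illustration.

```python
import math

def naive_attention(q, keys, values):
    """Materialize all scores, softmax them, then take the weighted sum."""
    scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    denom = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / denom for d in range(dim)]

def tiled_attention(q, keys, values, block_size=2):
    """Process keys/values one block at a time with a running softmax."""
    dim = len(values[0])
    running_max = -math.inf
    running_denom = 0.0
    acc = [0.0] * dim                          # unnormalized weighted sum of values
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(qi * ki for qi, ki in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        scale = math.exp(running_max - new_max)  # rescale earlier partial results
        running_denom *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_denom += w
            acc = [a + w * vd for a, vd in zip(acc, v)]
        running_max = new_max
    return [a / running_denom for a in acc]

q = [1.0, 0.5]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 2.0], [0.5, 0.5]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]

reference = naive_attention(q, keys, values)
tiled = tiled_attention(q, keys, values, block_size=2)
print(all(math.isclose(a, b, rel_tol=1e-9) for a, b in zip(reference, tiled)))
```

The tiled version only ever holds one block of scores at a time, which is what lets the working set stay in fast memory.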

FlashAttention-2 further optimized parallelism and work partitioning, with bigger gains on long sequences.

Layer 3: Serving – vLLM and Continuous Batching

The first two layers optimize “how to make a single inference faster.” This layer addresses “how to serve ten thousand users simultaneously.”

The core challenge is KV Cache management. Every user’s conversation maintains a KV Cache (key-value pairs from previous tokens), and long conversations can consume gigabytes of GPU memory. Traditional approaches pre-allocate a contiguous chunk of memory sized for each request’s maximum possible length, so much of it sits reserved but unused.

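vLLM introduced PagedAttention: manage KV Cache the way operating systems manage virtual memory – on-demand paging, dynamic reclamation. This sharply cuts wasted KV Cache memory, so more requests fit on the same GPU, which translates directly into higher concurrent capacity (the vLLM paper reports 2-4x higher throughput at comparable latency).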
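The paging idea can be sketched as a block table that maps each sequence to a list of fixed-size physical blocks, allocated only as tokens arrive. This is a toy allocator, not vLLM's actual implementation: the class name `PagedKVCache`, the block size, and the `MAX_SEQ_LEN` used for the comparison are all assumptions for illustration.

```python
BLOCK_SIZE = 16      # tokens per KV block (illustrative)
MAX_SEQ_LEN = 2048   # what a contiguous pre-allocation would reserve (illustrative)

class PagedKVCache:
    """Toy block-table allocator: sequences grow one block at a time."""

    def __init__(self, num_blocks: int):
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}   # seq_id -> list of physical block ids
        self.lengths = {}        # seq_id -> number of cached tokens

    def append_token(self, seq_id: str) -> None:
        length = self.lengths.get(seq_id, 0)
        if length % BLOCK_SIZE == 0:       # last block is full, or none allocated yet
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            self.block_tables.setdefault(seq_id, []).append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1

    def free(self, seq_id: str) -> None:
        """Return a finished sequence's blocks to the pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)

cache = PagedKVCache(num_blocks=8)
for _ in range(40):
    cache.append_token("chat-a")
for _ in range(5):
    cache.append_token("chat-b")

paged_slots = sum(len(t) for t in cache.block_tables.values()) * BLOCK_SIZE
contiguous_slots = len(cache.block_tables) * MAX_SEQ_LEN
print(f"paged: {paged_slots} token slots, contiguous: {contiguous_slots} token slots")

cache.free("chat-a")   # blocks become reusable as soon as the request finishes
print(f"free blocks after chat-a ends: {len(cache.free_blocks)}")
```

The only waste in the paged scheme is the unfilled tail of each sequence's last block.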

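The other key technique is Continuous Batching. Traditional batching waits for an entire batch to finish before starting the next, so slots freed by short requests sit idle until the longest request completes. Continuous Batching (introduced as iteration-level scheduling in Orca) lets requests join and leave the batch at every decoding step, dramatically reducing GPU idle time.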
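A small simulation makes the difference visible. It counts decoding steps under a deliberately simplified model: every step advances each running request by one token, the batch holds a fixed number of slots, and prefill cost is ignored. The request lengths and function names are invented for illustration.

```python
from collections import deque

def static_batching_steps(lengths, batch_size):
    """Each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps

def continuous_batching_steps(lengths, batch_size):
    """Finished requests free their slot; queued requests join at the next step."""
    queue = deque(lengths)
    active = []          # tokens still to generate for each running request
    steps = 0
    while queue or active:
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        steps += 1
        active = [r - 1 for r in active if r > 1]   # drop requests that just finished
    return steps

# Output lengths (in tokens) for eight requests: a few long, mostly short.
lengths = [100, 5, 5, 5, 100, 5, 5, 5]
print("static:    ", static_batching_steps(lengths, batch_size=4))      # 200
print("continuous:", continuous_batching_steps(lengths, batch_size=4))  # 105
```

In the static case the short requests finish early but their slots stay empty. In the continuous case the second long request starts as soon as a slot opens at step 5.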

Layer 4: Model Architecture – MoE and Quantization

The final layer works on the model itself.

MoE (Mixture of Experts) uses many “expert” sub-networks but only activates a few per token. Total parameters can be enormous (preserving capability), but actual compute per token is far less than a dense model of equivalent size. Switch Transformer pioneered this at scale.
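Here is a minimal sketch of the routing step for one token, assuming a generic top-k router. The toy "experts" just scale their input, and the router logits are passed in directly. In a real model the experts are feed-forward networks and the logits come from a learned layer. Switch Transformer corresponds to `top_k=1`.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_logits, experts, top_k=2):
    """Route one token to its top_k experts and mix their outputs."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)     # renormalize over the chosen experts
    out = [0.0] * len(x)
    for i in chosen:                         # only top_k experts are ever evaluated
        y = experts[i](x)
        out = [o + (probs[i] / norm) * yi for o, yi in zip(out, y)]
    return out, chosen

# Toy experts: each just scales its input by a different factor.
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]

out, chosen = moe_forward([1.0, -1.0], router_logits=[0.1, 2.0, 0.3, 1.5], experts=experts)
print(f"experts used: {chosen} of {len(experts)}")
```

All four experts count toward the parameter total, but only two of them do any work for this token.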

Quantization (e.g., AWQ) stores model weights in lower precision (4-bit instead of 16-bit). This directly reduces memory footprint and bandwidth requirements. Done carefully, the accuracy loss is typically small; AWQ, for instance, uses activation statistics to protect the weights that matter most.
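To show the basic mechanics, here is a sketch of plain round-to-nearest 4-bit quantization for one group of weights, plus the memory arithmetic. This is deliberately not AWQ, which adds activation-aware scaling on top. The weight values and the 70B parameter count are made up for illustration, and the storage overhead of scales and offsets is ignored.

```python
def quantize_4bit(weights):
    """Asymmetric round-to-nearest quantization of one weight group to 4 bits."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15                 # 4 bits give 16 levels: codes 0..15
    if scale == 0:
        scale = 1.0                        # all weights equal; any scale works
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo

def dequantize(codes, scale, lo):
    return [c * scale + lo for c in codes]

def weight_gib(num_params: float, bits: int) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * bits / 8 / 2**30

group = [0.12, -0.40, 0.33, 0.05, -0.21, 0.48, -0.02, 0.27]
codes, scale, lo = quantize_4bit(group)
restored = dequantize(codes, scale, lo)
max_err = max(abs(a - b) for a, b in zip(group, restored))
print(f"codes: {codes}")
print(f"max round-trip error: {max_err:.4f} (bounded by scale/2 = {scale / 2:.4f})")

# Hypothetical 70B-parameter model.
print(f"16-bit: {weight_gib(70e9, 16):.1f} GiB, 4-bit: {weight_gib(70e9, 4):.1f} GiB")
```

For the hypothetical 70B model this is roughly 130 GiB versus 33 GiB of weights, a 4x cut in what has to be stored and read on every forward pass.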

What This Means for Developers

These four layers aren’t academic concepts. They’re already deployed in production, directly affecting your experience:

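Agent workflows benefit most. Agents make multiple LLM calls per task, so per-call savings accumulate. The speedup itself does not multiply, though: a 10-step workflow where each step is 2x faster is 2x faster end-to-end (less if tool calls and other non-LLM time dominate), but the absolute time saved is ten times what a single call saves.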
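A back-of-the-envelope check of how per-step speedups add up across a sequential workflow. The step count and the per-step LLM and tool times are hypothetical, and steps are assumed to run strictly one after another.

```python
def workflow_seconds(steps: int, llm_s: float, tool_s: float, llm_speedup: float = 1.0) -> float:
    """Total time for a sequential agent run: each step is one LLM call plus tool time."""
    return steps * (llm_s / llm_speedup + tool_s)

# Hypothetical workflow: 10 steps, 8 s of LLM time and 2 s of tool time per step.
base = workflow_seconds(10, llm_s=8.0, tool_s=2.0)
fast = workflow_seconds(10, llm_s=8.0, tool_s=2.0, llm_speedup=2.0)
print(f"baseline {base:.0f}s, with 2x faster LLM calls {fast:.0f}s "
      f"-> {base / fast:.2f}x end-to-end, {base - fast:.0f}s saved")

# With no tool time at all, the end-to-end speedup equals the per-step speedup.
llm_only = workflow_seconds(10, 8.0, 0.0) / workflow_seconds(10, 8.0, 0.0, llm_speedup=2.0)
print(f"LLM-only workflow: {llm_only:.2f}x")
```

The ratio is capped by the per-step speedup, while the seconds saved grow with the number of steps. That is why long agent runs feel the improvement most.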

Fast modes will become the default. When inference acceleration achieves “same capability, double the speed,” there’s no reason not to enable it by default. Expect more providers to ship similar products.

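Cost and speed are converging. Faster inference = shorter GPU occupancy = lower cost. Most of these techniques reduce latency and cost per token together, though not always for free: speculative decoding, for example, spends extra compute on drafts that end up rejected.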

When evaluating models, look at OTPS, not just benchmark scores. As model capabilities converge, inference speed becomes a key differentiator.

Further Reading

The core papers supporting this analysis, organized by layer:

Algorithm Layer – Speculative Decoding

- Leviathan et al. – Fast Inference from Transformers via Speculative Decoding (ICML 2023)

- Cai et al. – Medusa: Simple LLM Inference Acceleration (2024)

- Li et al. – EAGLE: Speculative Sampling Requires Rethinking Feature Uncertainty (2024)

- Li et al. – EAGLE-2: Faster Inference of Language Models with Dynamic Draft Trees (2024)

Kernel Layer – FlashAttention

- Dao et al. – FlashAttention: Fast and Memory-Efficient Exact Attention (NeurIPS 2022)

- Dao – FlashAttention-2: Faster Attention with Better Parallelism and Work Partitioning (2023)

Serving Layer – Inference Systems

- Kwon et al. – Efficient Memory Management for Large Language Model Serving with PagedAttention (SOSP 2023)

- Yu et al. – Orca: A Distributed Serving System for Transformer-Based Generative Models (OSDI 2022)

Model Layer – Architecture and Quantization

- Lin et al. – AWQ: Activation-aware Weight Quantization (MLSys 2024)

- Fedus et al. – Switch Transformers: Scaling to Trillion Parameter Models (2021)

Sources: OpenAI GPT-5.3-Codex announcement, Anthropic Fast Mode documentation.
