Anthropic Defies Pentagon, Outearns OpenAI in Enterprise: New Fronts in the AI Race

Hosts: Peter Diamandis (Founder, XPRIZE; Co-founder, Singularity University), Salim Ismail (Founder, ExO Works; Author, Exponential Organizations), Alex (Newsletter author)

Source: WTF Moonshots #234 | Duration: 02:09:03

Full Transcript: Speaker-identified transcript

Introduction

Peter Diamandis has founded 17 companies. The XPRIZE Foundation he created has launched more than $300 million in prizes incentivizing technological breakthroughs. Salim Ismail authored Exponential Organizations and served as executive director of Singularity University, focusing on how large institutions adapt to exponential technology disruption. Alex writes a newsletter on AI policy and industry dynamics, describing his content as almost entirely hand-written.

WTF Moonshots #234 was recorded in late February 2026, running 129 minutes across 43 topics. The three hosts’ areas of focus directly reflect their professional orientations: Diamandis gravitates toward the technology’s exponential jumps, Ismail toward organizational adaptation, and Alex toward policy and market signals.

This episode’s most significant narratives are intertwined: AI safety guardrails are becoming bargaining chips in commercial contracts; enterprise markets price AI very differently from consumers; geopolitics is diffusing the AI map outward from Silicon Valley; and consulting has arrived at what Diamandis calls its “dinosaur moment.”

Anthropic vs. Pentagon: A $200M Stand on AI Safety

Diamandis opened the episode with the week’s most significant AI story:

“The Pentagon would like to be able to not just control any legal usage of models that they’ve paid for, but also would like to shape the cultural values.”

The situation: the Pentagon (referred to as the “War Department” in the episode) asked Anthropic to remove Claude’s AI safety guardrails for use in surveillance and autonomous weapons systems. Dario Amodei (Anthropic CEO) refused, putting a reported $200 million in government contracts at risk.

The dispute goes beyond military AI ethics. The hosts noted a specific detail: what the Pentagon wanted was not merely to unlock certain restricted capabilities, but to “shape the cultural values” of the model — suggesting intervention at the training level, not just through system prompts at inference time.

Salim offered a framework: training time is where values are embedded; inference time allows behavioral adjustment through system prompts, but cannot change the underlying value foundation. What the Pentagon wanted was access to the former.

All three hosts were measured in their response. Diamandis predicted the two sides would “find a way to resolve this amicably,” calling it a “tricky situation,” without strongly taking either side. The episode did not explore whether Anthropic could create a separate, de-guardrailed model version for government clients — a notable gap in the discussion.

On the matter of AI safety guardrails becoming commercial negotiating chips, Dario’s refusal is one data point, not a conclusion.

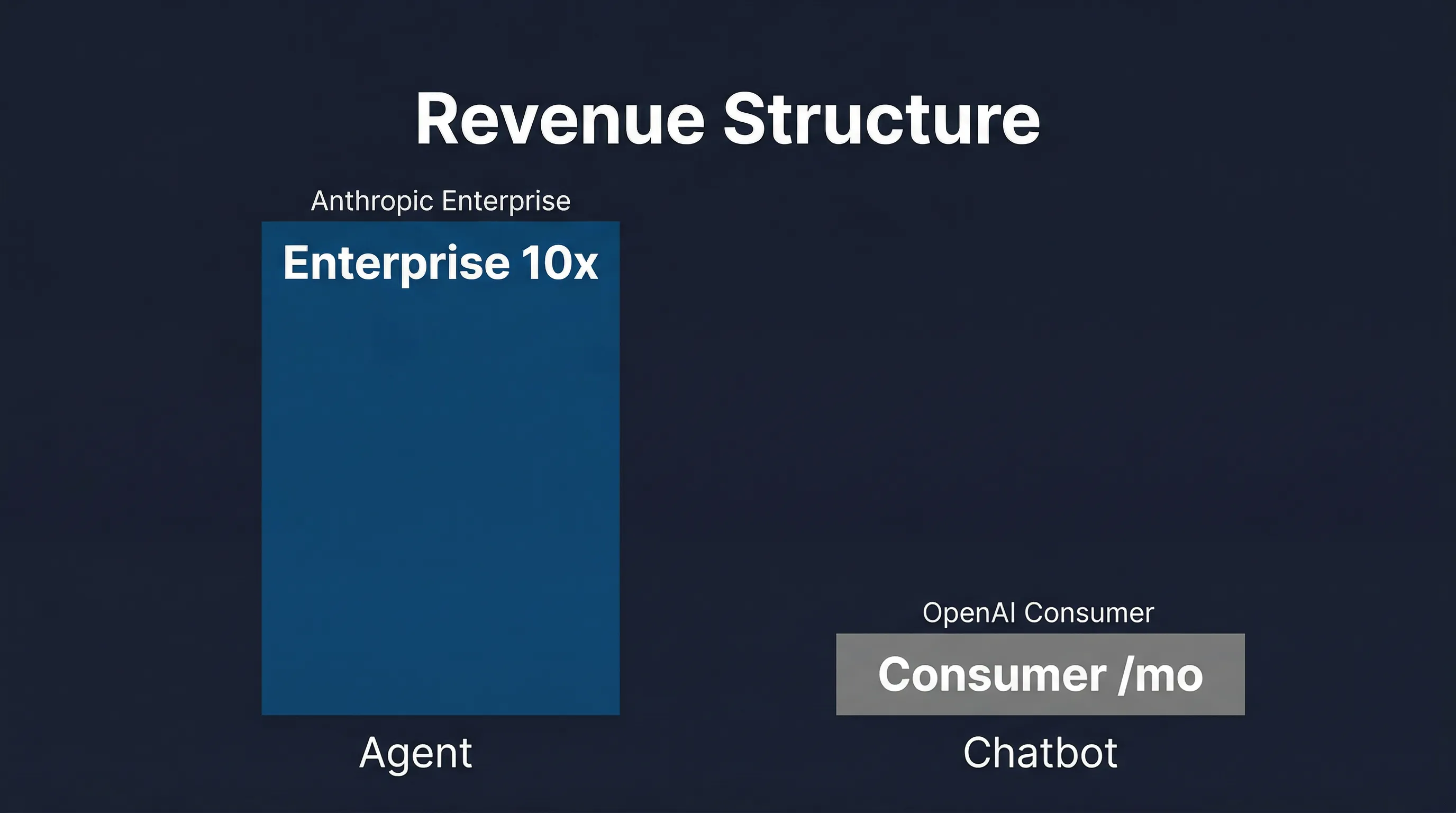

Enterprise vs. Consumer: Why Anthropic Reportedly Earns 10x More Enterprise Revenue Than OpenAI

Diamandis then displayed a chart with a headline figure: Anthropic’s enterprise revenue is 10x that of OpenAI.

His interpretation: this isn’t a consequence of agents being technically superior to chatbots — it’s a market positioning difference. OpenAI targets the consumer market (ChatGPT at $20/month); Anthropic targets the enterprise market (Claude for Enterprise).

“I think this is less about chatbots versus agents. I think this is more about consumer versus enterprise.”

The discussion turned to a related question: why do agents appear to monetize faster than chatbots? The hosts’ answer follows from the same consumer-versus-enterprise split: enterprises pay for quantifiable productivity gains, while consumers are harder to convert to high-value subscriptions. In enterprise contexts, Claude can integrate with codebases, documents, and workflows, producing outputs tied directly to business value; for ordinary users, ChatGPT is more “useful tool” than “indispensable infrastructure.”

Diamandis then offered a projection he himself flagged with uncertainty: at current growth rates, Anthropic would reach $1 trillion in revenue by 2029. An OpenAI Codex lead separately predicted that AI agents would evolve rapidly within 10 weeks, with capability jumps measured in weeks rather than quarters.

Worth noting: the “10x” figure lacks a cited source, and the trillion-dollar projection simply carries the current growth rate forward unchanged through 2029. AI market dynamics have historically been nonlinear, and growth rates seldom hold constant. These numbers are useful for directional understanding, not financial forecasting.
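How sensitive such a projection is to its growth assumption can be sketched with a few lines of arithmetic. The snippet below is a minimal illustration, not a reconstruction of Diamandis’s calculation: the starting revenue (`HYPOTHETICAL_BASE_USD`), the 2026 start year, and the function names are all assumptions, since no starting figure is given here. It computes the constant year-over-year multiple needed to reach a target, then contrasts a compounding path with a straight-line one.

```python
def required_annual_multiple(base: float, target: float, years: int) -> float:
    """Constant year-over-year multiple that grows `base` to `target` in `years`."""
    if base <= 0 or target <= 0 or years <= 0:
        raise ValueError("base, target, and years must all be positive")
    return (target / base) ** (1 / years)


def compound_path(base: float, multiple: float, years: int) -> list[float]:
    """Revenue per year if the same multiple is applied every year."""
    return [base * multiple ** n for n in range(years + 1)]


def linear_path(base: float, target: float, years: int) -> list[float]:
    """Revenue per year if the same dollar amount is added every year."""
    step = (target - base) / years
    return [base + step * n for n in range(years + 1)]


# Placeholder inputs: these are NOT figures from the episode.
HYPOTHETICAL_BASE_USD = 10e9  # assumed starting annual revenue
TARGET_USD = 1e12             # the $1 trillion figure mentioned in the episode
YEARS = 3                     # assumed horizon, 2026 -> 2029

multiple = required_annual_multiple(HYPOTHETICAL_BASE_USD, TARGET_USD, YEARS)
print(f"Required multiple per year: {multiple:.2f}x")

compound = compound_path(HYPOTHETICAL_BASE_USD, multiple, YEARS)
linear = linear_path(HYPOTHETICAL_BASE_USD, TARGET_USD, YEARS)
for year, (comp, lin) in enumerate(zip(compound, linear), start=2026):
    print(f"{year}: compound ${comp / 1e9:,.0f}B | straight-line ${lin / 1e9:,.0f}B")
```

With these placeholder inputs, revenue would have to grow about 4.6x every year for three consecutive years. Changing the assumed base or horizon moves the required multiple substantially, which is why the projection is better read as directional than as a forecast.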

India’s $250B AI Bet: Geopolitical Reshaping of the AI Map

A substantial portion of the episode analyzed the third AI Impact Summit, held in India.

Salim — whom Diamandis called the episode’s India expert — offered the most detailed observations:

“India did a brilliant job positioning itself as AI neutral. Nation states are becoming hyperscalers, and hyperscalers are kind of deeply wiring into nation states.”

The data: 88 countries signed the New Delhi Declaration, the first global AI agreement. Total AI investment commitments at the summit exceeded $250 billion, with Google alone committing $15 billion to build a “full-stack AI hub” in India.

Dario, Sam Altman, Sundar Pichai, Demis Hassabis, and Yann LeCun attended alongside Prime Minister Modi. Elon Musk’s absence was noted by all three hosts.

The New Delhi Declaration centered on inference diffusion, not training. Salim explained the emerging global pattern: frontier models continue to be trained in the United States, while countries seek local inference capacity plus system-prompt-level value localization. He framed training time as “laying the foundation” and inference time as “furnishing the space above it.”

Diamandis raised a geopolitical concern: would India tilt toward Chinese models (a digital Belt and Road equivalent)? No definitive answer emerged, but the hosts agreed that major US tech companies’ aggressive investment in India is substantially a response to this risk.

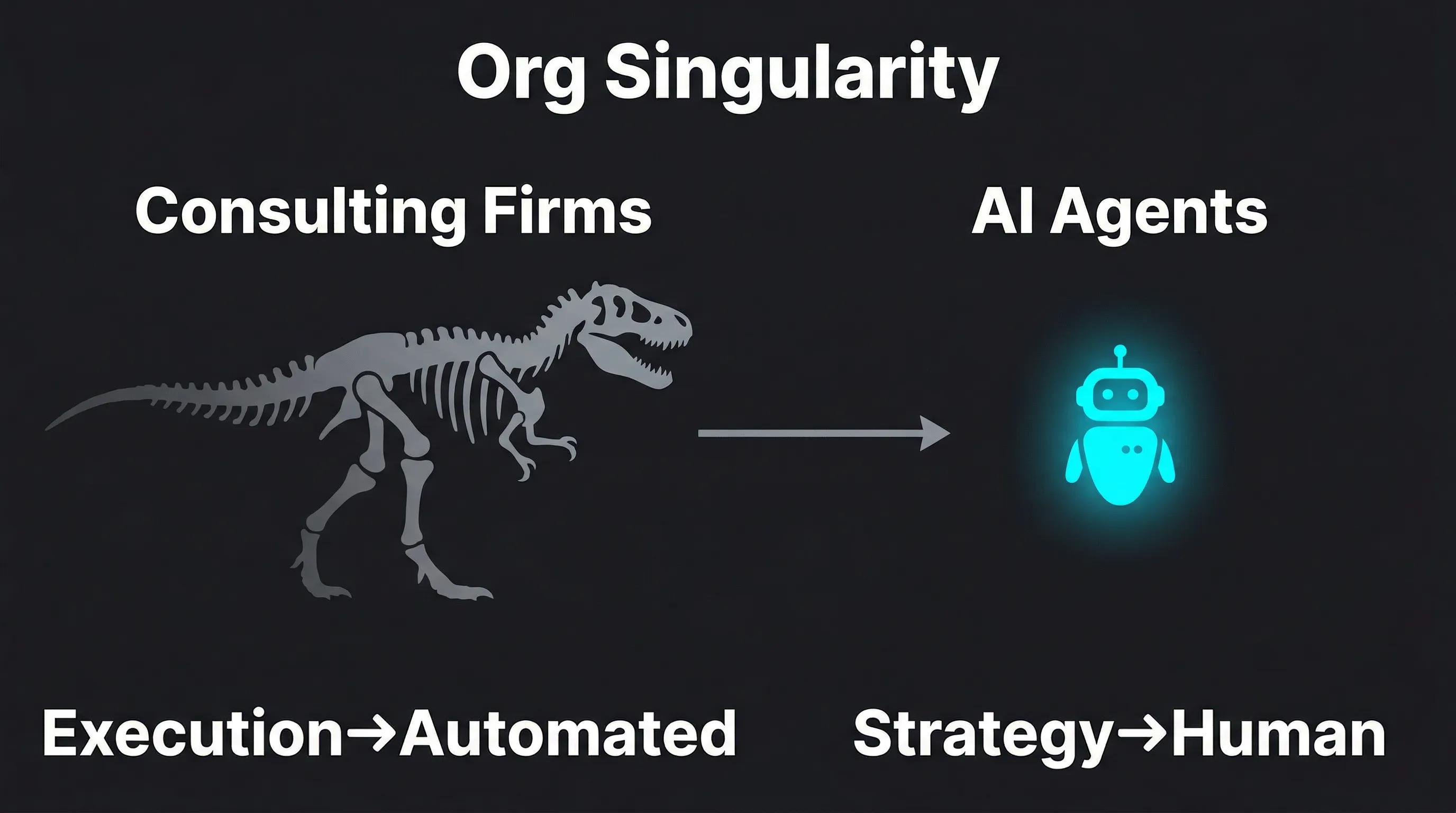

The Organizational Singularity: Consulting’s Dinosaur Moment

This segment offered the episode’s most substantive conceptual contribution: the two main hosts presented different but complementary frameworks.

Diamandis shared a firsthand account: he had recently spoken to leadership teams at several top-tier consulting firms. They were “scared shitless.” His diagnosis: consulting is experiencing what he calls the “Organizational Singularity”:

“Right now all workflows in all organizations are human-centric. That’s going to move to the agentic workflow where there won’t be humans in the loop. What is the future of organizations in that?”

Then he pivoted to an almost opposite conclusion:

“We need to rebuild every institution and re-architect every institution by which we run the world. And that is the biggest advisory opportunity in the history of mankind.”

He deployed a well-worn analogy: 66 million years ago, a 10-kilometer asteroid struck Earth and changed the environment so rapidly that slow, lumbering dinosaurs went extinct. The agile, furry small mammals evolved into humans. “The asteroid striking the planet today is AI exponential technologies. And you have a choice. Be agile and evolve or die.”

Salim provided a more granular analysis of consulting’s future. He argued that merging audit firms and consulting firms (a trend being discussed in the industry) is “a terrible idea” — because audit functions will be replaced by AI and blockchain, with financial systems moving toward real-time self-auditing. There will be no need for human auditors in that model.

But he argued that strategic advisory has a reasonably bright future. His logic: when the execution layer is automated, there is actually greater demand for higher-level strategic judgment — “tell us what to do, not help us do it.”

The two hosts’ positions are subtly but meaningfully different: Diamandis frames institutional reconstruction as opportunity, while Salim predicts that the execution layer disappears and the strategy layer survives. These are not mutually exclusive, but they emphasize different things.

The Cambrian Explosion of AI Agents: From Codebases to Dating Markets

This segment best captured the state of the AI agent ecosystem in early 2026 — simultaneously hosting serious enterprise applications and strange edge-case experiments.

Blitzy is an enterprise AI agent platform that says it deploys thousands of specialized AI agents to process enterprise codebases containing millions of lines of code. Positioned as a pre-IDE development tool, it claims a 5x increase in engineering velocity. The segment was part advertisement, so the numbers are vendor claims, but they are still worth noting.

The New York Times deployed an AI reporter agent to interview other AI agents — an experiment in AI-on-AI journalism. The hosts interpreted this as traditional media exploring how much of a reporter’s work agents can perform.

The stranger case involved OpenClaw (an AI agent platform): an AI agent posted a $50 bounty, seeking someone willing to arrange a dinner date between it and “its human.” Diamandis commented: “We’re going to see this play out, albeit maybe without paid bounties, over and over again in human relationships.” Salim was more skeptical: “The really transformative apps are on the enterprise side, not on social discovery” — implying this was more gimmick than signal.

The most notable contribution came from Andrej Karpathy, who endorsed OpenClaw as redefining the autonomous agent stack — a new layer above LLM agents, taking context, tool calls, and persistence to the next level. He added:

“The next technical layer is just going to be models rewriting themselves through recursive self-improvement. I suspect the next major revolutions in foundation models will come from the small side.”

Karpathy’s prediction that breakthroughs will come from small models, rather than from continued scaling of parameter counts, created a notable internal tension with the episode’s broader “bigger is better” AI narrative.

$100 Whole Genome: Medicine’s Cost Singularity

Element Biosciences launched the Vitari device, enabling $100 whole-genome sequencing.

The benchmark comparison: the Human Genome Project cost approximately $3 billion to produce the first human genome sequence (its working draft was announced in 2000 and the project was declared complete in 2003); by the mid-2010s, the cost had fallen to roughly $1,000; Vitari brings it to $100.
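The scale of that decline is easier to appreciate as fold reductions. Here is a small sketch using only the three figures quoted above. The labels and the function name are illustrative, and the $100 figure is the vendor’s claimed price.

```python
def fold_reduction(before_usd: float, after_usd: float) -> float:
    """How many times cheaper `after_usd` is compared with `before_usd`."""
    if before_usd <= 0 or after_usd <= 0:
        raise ValueError("costs must be positive")
    return before_usd / after_usd


# Per-genome cost milestones as quoted in the text above.
MILESTONES = [
    ("Human Genome Project", 3_000_000_000),
    ("mid-2010s", 1_000),
    ("Vitari (claimed)", 100),
]

# Compare each milestone with the one that follows it.
for (prev_label, prev_cost), (label, cost) in zip(MILESTONES, MILESTONES[1:]):
    print(f"{prev_label} -> {label}: {fold_reduction(prev_cost, cost):,.0f}x cheaper")

total = fold_reduction(MILESTONES[0][1], MILESTONES[-1][1])
print(f"Overall: {total:,.0f}x cheaper")
```

The overall drop works out to a factor of 30 million, and nearly all of it (a factor of 3 million) occurred before the $1,000 milestone. The step to $100 is a further 10x.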

All three hosts expressed clear enthusiasm. Diamandis:

“This is going to change the game across medicine. There are all sorts of exotic applications that open up as the cost of genome sequencing goes to zero.”

The “exotic applications” weren’t specified in detail. Plausible candidates as the cost approaches zero include routine personal genomic testing, rapid food safety authentication, large-scale genomic surveys for conservation biology, and broad application in cancer early detection and personalized drug selection.

The episode did not address what comes with this capability: genomic data privacy, the risk of genetic discrimination in insurance and employment, or whether $100 refers to the device cost or the fully-loaded sequencing cost including reagents. These silences are meaningful.

Editorial Analysis

Positional Bias

All three hosts are proponents of technological accelerationism, and the episode’s tone is optimistic — at times approaching advocacy. Diamandis’s XPRIZE and Singularity University brands are built on the narrative that “exponential technology solves human problems”; Salim’s Exponential Organizations is premised on the same. Alex is the most restrained of the three, but aligns with this framework overall.

This doesn’t make their observations wrong, but this show is unlikely to produce strong critiques of AI development’s pace or direction.

Selective Reasoning

The “10x revenue” claim lacks a cited source and does not specify the calculation basis (ARR, total revenue, or a specific quarter).

The trillion-dollar 2029 projection carries the current growth rate forward unchanged. Growth rates in AI markets rarely hold steady. This number should be read as directional intuition, not financial forecasting.

Blockchain self-auditing faces substantial regulatory barriers in practice. Accounting standards, audit liability frameworks, and legal accountability structures won’t automatically dissolve because the technology enables self-auditing. Salim’s framework holds technically but overestimates the speed of institutional change.

Missing Voices

On Anthropic’s refusal of the Pentagon: the episode didn’t explore whether the Pentagon’s requests might include legitimate security use cases (like adversarial AI red-teaming, rather than actual weapons deployment), or whether Anthropic could offer a separate government version with a different, negotiated set of guardrails. The absoluteness of “refusal” may obscure a more complex negotiation space.

On consulting’s future: McKinsey, Deloitte, and similar firms are already investing heavily in AI capabilities. The “dinosaur” analogy may underestimate these institutions’ adaptation speed. Dinosaurs could not adapt on the timescale of the impact; consulting firms can hire, acquire, and pivot.

On India’s AI neutrality: deep infrastructure dependencies (GPU clusters, cloud services, model APIs) structurally produce de facto technological alignments. Pure neutrality is difficult to sustain in practice.

Facts to Verify

- The accuracy of the $200M Anthropic government contract figure

- The data source and calculation basis for Anthropic’s “10x” enterprise revenue claim

- The timeframe of the $250B India AI investment commitment (1 year? 5 years?)

- Independent validation of Blitzy’s 5x engineering velocity claim

Key Takeaways

AI safety guardrails are becoming commercial bargaining chips. When AI capabilities become powerful enough that buyers start demanding customized value systems, that is both a sign of AI maturity and a signal of rising risk. Dario’s refusal, which put a reported $200M in government contracts at risk, made this tension visible.

Enterprise is where AI revenue actually lives. Consumers pay $20/month; enterprises pay prices justified by quantifiable ROI multiples. These two markets have fundamentally different pricing logic for AI, and Anthropic’s strategic choice explains the gap.

The AI map is geopoliticizing. India’s AI neutrality strategy is emblematic of how Global South nations are searching for a third path in the US-China AI competition. The “US trains, world infers” model keeps the tension between technology diffusion and geopolitical control at a sustained boil.

Consulting’s threat and opportunity share the same source. AI agents automating the execution layer simultaneously amplify demand for the strategy layer. The “Organizational Singularity” framework’s value lies in unifying threat and opportunity under a single narrative.

AI agent autonomy is extending into social contexts. From code tasks to dating bounties, the action space of agents is expanding. This is both a demonstration of technical capability and a preview of questions about human-machine boundaries.

Synthesized from WTF Moonshots #234, recorded February 2026.
