xAI MacroHard: What Is Elon Musk's 'Human Emulator' Actually Doing?

Why I Investigated This

It started with a three-hour Elon Musk interview on the Dwarkesh Patel podcast (February 5, 2026). The host repeatedly pressed Musk on xAI’s competitive strategy. Elon clearly didn’t want to say too much, but he dropped a very specific hint:

“I think I know the path to do this because it’s kind of the same path that Tesla used to create self-driving. Instead of driving a car, it’s driving a computer screen. It’s a self-driving computer, essentially.”

Then he cut to an ad break.

That phrase — “self-driving computer” — stuck with me. Shortly before, an xAI engineer named Sulaiman Ghori had been fired (or “voluntarily departed”) after revealing too much about an internal project called MacroHard on a podcast. He described in detail what xAI was actually building.

So I ran a systematic investigation, cross-referencing both podcasts with Reddit/Twitter discussions and technical analysis articles to piece together what xAI is really doing.

What Is MacroHard?

MacroHard (a play on Microsoft: “macro” + “hard” vs. “micro” + “soft”) is the codename of an internal xAI project. The core goal: build a Human Emulator.

In plain terms: AI watches the screen, understands what’s on it, then operates keyboard and mouse — fully simulating how a human uses a computer. No API integration needed. It works with existing software exactly as a human employee would.
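The watch, understand, operate cycle described above can be sketched as a simple loop. This is a minimal toy, not xAI's implementation: `FakeScreen`, `Action`, `scripted_policy`, and `run_agent` are hypothetical names invented for illustration, the "screenshot" is a small dictionary rather than pixels, and a hand-written rule stands in for the model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Action:
    kind: str          # "click", "type", or "done"
    target: str = ""   # hypothetical UI element name, used by "click"
    text: str = ""     # characters to enter, used by "type"


@dataclass
class FakeScreen:
    """Toy stand-in for a desktop: a login form with two fields and a button."""
    fields: Dict[str, str] = field(
        default_factory=lambda: {"username": "", "password": ""}
    )
    focused: Optional[str] = None
    submitted: bool = False

    def screenshot(self) -> dict:
        # A real system would return pixels; this returns a structured snapshot.
        return {
            "fields": dict(self.fields),
            "focused": self.focused,
            "submitted": self.submitted,
        }

    def apply(self, action: Action) -> None:
        # Translate an abstract action into a state change, like mouse/keyboard input.
        if action.kind == "click":
            if action.target == "submit":
                self.submitted = True
            else:
                self.focused = action.target
        elif action.kind == "type" and self.focused is not None:
            self.fields[self.focused] += action.text


def scripted_policy(obs: dict) -> Action:
    """Placeholder for the model: chooses the next action from the observation only."""
    for name, value in (("username", "alice"), ("password", "hunter2")):
        if obs["fields"][name] == "":
            if obs["focused"] != name:
                return Action("click", target=name)
            return Action("type", text=value)
    if not obs["submitted"]:
        return Action("click", target="submit")
    return Action("done")


def run_agent(
    screen: FakeScreen,
    policy: Callable[[dict], Action],
    max_steps: int = 20,
) -> List[Action]:
    """Observe, decide, act; stop when the policy says done or the budget runs out."""
    history: List[Action] = []
    for _ in range(max_steps):
        obs = screen.screenshot()   # watch the screen
        action = policy(obs)        # understand and decide
        if action.kind == "done":
            break
        screen.apply(action)        # operate keyboard and mouse
        history.append(action)
    return history
```

Calling `run_agent(FakeScreen(), scripted_policy)` fills in both fields and presses submit. The point of the sketch is the shape of the loop: the policy sees only what is on screen and emits only input events, which is why no API integration with the target software is needed.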

If Tesla’s Optimus robot emulates humans in the physical world, MacroHard does the same in the digital world.

What Elon Hinted vs. What Sully Leaked

This is the most revealing part. Nearly everything Elon deliberately withheld in his interview, Sully (Sulaiman Ghori) spelled out in his own:

| Topic | Elon’s Statement | Sully’s Leak |
|---|---|---|
| Methodology | “Same path as Tesla self-driving” | Multiple novel architectures in parallel, at least one not based on existing work |
| Speed | Not mentioned | 1.5x to 8x+ human operation speed |
| Deployment | Not mentioned | Plans to use idle HW4 chips in 4M North American Teslas for distributed inference |
| Internal testing | Not mentioned | Virtual employees already running inside xAI, colleagues think they’re real people |
| Iteration speed | Not mentioned | Models updated daily, sometimes multiple times per day from pre-training |
| Scale target | Hinted at “thousands to tens of thousands” | 1,000 to 1,000,000 virtual employees |

No wonder Sully was no longer at xAI days after the interview dropped.

Technical Architecture: Self-Driving Computer

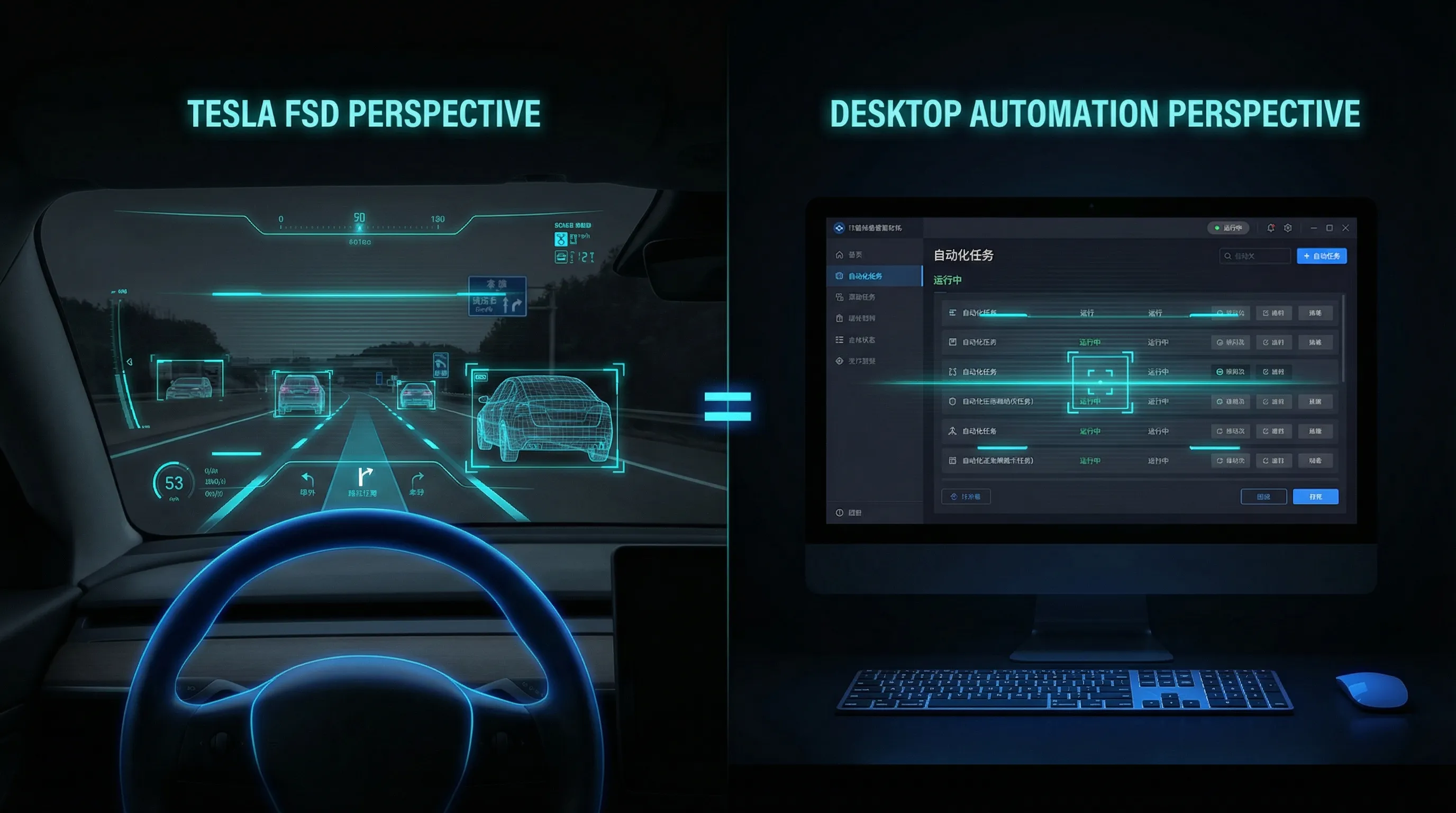

MacroHard’s core architecture maps directly onto Tesla FSD:

| Tesla FSD | MacroHard |
|---|---|
| Camera watches the road | Screenshots capture the screen |
| Understands road conditions | Understands UI and workflows |
| Controls steering wheel | Controls keyboard and mouse |
| Trained on human driving data | Trained on human screen operation data |
| Fleet OTA updates | Multiple daily model updates |

The most counterintuitive design decision: xAI chose small models + blazing fast inference over the large model + long reasoning chain approach used by other labs. The reported result is 1.5x to 8x+ human speed, so at the top of that range one virtual employee does the work of eight people.

The deployment plan is even more radical. North America has roughly 4 million Teslas; about half have HW4 chips, sitting idle 70-80% of the time while charging. xAI plans to pay owners to lease idle compute, running Human Emulators directly on the cars. No data centers needed — pure software deployment.
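To get a feel for the numbers in that paragraph, here is a back-of-the-envelope calculation using only the figures quoted above (4 million cars, about half with HW4, idle 70-80% of the time). The function name `idle_fleet_equivalents` is made up for this sketch, and the result is a count of "always-on car equivalents", not a throughput estimate, since the source gives no per-chip inference figures.

```python
def idle_fleet_equivalents(total_cars: int, hw4_share: float,
                           idle_low: float, idle_high: float):
    """Return (HW4 cars, low estimate, high estimate) of always-on car equivalents."""
    hw4_cars = round(total_cars * hw4_share)
    # A car that is idle 70% of the time contributes 0.7 of a full-time chip.
    return hw4_cars, round(hw4_cars * idle_low), round(hw4_cars * idle_high)


hw4_cars, low, high = idle_fleet_equivalents(4_000_000, 0.5, 0.70, 0.80)
print(f"HW4 cars: {hw4_cars:,}")                       # HW4 cars: 2,000,000
print(f"Always-on equivalents: {low:,} to {high:,}")   # 1,400,000 to 1,600,000
```

So the claim amounts to roughly 1.4 to 1.6 million chips' worth of continuous compute, before accounting for owners who opt out, connectivity, or power limits, none of which the source quantifies.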

Community Reaction

Across the Reddit/Twitter discussions I read, sentiment splits roughly 40% supportive, 30% skeptical, 30% wait-and-see.

Supporters call this the “last missing piece” in AI — once AI can operate a computer like a human, virtually all digital work becomes automatable.

Skeptics point out that FSD deals with relatively standardized road scenarios, while screen UIs are vastly more diverse. One commenter wrote: “You’ve invented the world’s most expensive intern with a GPU bill.”

Pragmatists note that Anthropic’s Computer Use and OpenAI’s Operator already do similar things. MacroHard’s differentiation isn’t methodology — it’s execution scale and speed.

Can You Replicate This Yourself?

This was my main question. The answer: the core interaction pattern, yes. The scale effects, no.

What You Can Replicate

Browser Use (open source, 50k+ GitHub stars) + Claude Sonnet API gives you a working browser automation agent for $5-20/month. It can search, fill forms, extract data, and handle multi-tab workflows — covering most daily browser-based work.

For full desktop control, the Anthropic Computer Use API provides the complete screenshot → understanding → keyboard/mouse pipeline at $20-50/month.

What You Can’t Replicate

- Data flywheel: xAI has massive human operation data → better models → more deployment → more data. This loop can’t be bootstrapped individually.

- 8x speed: API latency + inference = 2-5 seconds per step, far from 8x human speed.

- Novel architectures: xAI is developing potentially non-Transformer architectures — this requires their level of compute to experiment with.

- Million-scale concurrency: An infrastructure problem, not a personal one.
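The speed gap in the list above is easy to quantify. The sketch below compares the 2-5 seconds per step quoted for API-based agents against a human baseline. The 2-second human step time is purely an assumption chosen for illustration (the source gives no such figure), and both function names are hypothetical.

```python
def speedup(human_step_s: float, agent_step_s: float) -> float:
    """How many times faster than a human the agent is, per step."""
    return human_step_s / agent_step_s


def required_step_latency(human_step_s: float, target_speedup: float) -> float:
    """Per-step latency the agent needs in order to hit a target speedup."""
    return human_step_s / target_speedup


HUMAN_STEP_S = 2.0  # ASSUMPTION: a human takes about 2 s per UI action

for agent_step_s in (2.0, 5.0):  # the 2-5 s per step range quoted above
    print(f"{agent_step_s:.1f} s/step -> {speedup(HUMAN_STEP_S, agent_step_s):.2f}x human speed")

print(f"8x needs {required_step_latency(HUMAN_STEP_S, 8):.2f} s per step")
```

Under that assumption an API-driven agent runs at 0.4x to 1x human speed, and reaching 8x would require each screenshot, inference, and action round trip to finish in about a quarter of a second. Change `HUMAN_STEP_S` to match your own workflow and the conclusion shifts proportionally.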

Best Entry Point

Start with Browser Use + Claude Sonnet, focused on your three most repetitive workflows. Most knowledge work happens in the browser, and going narrow and deep beats going wide and shallow. That’s your personal MacroHard.

Conclusion

MacroHard isn’t science fiction, and it isn’t a slide deck. Virtual employees are already running inside xAI.

Its real moat isn’t the technical methodology (screenshot → understand → operate — Anthropic already does this). It’s three stacked flywheels: massive data, extreme iteration speed, and the Tesla fleet compute network.

But here’s the good news for individual developers: the core methodology has been replicated by the open-source community. You can start for $5-20/month. You don’t need to wait for xAI to sell you a product — you can start building now.


This article is based on a comprehensive investigation of Elon Musk’s interview (Dwarkesh Patel Podcast, February 5, 2026), Sulaiman Ghori’s interview (Relentless Podcast), Reddit/Twitter community discussions, and multiple technical analysis articles.
